Embedding Procedures

Overview

This document lists the procedures used for requesting embedding, running embedding jobs, and retrieving data. Please note that some steps require privileged accounts.

Embedding Documentation

Overview

The purpose of embedding is to provide physicists with a known reference: Monte Carlo tracks, whose kinematics and particle type are known exactly, are introduced into the realistic environment seen in the analysis, i.e. a real data event. How reconstruction performs on these "standard candle" tracks provides a baseline that can be used to correct for acceptance and efficiency effects in various analyses.

In STAR, embedding is achieved with a set of software tools (Monte Carlo simulation software as well as the tools for real data event reconstruction), accessed through a suite of scripts. These documents provide an introduction to the scripts and their use; for deeper questions about the simulation and reconstruction processes themselves, STAR collaborators are referred to the separate web pages maintained for Simulations and Reconstruction.

The general procedure for embedding is:

- The Physics Working Group Convenor submits an embedding request. The Analysis Coordinator assigns the request a request number and a priority; see this page for more information.

- The Embedding Coordinator coordinates the distribution of embedding requests. Users who wish to run their own embedding are encouraged to do so, but must first clear this with the Embedding Coordinator.

- The Embedding Coordinator is responsible for the QA of all embedding requests. When the Embedding Coordinator has verified that the test sample produced is acceptable, full production is authorized.

For EC and ED(s) (OBSOLETE)

Embedding instructions for EC and ED(s)

Last updated on Sept/06/2011 by Christopher Powell

Current Embedding Coordinator (EC): Xianglei Zhu (zhux@rcf.rhic.bnl.gov)

Current Embedding Deputy (ED):

Current PDSF Point Of Contact (POC): Jeff Porter (RJPorter@lbl.gov)

- Sept/06/2011: Updated EC and ED

- Nov/17/2010: Added some tickets for the records

- Aug/02/2010: Updated directory structure for LOG and Generator files

- Jun/11/2010: Added chains approved by Lidia

- May/29/2010: Modified procedures in 'Production chain options'

- May/27/2010: Several minor fixes

- May/25/2010: Added 'Production chain options' and 'Locations of outputs etc at HPSS'

- May/24/2010: Updated trigger id options

- May/21/2010: Updated instructions for xml file, useful discussions

<?xml version="1.0" encoding="utf-8"?>
<!-- Generated by StRoot/macros/embedding/get_embedding_xml.pl on Mon Aug 2 15:26:13 PDT 2010 -->
<job maxFilesPerProcess="1" fileListSyntax="paths">
<command>
<!-- Load library -->
starver SL07e
<!-- Set tags file directory -->
setenv EMBEDTAGDIR /eliza3/starprod/tags/ppProductionJPsi/P06id
<!-- Set year and day from filename -->
setenv EMYEAR `StRoot/macros/embedding/getYearDayFromFile.pl -y ${FILEBASENAME}`
setenv EMDAY `StRoot/macros/embedding/getYearDayFromFile.pl -d ${FILEBASENAME}`
<!-- Set log files area -->
setenv EMLOGS /project/projectdirs/star/embedding
<!-- Set HPSS outputs/LOG path -->
setenv EMHPSS /nersc/projects/starofl/embedding/ppProductionJPsi/JPsi_&FSET;_20100601/P06id.SL07e/${EMYEAR}/${EMDAY}
<!-- Print out EMYEAR, EMDAY, EMLOGS and EMHPSS -->
echo EMYEAR : $EMYEAR
echo EMDAY : $EMDAY
echo EMLOGS : $EMLOGS
echo EMHPSS : $EMHPSS
<!-- Start job -->
<!-- Inside the xml file, '<' must be written as &lt; (the heredoc and std::vector below) -->
echo 'Executing bfcMixer_TpcSvtSsd(1000, 1, 1, "$INPUTFILE0", "$EMBEDTAGDIR/${FILEBASENAME}.tags.root", 0, 6.0, -1.5, 1.5, -200, 200, 160, 1, triggers, "P08ic", "FlatPt"); ...'
root4star -b &lt;&lt;EOF
std::vector&lt;Int_t&gt; triggers;
triggers.push_back(117705);
triggers.push_back(137705);
triggers.push_back(117701);
.L StRoot/macros/embedding/bfcMixer_TpcSvtSsd.C
bfcMixer_TpcSvtSsd(1000, 1, 1, "$INPUTFILE0", "$EMBEDTAGDIR/${FILEBASENAME}.tags.root", 0, 6.0, -1.5, 1.5, -200, 200, 160, 1, triggers, "P08ic", "FlatPt");
.q
EOF
ls -la .
cp $EMLOGS/P06id/LOG/$JOBID.log ${FILEBASENAME}.$JOBID.log
cp $EMLOGS/P06id/LOG/$JOBID.elog ${FILEBASENAME}.$JOBID.elog
<!-- New command to organize log files -->
mkdir -p $EMLOGS/P06id/JPsi_20100601/LOG/&FSET;
mv $EMLOGS/P06id/LOG/$JOBID.* $EMLOGS/P06id/JPsi_20100601/LOG/&FSET;/
<!-- Archive in HPSS -->
hsi "mkdir -p $EMHPSS; prompt; cd $EMHPSS; mput *.root; mput ${FILEBASENAME}.$JOBID.log; mput ${FILEBASENAME}.$JOBID.elog"
</command>
<!-- Define locations of log/elog files -->
<stdout URL="file:/project/projectdirs/star/embedding/P06id/LOG/$JOBID.log"/>
<stderr URL="file:/project/projectdirs/star/embedding/P06id/LOG/$JOBID.elog"/>
<!-- Input daq files -->
<input URL="file:/eliza3/starprod/daq/2006/st*"/>
<!-- csh/list files -->
<Generator>
<Location>/project/projectdirs/star/embedding/P06id/JPsi_20100601/LIST</Location>
</Generator>
<!-- Put any locally-compiled code into a sand-box -->
<SandBox installer="ZIP">
<Package name="Localmakerlibs">
<File>file:./.sl44_gcc346/</File>
<File>file:./StRoot/</File>
<File>file:./pams/</File>
</Package>
</SandBox>
</job>

1. Set up daq/tags files

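The daq files are given by the input element and the tags files by EMBEDTAGDIR:

<!-- Input daq files -->
<input URL="file:/eliza3/starprod/daq/2006/st*"/>

<!-- Set tags file directory -->
setenv EMBEDTAGDIR /eliza3/starprod/tags/ppProductionJPsi/P06id

Both locations are set with the -daq and -tag options of get_embedding_xml.pl. The options can be given in either order, so these two commands are equivalent:

> StRoot/macros/embedding/get_embedding_xml.pl -daq /eliza3/starprod/daq/2006 -tag /eliza3/starprod/tags/ppProductionJPsi/P06id

> StRoot/macros/embedding/get_embedding_xml.pl -tag /eliza3/starprod/tags/ppProductionJPsi/P06id -daq /eliza3/starprod/daq/2006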

2. Running job, archive outputs into HPSS

Below are the commands that run the job (bfcMixer), save the log files, and put the outputs and logs into HPSS.

<!-- Start job -->
echo 'Executing bfcMixer_TpcSvtSsd(1000, 1, 1, "$INPUTFILE0", "$EMBEDTAGDIR/${FILEBASENAME}.tags.root", 0, 6.0, -1.5, 1.5, -200, 200, 160, 1, triggers, "P08ic", "FlatPt"); ...'
root4star -b &lt;&lt;EOF
std::vector&lt;Int_t&gt; triggers;
triggers.push_back(117705);
triggers.push_back(137705);
triggers.push_back(117701);
.L StRoot/macros/embedding/bfcMixer_TpcSvtSsd.C
bfcMixer_TpcSvtSsd(1000, 1, 1, "$INPUTFILE0", "$EMBEDTAGDIR/${FILEBASENAME}.tags.root", 0, 6.0, -1.5, 1.5, -200, 200, 160, 1, triggers, "P08ic", "FlatPt");
.q
EOF
ls -la .
cp $EMLOGS/P06id/LOG/$JOBID.log ${FILEBASENAME}.$JOBID.log
cp $EMLOGS/P06id/LOG/$JOBID.elog ${FILEBASENAME}.$JOBID.elog
<!-- New command to organize log files -->
mkdir -p $EMLOGS/P06id/JPsi_20100601/LOG/&FSET;
mv $EMLOGS/P06id/LOG/$JOBID.* $EMLOGS/P06id/JPsi_20100601/LOG/&FSET;/
<!-- Archive in HPSS -->
hsi "mkdir -p $EMHPSS; prompt; cd $EMHPSS; mput *.root; mput ${FILEBASENAME}.$JOBID.log; mput ${FILEBASENAME}.$JOBID.elog"

> StRoot/macros/embedding/get_embedding_xml.pl -mixer StRoot/macros/embedding/bfcMixer_Tpx.C

<= Run4 : bfcMixer_TpcOnly.C

Run5 - Run7 : bfcMixer_TpcSvtSsd.C

>= Run8 : bfcMixer_Tpx.C

> StRoot/macros/embedding/get_embedding_xml.pl -production P06id -lib SL07e -r 20100601 -trg ppProductionJPsi

<!-- Load library -->
starver SL07e
...
...
ls -la .
cp $EMLOGS/P06id/LOG/$JOBID.log ${FILEBASENAME}.$JOBID.log
cp $EMLOGS/P06id/LOG/$JOBID.elog ${FILEBASENAME}.$JOBID.elog
<!-- New command to organize log files -->
mkdir -p $EMLOGS/P06id/JPsi_20100601/LOG/&FSET;
mv $EMLOGS/P06id/LOG/$JOBID.* $EMLOGS/P06id/JPsi_20100601/LOG/&FSET;/
<!-- Archive in HPSS -->
hsi "mkdir -p $EMHPSS; prompt; cd $EMHPSS; mput *.root; mput ${FILEBASENAME}.$JOBID.log; mput ${FILEBASENAME}.$JOBID.elog"
...
...
<!-- csh/list files -->
<Generator>
<Location>/project/projectdirs/star/embedding/P06id/JPsi_20100601/LIST</Location>
</Generator>

Error: No /project/projectdirs/star/embedding/P06id/JPsi_20100601/LIST exists. Stop. Make sure you've put the correct path for generator file.

mv $EMLOGS/P06id/LOG/$JOBID.* $EMLOGS/P06id/JPsi_20100601/LOG/&FSET;/

3. Arguments in the bfcMixer

3-1. Particle id and particle name

Particle Jpsi code=160 TrkTyp=4 mass=3.096 charge=0 tlife=7.48e-21,pdg=443 bratio= { 1, } mode= { 203, }

> StRoot/macros/embedding/get_embedding_xml.pl -geantid 160 -particle JPsi

3-2. Particle settings (how to simulate pt distribution)

> StRoot/macros/embedding/get_embedding_xml.pl -mode Strange

3-3. Multiplicity

> StRoot/macros/embedding/get_embedding_xml.pl -mult 0.05

3-4. Primary z-vertex range

> StRoot/macros/embedding/get_embedding_xml.pl -zmin -30.0 -zmax 30.0

3-5. Rapidity and transverse momentum range

> StRoot/macros/embedding/get_embedding_xml.pl -ymin -1.0 -ymax 1.0 -ptmin 0.0 -ptmax 6.0

3-6. Trigger ids

> StRoot/macros/embedding/get_embedding_xml.pl -trigger 117705

> StRoot/macros/embedding/get_embedding_xml.pl -trigger 117705 -trigger 137705 -trigger 117001

> StRoot/macros/embedding/get_embedding_xml.pl -trigger 117705 137705 ...

3-7. Production chain name

> StRoot/macros/embedding/get_embedding_xml.pl -prodname P06idpp

> StRoot/macros/embedding/get_embedding_xml.pl -f -daq /eliza3/starprod/daq/2006 -tag /eliza3/starprod/tags/ppProductionJPsi/P06id \
-production P06id -lib SL07e -r 20100601 -trg ppProductionJPsi -geantid 160 -particle JPsi -ptmax 6.0 -trigger 117705 -trigger 137705 -trigger 117701 \
-prodname P06idpp

Production chain options

- ED will set up the proper bfcMixer macro with the correct chain options

- EC and POC at PDSF will take a look and give feedback

- Ask Lidia for her inputs, and verify the new chain with Lidia and Yuri

- Enter all chains into Drupal embedding page for documentation

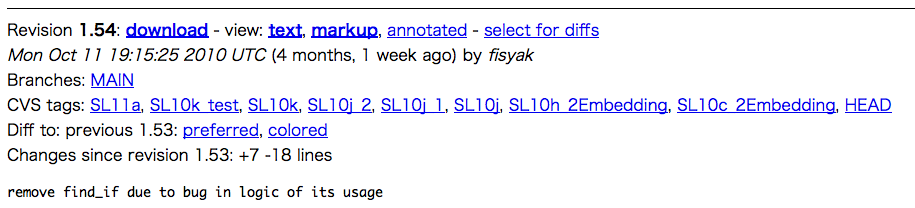

- Commit bfcMixer into CVS

--------------------------------

P07ic CuCu production: TString prodP07icAuAu("P2005b DbV20070518 MakeEvent ITTF ToF ssddat spt SsdIt SvtIt pmdRaw OGridLeak OShortR OSpaceZ2 KeepSvtHit skip1row VFMCE -VFMinuit -hitfilt");

P08ic AuAu production: DbV20080418 B2007g ITTF adcOnly IAna KeepSvtHit VFMCE -hitfilt l3onl emcDY2 fpd ftpc trgd ZDCvtx svtIT ssdIT Corr5 -dstout

If the space-charge and grid-leak corrections are applied on average instead of event by event, replace Corr5 with Corr4, OGridLeak3D, OSpaceZ2.

P08ie dAu production : DbV20090213 P2008 ITTF OSpaceZ2 OGridLeak3D beamLine, VFMCE TpcClu -VFMinuit -hitfilt

TString chain20pt("NoInput,PrepEmbed,gen_T,geomT,sim_T,trs,-ittf,-tpc_daq,nodefault");

P06id pp production : TString prodP06idpp("DbV20060729 pp2006b ITTF OSpaceZ2 OGridLeak3D VFMCE -VFPPVnoCTB -hitfilt");

P06ie pp production : TString prodP06iepp("DbV20060915 pp2006b ITTF OSpaceZ2 OGridLeak3D VFMCE -VFPPVnoCTB -hitfilt"); run# 7096005-7156040

TString prodP06iepp("DbV20061021 pp2006b ITTF OSpaceZ2 OGridLeak3D VFMCE -VFPPVnoCTB -hitfilt"); run# 7071001-709402

Locations of outputs, log files and back up of relevant codes at HPSS

/nersc/projects/starofl/embedding/${TRGSETUPNAME}/${PARTICLE}_&FSET;_${REQUESTID}/${PRODUCTION}.${LIBRARY}/${EMYEAR}/${EMDAY}

(starofl home) /home/starofl/embedding/CODE/${TRGSETUPNAME}/${PARTICLE}_${REQUESTID}/${PRODUCTION}.${LIBRARY}/${EMYEAR}

(HPSS) /nersc/projects/starofl/embedding/CODE/${TRGSETUPNAME}/${PARTICLE}_${REQUESTID}/${PRODUCTION}.${LIBRARY}/${EMYEAR}

1. VFMCE chain option

2. Eta dip around eta ~ 0

3. EmbeddingShortCut chain option

will not apply corrections for simulated data (IdTruth > 0 && IdTruth < 10000 && QA > 0.95). Trs has to have it. TrsRS should not have it.

Yuri

Till this release this option was always "ON" by default. The only need for back propagation is when you will use release >= SL10c with Trs. This correction will be done in dev for nightly tests.

Yuri

4. Bug in StAssociationMaker

For EC and ED(s)

Embedding instructions for EC and ED(s)

Last updated on Oct/23/2018 by Xianglei Zhu

Current Embedding Coordinator (EC): Xianglei Zhu (zhux@tsinghua.edu.cn)

Current Embedding Deputy (ED): Derek Anderson (derekwigwam9@tamu.edu)

Current NERSC Point Of Contact (POC): Jeff Porter (RJPorter@lbl.gov) and Jan Balewski (balewski@lbl.gov)

- Jun/24/2018: initial version (copied from old instructions, still under construction)

- Instructions on how to sample daq files and provide tags files

- Instructions on how to set up the xml file

- Production chain options

- Locations of outputs, log files and back up of relevant codes at HPSS

- Some useful discussions in hyper-news and bug reports

<?xml version="1.0" encoding="utf-8"?>
<!-- Generated by StRoot/macros/embedding/get_embedding_xml.pl on Mon Aug 2 15:26:13 PDT 2010 -->
<job maxFilesPerProcess="1" fileListSyntax="paths">
<command>
<!-- Load library -->
starver SL07e
<!-- Set tags file directory -->
setenv EMBEDTAGDIR /eliza3/starprod/tags/ppProductionJPsi/P06id
<!-- Set year and day from filename -->
setenv EMYEAR `StRoot/macros/embedding/getYearDayFromFile.pl -y ${FILEBASENAME}`
setenv EMDAY `StRoot/macros/embedding/getYearDayFromFile.pl -d ${FILEBASENAME}`
<!-- Set log files area -->
setenv EMLOGS /project/projectdirs/star/embedding
<!-- Set HPSS outputs/LOG path -->
setenv EMHPSS /nersc/projects/starofl/embedding/ppProductionJPsi/JPsi_&FSET;_20100601/P06id.SL07e/${EMYEAR}/${EMDAY}
<!-- Print out EMYEAR, EMDAY, EMLOGS and EMHPSS -->
echo EMYEAR : $EMYEAR
echo EMDAY : $EMDAY
echo EMLOGS : $EMLOGS
echo EMHPSS : $EMHPSS
<!-- Start job -->
<!-- Inside the xml file, '<' must be written as &lt; (the heredoc and std::vector below) -->
echo 'Executing bfcMixer_TpcSvtSsd(1000, 1, 1, "$INPUTFILE0", "$EMBEDTAGDIR/${FILEBASENAME}.tags.root", 0, 6.0, -1.5, 1.5, -200, 200, 160, 1, triggers, "P08ic", "FlatPt"); ...'
root4star -b &lt;&lt;EOF
std::vector&lt;Int_t&gt; triggers;
triggers.push_back(117705);
triggers.push_back(137705);
triggers.push_back(117701);
.L StRoot/macros/embedding/bfcMixer_TpcSvtSsd.C
bfcMixer_TpcSvtSsd(1000, 1, 1, "$INPUTFILE0", "$EMBEDTAGDIR/${FILEBASENAME}.tags.root", 0, 6.0, -1.5, 1.5, -200, 200, 160, 1, triggers, "P08ic", "FlatPt");
.q
EOF
ls -la .
cp $EMLOGS/P06id/LOG/$JOBID.log ${FILEBASENAME}.$JOBID.log
cp $EMLOGS/P06id/LOG/$JOBID.elog ${FILEBASENAME}.$JOBID.elog
<!-- New command to organize log files -->
mkdir -p $EMLOGS/P06id/JPsi_20100601/LOG/&FSET;
mv $EMLOGS/P06id/LOG/$JOBID.* $EMLOGS/P06id/JPsi_20100601/LOG/&FSET;/
<!-- Archive in HPSS -->
hsi "mkdir -p $EMHPSS; prompt; cd $EMHPSS; mput *.root; mput ${FILEBASENAME}.$JOBID.log; mput ${FILEBASENAME}.$JOBID.elog"
</command>
<!-- Define locations of log/elog files -->
<stdout URL="file:/project/projectdirs/star/embedding/P06id/LOG/$JOBID.log"/>
<stderr URL="file:/project/projectdirs/star/embedding/P06id/LOG/$JOBID.elog"/>
<!-- Input daq files -->
<input URL="file:/eliza3/starprod/daq/2006/st*"/>
<!-- csh/list files -->
<Generator>
<Location>/project/projectdirs/star/embedding/P06id/JPsi_20100601/LIST</Location>
</Generator>
<!-- Put any locally-compiled code into a sand-box -->
<SandBox installer="ZIP">
<Package name="Localmakerlibs">
<File>file:./.sl44_gcc346/</File>
<File>file:./StRoot/</File>
<File>file:./pams/</File>
</Package>
</SandBox>
</job>
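The EMYEAR and EMDAY variables in the xml are filled by getYearDayFromFile.pl from the daq file name. As an illustration only, the C++ sketch below shows one way such a lookup can work. It assumes daq names of the form st_<stream>_<run>_raw_<seq> and the STAR run-number layout (year - 1999) * 1000000 + day_of_year * 1000 + sequence; the function names are hypothetical and the exact output format of getYearDayFromFile.pl is not reproduced.

```cpp
#include <regex>
#include <stdexcept>
#include <string>

// Hypothetical helpers illustrating what EMYEAR/EMDAY are derived from.
// ASSUMPTION: daq names look like st_<stream>_<run>_raw_<seq>, and the run
// number is (year - 1999) * 1000000 + day_of_year * 1000 + sequence.
struct YearDay {
    int year;  // calendar year, e.g. 2006
    int day;   // day of year, e.g. 96
};

// Extract the 7- or 8-digit run number from a daq file base name.
int runNumberFromFile(const std::string& fileBase) {
    static const std::regex re("_(\\d{7,8})_raw_");
    std::smatch m;
    if (!std::regex_search(fileBase, m, re))
        throw std::invalid_argument("no run number in: " + fileBase);
    return std::stoi(m[1].str());
}

// Decode calendar year and day of year from the run number.
YearDay yearDayFromRun(int run) {
    return YearDay{run / 1000000 + 1999, (run / 1000) % 1000};
}
```

With the example name st_physics_7096005_raw_1010001 this gives run 7096005, i.e. year 2006 and day 96.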

1. Set up daq/tags files

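The daq files are given by the input element and the tags files by EMBEDTAGDIR:

<!-- Input daq files -->
<input URL="file:/eliza3/starprod/daq/2006/st*"/>

<!-- Set tags file directory -->
setenv EMBEDTAGDIR /eliza3/starprod/tags/ppProductionJPsi/P06id

Both locations are set with the -daq and -tag options of get_embedding_xml.pl. The options can be given in either order, so these two commands are equivalent:

> StRoot/macros/embedding/get_embedding_xml.pl -daq /eliza3/starprod/daq/2006 -tag /eliza3/starprod/tags/ppProductionJPsi/P06id

> StRoot/macros/embedding/get_embedding_xml.pl -tag /eliza3/starprod/tags/ppProductionJPsi/P06id -daq /eliza3/starprod/daq/2006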
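The bfcMixer call pairs each daq file with a tags file through the argument "$EMBEDTAGDIR/${FILEBASENAME}.tags.root". A minimal sketch of that pairing, assuming FILEBASENAME is the daq file name without its directory and ".daq" extension; the helper names are hypothetical.

```cpp
#include <string>

// Strip the directory and a trailing ".daq" from a daq file path.
// ASSUMPTION: this matches how FILEBASENAME is formed for the jobs.
std::string baseName(const std::string& path) {
    std::string name = path.substr(path.find_last_of('/') + 1);  // npos + 1 == 0
    const std::string ext = ".daq";
    if (name.size() > ext.size() &&
        name.compare(name.size() - ext.size(), ext.size(), ext) == 0)
        name.erase(name.size() - ext.size());
    return name;
}

// Tags file expected for a given daq file: <tagDir>/<base>.tags.root
std::string tagsFileFor(const std::string& tagDir, const std::string& daqPath) {
    return tagDir + "/" + baseName(daqPath) + ".tags.root";
}
```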

2. Running job, archive outputs into HPSS

Below are the commands that run the job (bfcMixer), save the log files, and put the outputs and logs into HPSS.

<!-- Start job -->
echo 'Executing bfcMixer_TpcSvtSsd(1000, 1, 1, "$INPUTFILE0", "$EMBEDTAGDIR/${FILEBASENAME}.tags.root", 0, 6.0, -1.5, 1.5, -200, 200, 160, 1, triggers, "P08ic", "FlatPt"); ...'
root4star -b &lt;&lt;EOF
std::vector&lt;Int_t&gt; triggers;
triggers.push_back(117705);
triggers.push_back(137705);
triggers.push_back(117701);
.L StRoot/macros/embedding/bfcMixer_TpcSvtSsd.C
bfcMixer_TpcSvtSsd(1000, 1, 1, "$INPUTFILE0", "$EMBEDTAGDIR/${FILEBASENAME}.tags.root", 0, 6.0, -1.5, 1.5, -200, 200, 160, 1, triggers, "P08ic", "FlatPt");
.q
EOF
ls -la .
cp $EMLOGS/P06id/LOG/$JOBID.log ${FILEBASENAME}.$JOBID.log
cp $EMLOGS/P06id/LOG/$JOBID.elog ${FILEBASENAME}.$JOBID.elog
<!-- New command to organize log files -->
mkdir -p $EMLOGS/P06id/JPsi_20100601/LOG/&FSET;
mv $EMLOGS/P06id/LOG/$JOBID.* $EMLOGS/P06id/JPsi_20100601/LOG/&FSET;/
<!-- Archive in HPSS -->
hsi "mkdir -p $EMHPSS; prompt; cd $EMHPSS; mput *.root; mput ${FILEBASENAME}.$JOBID.log; mput ${FILEBASENAME}.$JOBID.elog"

> StRoot/macros/embedding/get_embedding_xml.pl -mixer StRoot/macros/embedding/bfcMixer_Tpx.C

<= Run4 : bfcMixer_TpcOnly.C

Run5 - Run7 : bfcMixer_TpcSvtSsd.C

>= Run8 : bfcMixer_Tpx.C
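The rule of thumb above can be written as a small lookup. This is a sketch only: the argument is the STAR run index (4 for Run4, 8 for Run8, ...), not a run number, and mixerForRun is a hypothetical name.

```cpp
#include <string>

// Mixer macro by STAR run index, following the list above:
// Run4 and earlier -> TpcOnly, Run5 to Run7 -> TpcSvtSsd, Run8 and later -> Tpx.
std::string mixerForRun(int runIndex) {
    if (runIndex <= 4) return "bfcMixer_TpcOnly.C";
    if (runIndex <= 7) return "bfcMixer_TpcSvtSsd.C";
    return "bfcMixer_Tpx.C";
}
```

For Run8 and later this gives the macro used in the example above, -mixer StRoot/macros/embedding/bfcMixer_Tpx.C.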

> StRoot/macros/embedding/get_embedding_xml.pl -production P06id -lib SL07e -r 20100601 -trg ppProductionJPsi

<!-- Load library -->
starver SL07e
...
...
ls -la .
cp $EMLOGS/P06id/LOG/$JOBID.log ${FILEBASENAME}.$JOBID.log
cp $EMLOGS/P06id/LOG/$JOBID.elog ${FILEBASENAME}.$JOBID.elog
<!-- New command to organize log files -->
mkdir -p $EMLOGS/P06id/JPsi_20100601/LOG/&FSET;
mv $EMLOGS/P06id/LOG/$JOBID.* $EMLOGS/P06id/JPsi_20100601/LOG/&FSET;/
<!-- Archive in HPSS -->
hsi "mkdir -p $EMHPSS; prompt; cd $EMHPSS; mput *.root; mput ${FILEBASENAME}.$JOBID.log; mput ${FILEBASENAME}.$JOBID.elog"
...
...
<!-- csh/list files -->
<Generator>
<Location>/project/projectdirs/star/embedding/P06id/JPsi_20100601/LIST</Location>
</Generator>

Error: No /project/projectdirs/star/embedding/P06id/JPsi_20100601/LIST exists. Stop. Make sure you've put the correct path for generator file.
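The error above means the Generator Location directory does not exist yet. A C++17 sketch of the corresponding check, with an optional "mkdir -p"-style creation; ensureGeneratorDir is hypothetical, and the creation step is a choice of this sketch rather than behavior of get_embedding_xml.pl, which only reports the error and stops.

```cpp
#include <filesystem>
#include <system_error>

namespace fs = std::filesystem;

// Return true if the generator (csh/list) directory exists afterwards.
bool ensureGeneratorDir(const fs::path& dir, bool create) {
    if (fs::is_directory(dir)) return true;  // already there: nothing to do
    if (!create) return false;               // caller only wanted the check
    std::error_code ec;
    fs::create_directories(dir, ec);         // like "mkdir -p"
    return !ec && fs::is_directory(dir);
}
```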

mv $EMLOGS/P06id/LOG/$JOBID.* $EMLOGS/P06id/JPsi_20100601/LOG/&FSET;/

3. Arguments in the bfcMixer

3-1. Particle id and particle name

Particle Jpsi code=160 TrkTyp=4 mass=3.096 charge=0 tlife=7.48e-21,pdg=443 bratio= { 1, } mode= { 203, }

> StRoot/macros/embedding/get_embedding_xml.pl -geantid 160 -particle JPsi
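The -geantid and -particle values can be read off a particle definition such as the Jpsi line above. A hypothetical parser for that line format, assuming the name follows the keyword Particle and the GEANT id follows code=:

```cpp
#include <optional>
#include <regex>
#include <string>

// Name and GEANT id taken from a particle-definition line.
struct ParticleDef {
    std::string name;
    int geantId;
};

// Parse "Particle <name> code=<id> ..."; returns nothing if the line does not match.
std::optional<ParticleDef> parseParticleLine(const std::string& line) {
    static const std::regex re(R"(^Particle\s+(\S+)\s+code=(\d+))");
    std::smatch m;
    if (!std::regex_search(line, m, re)) return std::nullopt;
    return ParticleDef{m[1].str(), std::stoi(m[2].str())};
}
```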

3-2. Particle settings (how to simulate pt distribution)

> StRoot/macros/embedding/get_embedding_xml.pl -mode Strange

3-3. Multiplicity

> StRoot/macros/embedding/get_embedding_xml.pl -mult 0.05
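The sketch below shows how a -mult value might be turned into a number of embedded tracks per event. It rests on an assumption, not on the bfcMixer source: values below 1 are read as a fraction of the event multiplicity (0.05 meaning 5%), values of 1 or more as an absolute count, and at least one track is embedded per event. tracksToEmbed is a hypothetical name.

```cpp
#include <algorithm>
#include <cmath>

// ASSUMPTION: mult < 1 is a fraction of the event multiplicity,
// mult >= 1 is an absolute number of tracks.
int tracksToEmbed(double mult, int eventMultiplicity) {
    if (mult >= 1.0) return static_cast<int>(mult);
    const int n = static_cast<int>(std::lround(mult * eventMultiplicity));
    return std::max(1, n);  // embed at least one track
}
```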

3-4. Primary z-vertex range

> StRoot/macros/embedding/get_embedding_xml.pl -zmin -30.0 -zmax 30.0
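The z-vertex range restricts which real events are used. A sketch of that selection, assuming vertex positions in cm and inclusive limits (both choices of this example, with hypothetical function names):

```cpp
#include <vector>

// Keep an event if its primary-vertex z lies inside [zmin, zmax].
bool acceptVertex(double vz, double zmin, double zmax) {
    return vz >= zmin && vz <= zmax;
}

// Filter a list of vertex z positions, e.g. as read from the tags files.
std::vector<double> selectVertices(const std::vector<double>& vzs, double zmin, double zmax) {
    std::vector<double> kept;
    for (double vz : vzs)
        if (acceptVertex(vz, zmin, zmax)) kept.push_back(vz);
    return kept;
}
```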

3-5. Rapidity and transverse momentum range

> StRoot/macros/embedding/get_embedding_xml.pl -ymin -1.0 -ymax 1.0 -ptmin 0.0 -ptmax 6.0
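For a FlatPt-style setting, the kinematics can be pictured as sampling pT and rapidity uniformly inside the requested ranges. The sketch below does that and builds the four-momentum from mT = sqrt(m^2 + pT^2), pz = mT sinh(y), E = mT cosh(y). It illustrates the kinematics only; it is not the generator used by the embedding chain, and the function names are hypothetical.

```cpp
#include <cmath>
#include <random>

struct FourMomentum { double px, py, pz, e; };

// Four-momentum of a particle of mass m from pT, rapidity y and azimuth phi.
FourMomentum fromPtYPhi(double pt, double y, double phi, double m) {
    const double mt = std::sqrt(m * m + pt * pt);  // transverse mass
    return {pt * std::cos(phi), pt * std::sin(phi), mt * std::sinh(y), mt * std::cosh(y)};
}

// Uniform ("flat") sampling in pT, y and phi within the requested ranges.
FourMomentum sampleFlat(std::mt19937& rng, double ptmin, double ptmax,
                        double ymin, double ymax, double m) {
    std::uniform_real_distribution<double> ptDist(ptmin, ptmax);
    std::uniform_real_distribution<double> yDist(ymin, ymax);
    std::uniform_real_distribution<double> phiDist(0.0, 2.0 * std::acos(-1.0));
    return fromPtYPhi(ptDist(rng), yDist(rng), phiDist(rng), m);
}
```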

3-6. Trigger ids

> StRoot/macros/embedding/get_embedding_xml.pl -trigger 117705

> StRoot/macros/embedding/get_embedding_xml.pl -trigger 117705 -trigger 137705 -trigger 117001

> StRoot/macros/embedding/get_embedding_xml.pl -trigger 117705 137705 ...
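The requested trigger ids end up in the std::vector<Int_t> triggers passed to the bfcMixer. A sketch of the selection this implies, assuming an event is accepted when it fired at least one requested id; int stands in for ROOT's Int_t so the example compiles without ROOT, and firedAny is a hypothetical name.

```cpp
#include <algorithm>
#include <vector>

// True if any trigger id fired in the event is in the requested list.
bool firedAny(const std::vector<int>& eventTriggers, const std::vector<int>& requested) {
    return std::any_of(eventTriggers.begin(), eventTriggers.end(), [&](int id) {
        return std::find(requested.begin(), requested.end(), id) != requested.end();
    });
}
```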

3-7. Production chain name

> StRoot/macros/embedding/get_embedding_xml.pl -prodname P06idpp

> StRoot/macros/embedding/get_embedding_xml.pl -f -daq /eliza3/starprod/daq/2006 -tag /eliza3/starprod/tags/ppProductionJPsi/P06id \
-production P06id -lib SL07e -r 20100601 -trg ppProductionJPsi -geantid 160 -particle JPsi -ptmax 6.0 -trigger 117705 -trigger 137705 -trigger 117701 \
-prodname P06idpp

Production chain options

- ED will set up the proper bfcMixer macro with the correct chain options

- EC and POC at PDSF will take a look and give feedback

- Ask Lidia for her inputs, and verify the new chain with Lidia and Yuri

- Enter all chains into Drupal embedding page for documentation

- Commit bfcMixer into CVS

--------------------------------

P07ic CuCu production: TString prodP07icAuAu("P2005b DbV20070518 MakeEvent ITTF ToF ssddat spt SsdIt SvtIt pmdRaw OGridLeak OShortR OSpaceZ2 KeepSvtHit skip1row VFMCE -VFMinuit -hitfilt");

P08ic AuAu production: DbV20080418 B2007g ITTF adcOnly IAna KeepSvtHit VFMCE -hitfilt l3onl emcDY2 fpd ftpc trgd ZDCvtx svtIT ssdIT Corr5 -dstout

If the space-charge and grid-leak corrections are applied on average instead of event by event, replace Corr5 with Corr4, OGridLeak3D, OSpaceZ2.

P08ie dAu production : DbV20090213 P2008 ITTF OSpaceZ2 OGridLeak3D beamLine, VFMCE TpcClu -VFMinuit -hitfilt

TString chain20pt("NoInput,PrepEmbed,gen_T,geomT,sim_T,trs,-ittf,-tpc_daq,nodefault");

P06id pp production : TString prodP06idpp("DbV20060729 pp2006b ITTF OSpaceZ2 OGridLeak3D VFMCE -VFPPVnoCTB -hitfilt");

P06ie pp production : TString prodP06iepp("DbV20060915 pp2006b ITTF OSpaceZ2 OGridLeak3D VFMCE -VFPPVnoCTB -hitfilt"); run# 7096005-7156040

TString prodP06iepp("DbV20061021 pp2006b ITTF OSpaceZ2 OGridLeak3D VFMCE -VFPPVnoCTB -hitfilt"); run# 7071001-709402
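When a new chain is proposed, it helps to compare it option by option with an approved one; for example, the P06id and P06ie pp chains above differ only in the DbV timestamp. A hypothetical helper for that comparison: it splits on spaces and commas, keeps a leading '-' as part of the option, and compares case-insensitively, an assumption suggested by the mixed spellings SvtIt/svtIT in the chains above.

```cpp
#include <cctype>
#include <set>
#include <sstream>
#include <string>
#include <utility>

// Split a chain string into a set of lowercased options.
// Commas and spaces both act as separators; "-hitfilt" stays "-hitfilt".
std::set<std::string> chainOptions(const std::string& chain) {
    std::string s = chain;
    for (char& c : s)
        c = (c == ',') ? ' ' : static_cast<char>(std::tolower(static_cast<unsigned char>(c)));
    std::istringstream in(s);
    std::set<std::string> opts;
    for (std::string tok; in >> tok;) opts.insert(tok);
    return opts;
}

// Options present only in chain a (first) and only in chain b (second).
std::pair<std::set<std::string>, std::set<std::string>>
chainDiff(const std::string& a, const std::string& b) {
    const auto oa = chainOptions(a), ob = chainOptions(b);
    std::set<std::string> onlyA, onlyB;
    for (const auto& o : oa) if (ob.count(o) == 0) onlyA.insert(o);
    for (const auto& o : ob) if (oa.count(o) == 0) onlyB.insert(o);
    return {onlyA, onlyB};
}
```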

Locations of outputs, log files and back up of relevant codes at HPSS

/nersc/projects/starofl/embedding/${TRGSETUPNAME}/${PARTICLE}_&FSET;_${REQUESTID}/${PRODUCTION}.${LIBRARY}/${EMYEAR}/${EMDAY}

(starofl home) /home/starofl/embedding/CODE/${TRGSETUPNAME}/${PARTICLE}_${REQUESTID}/${PRODUCTION}.${LIBRARY}/${EMYEAR}

(HPSS) /nersc/projects/starofl/embedding/CODE/${TRGSETUPNAME}/${PARTICLE}_${REQUESTID}/${PRODUCTION}.${LIBRARY}/${EMYEAR}
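A sketch that assembles the HPSS output path from its parts. The function is hypothetical; fset is the value substituted for &FSET; at submission, and printing the day as three zero-padded digits is an assumption about EMDAY.

```cpp
#include <cstdio>
#include <string>

// .../embedding/<trg>/<particle>_<fset>_<request>/<production>.<library>/<year>/<day>
std::string hpssOutputDir(const std::string& trgSetupName, const std::string& particle,
                          int fset, const std::string& requestId,
                          const std::string& production, const std::string& library,
                          int year, int day) {
    char dayStr[8];
    std::snprintf(dayStr, sizeof dayStr, "%03d", day);  // ASSUMPTION: zero-padded day
    return "/nersc/projects/starofl/embedding/" + trgSetupName + "/" + particle + "_" +
           std::to_string(fset) + "_" + requestId + "/" + production + "." + library +
           "/" + std::to_string(year) + "/" + dayStr;
}
```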

1. VFMCE chain option

2. Eta dip around eta ~ 0

3. EmbeddingShortCut chain option

will not apply corrections for simulated data (IdTruth > 0 && IdTruth < 10000 && QA > 0.95). Trs has to have it. TrsRS should not have it.

Yuri

Till this release this option was always "ON" by default. The only need for back propagation is when you will use release >= SL10c with Trs. This correction will be done in dev for nightly tests.

Yuri

4. Bug in StAssociationMaker
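The EmbeddingShortCut selection quoted in item 3 can be written as a predicate. This illustrates the quoted condition only; the function names are hypothetical and this is not the StRoot implementation.

```cpp
// Condition quoted in item 3: data with 0 < IdTruth < 10000 and QA > 0.95
// count as simulated, and EmbeddingShortCut skips the corrections for them.
bool isSimulated(int idTruth, double qa) {
    return idTruth > 0 && idTruth < 10000 && qa > 0.95;
}

// Hypothetical helper: should a correction be applied to this hit?
bool applyCorrection(bool embeddingShortCut, int idTruth, double qa) {
    return !(embeddingShortCut && isSimulated(idTruth, qa));
}
```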

For Embedding Helpers (OBSOLETE)

Embedding instructions for Embedding Helpers

These instructions describe how embedding helpers prepare and submit embedding jobs at PDSF.

NOTE: These instructions are specific to PDSF; some procedures may not work at RCF.

Current Embedding Coordinator (EC): Terence Tarnowsky (tarnowsk@nscl.msu.edu)

Christopher Powell (CBPowell@lbl.gov)

Current PDSF Point Of Contact (POC): Jeff Porter (RJPorter@lbl.gov)

- Nov/8/2011: Updated EC and ED information to bring it in line with other webpages.

- Apr/12/2011: Updated Section 3.1, removed obsolete procedure for StPrepEmbedMaker

- Feb/17/2011: Updated Section 3.2

- Nov/17/2010: Updated instructions for getting an afs token to access the afs area

- Sep/08/2010: Updated memory limit in the batch at PDSF (Section 1)

- Aug/25/2010: Updated Section 3.2

- May/25/2010: Updated Section 3 (included klog, library and NOTE about VFMCE)

- May/21/2010: Added "How to set up your environment at PDSF?" and "Links"

- May/20/2010: Updated instructions

Contents

1. How to set up your environment at PDSF?

Please have a look at the "common issue: memory limit in batch" page and follow the procedure for increasing the memory limit in batch jobs. All embedding helpers (EH) should make this change before submitting any embedding production jobs.

2. Set up daq and tags files at PDSF

3. Production area setup

Below is a copy, taken from the link above, of what you need to do in order to get an AFS token to access CVS.

If so, you need to specify your afs account name explicitly as well.

> klog -cell rhic -principal YourRCFUserName

> cvs co StRoot/macros/embedding
> cvs co StRoot/St_geant_Maker

> mv StRoot/St_geant_Maker/Embed/StPrepEmbedMaker* StRoot/St_geant_Maker/
> starver ${library}
> cons

> cvs co pams/sim/gstar
> cons

Please check with the EC or ED whether the bfcMixer (either bfcMixer_TpcSvtSsd.C or bfcMixer_Tpx.C) is ready for submission, and confirm which bfcMixer should be used for the current request.

4. Submit jobs

<!-- Put any locally-compiled code into a sand-box -->
<SandBox installer="ZIP">
<Package name="Localmakerlibs">
<File>file:./.sl44_gcc346/</File>
<File>file:./StRoot/</File>
<File>file:./pams/</File>
</Package>
</SandBox>

Make sure this block is in your xml file. If you need any code other than the directories listed above, include it as well.

Please contact the EC or ED if you are not sure which code you need to include.

4-2. Submit jobs by scheduler

> star-submit-template -template embed_template.xml -entities FSET=200
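The -entities FSET=200 option supplies the value used wherever &FSET; appears in the template (log directory, HPSS path). The sketch below only illustrates that substitution as plain text replacement; the scheduler's own template processing is not reproduced, and expandEntities is a hypothetical name.

```cpp
#include <map>
#include <string>

// Replace every "&NAME;" in text by its value, e.g. "&FSET;" -> "200".
std::string expandEntities(std::string text, const std::map<std::string, std::string>& entities) {
    for (const auto& [name, value] : entities) {
        const std::string ref = "&" + name + ";";
        // Continue searching after the inserted value so it is never re-expanded.
        for (std::size_t pos = text.find(ref); pos != std::string::npos;
             pos = text.find(ref, pos + value.size())) {
            text.replace(pos, ref.size(), value);
        }
    }
    return text;
}
```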

4-3. Re-submitting jobs

Sometimes you may need to modify something under "StRoot" or "pams" and recompile to fix a problem.

Each time you recompile your local code, clean up the current "Localmakerlibs.zip" and "Localmakerlibs.package/" before resubmitting. If you forget to clean up the older "Localmakerlibs" files, the modifications to your local code will not be reflected in the resubmitted jobs.
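The clean-up step can be sketched with C++17 std::filesystem. The directory argument is assumed to be the directory you submit from, where the sandbox files are created; cleanSandbox is a hypothetical helper, and rm -rf on the same two paths does the same job.

```cpp
#include <cstdint>
#include <filesystem>
#include <initializer_list>
#include <system_error>

namespace fs = std::filesystem;

// Remove the old sandbox archive and its unpacked package directory.
// Returns the number of filesystem entries removed (0 if nothing was there).
std::uintmax_t cleanSandbox(const fs::path& submitDir) {
    std::uintmax_t n = 0;
    for (const char* name : {"Localmakerlibs.zip", "Localmakerlibs.package"}) {
        std::error_code ec;
        const std::uintmax_t removed = fs::remove_all(submitDir / name, ec);
        if (!ec) n += removed;
    }
    return n;
}
```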

5. Back up macros, scripts, xml file and codes

6. Links

Obsolete documentation

Embedding Production Setup

This page describes how to set up embedding production. This procedure needs to be followed for any set of daq files/production version that requires embedding. Since this typically involves hacking the reconstruction chain, the typical STAR PA is advised not to attempt this step. Please coordinate with a local embedding coordinator and the overall Embedding Coordinator (Olga). Note: The documentation here is very terse; it will be enriched as the documentation as a whole is iterated on. Patience is appreciated.

Get daq files from RCF.

Grab a set of daq files from RCF that covers the lifetime of the run, the luminosity range experienced, and the conditions for the production.

Rerun standard production but without corrections.

bfc.C macros are located under ~starofl/bfc. Edit the submit.[Production] script to point to the daq files loaded (as above).

Put tags files on disk.

The results of the previous jobs will be .tags.root files located on HPSS. Retrieve the files and set a pointer to the tags files in the production-specific directory under ~starofl/embedding.

Now you're ready to start production.

Embedding Setup Off-site

Introduction

The purpose of this document is to describe, step by step, how to set up the embedding infrastructure on a remote site, i.e. not at its current home, which is PDSF. It is based on the experience of setting up embedding at Birmingham's NP cluster (Bham). I will try to maintain a distinction between steps which are necessary in general and those which were specific to porting things to Bham. It should also be a useful guide for those wanting to run embedding at PDSF and needing to copy the relevant files into a suitable directory structure.

Pre-requisites

Before trying to set up embedding on a remote site you should have:

- a working local installation of the STAR library in which you are interested (or be satisfied with your AFS-based library performance).

- a working mirror of the star database (or be satisfied with your connection to the BNL hosted db).

Collect scripts

The scripts are currently housed at PDSF in the 'starofl' account area. At the time of writing (and the time at which I set up embedding in Bham) they are not archived in CVS. The suggested way to collect them is to copy them into a directory in your own PDSF home account, then tar and export it for installation on your local cluster. The top directory for embedding is /u/starofl/embedding. Under this directory there are several subdirectories of interest:

- Those named after each production, e.g. P06ib, which contain the mixer macro and perl scripts

- Common, which contains the subdirectories lists and csh and a submission perl script

- GSTAR which contains the kumac for running the simulation

mkdir embedding

cd embedding

mkdir Common

mkdir Common/lists

mkdir Common/csh

mkdir GSTAR

mkdir P06ib

mkdir P06ib/setup

Now it needs populating with the relevant files. In the following, /u/user/embedding is used as an example of your new embedding directory in your user home directory.

cd /u/user/embedding

cp /u/starofl/embedding/getVerticesFromTags_v4.C .

cp -R /u/starofl/embedding/P06ib/EmbeddingLib_v4_noFTPC/ P06ib/

cp /u/starofl/embedding/P06ib/Embedding_sge_noFTPC.pl P06ib/

cp /u/starofl/embedding/P06ib/bfcMixer_v4_noFTPC.C P06ib/

cp /u/starofl/embedding/P06ib/submit.starofl.pl P06ib/submit.user.pl

cp /u/starofl/embedding/P06ib/setup/Piminus_101_spectra.setup P06ib/setup/

cp /u/starofl/embedding/GSTAR/phasespace_P06ib_revfullfield.kumac GSTAR/

cp /u/starofl/embedding/GSTAR/phasespace_P06ib_fullfield.kumac GSTAR/

cp /u/starofl/embedding/Common/submit_sge.pl Common/

You now have all the files needed to run embedding. There are further links to make, but as you are going to export the files to your own cluster, you need to make the links afterwards.

Alternatively, you can run embedding on PDSF from your home directory. There are a number of changes to make first, though, because the various perl scripts have some paths relating to the starofl account inside them.

For those planning to export to a remote site you should tar and/or scp the data. I would recommend tar so that you can have the original package preserved in case something goes wrong. E.g.

tar -cvf embedding.tar embedding/

scp embedding.tar remoteuser@mycluster.blah.blah:/home/remoteuser

Obviously this step is unnecessary if you intend to run from your PDSF account although you may still want to create a tar file so that you can undo any changes which are wrong.

Log in to your remote cluster and extract the archive, e.g.

cd /home/remoteuser

tar -xvf embedding.tar

Script changes

The most obvious changes are the places inside the perl scripts where the path or location of other scripts appears in the code. These must be changed accordingly.

P06ib/Embedding_sge_noFTPC.pl


P06ib/EmbeddingLib_v4_noFTPC/Process_object.pm


This is because the location of tcsh was different and probably will be for you too.

Common/submit_sge.pl


This change relates to parsing the name of the directory containing the daq files to extract the 'data vault' and 'magnetic field', which form part of the job name and are used by Embedding_sge_noFTPC.pl. (This may not make much sense right now and needs the detailed docs on each component. It is actually just a way to pass a file list with the same basename as the job.) In the original script the path to the data is something like

/dante3/starprod/daq/2005/cuProductionMinBias/FullField

whereas on the Bham cluster it is

/star/data1/daq/2005/cuProductionMinBias/FullField

and thus the pattern match in perl has to change in order to extract the same information. If you have a choice, choose your directory names with care!
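One way to avoid depending on the exact directory depth is to anchor the parsing on a fixed path component. The sketch below is hypothetical and is not the pattern used in submit_sge.pl: it splits the path at "/daq/" and takes year, trigger setup and field from the three components that follow, so both layouts above give the same year, trigger setup and field.

```cpp
#include <sstream>
#include <string>
#include <vector>

struct DaqDirInfo {
    std::string prefix;   // everything before "/daq/", e.g. "/dante3/starprod"
    std::string year;     // e.g. "2005"
    std::string trigger;  // e.g. "cuProductionMinBias"
    std::string field;    // e.g. "FullField"
};

// Parse <prefix>/daq/<year>/<trigger>/<field>[/]; returns false on any other shape.
bool parseDaqDir(const std::string& path, DaqDirInfo& out) {
    const std::string::size_type pos = path.find("/daq/");
    if (pos == std::string::npos) return false;
    std::vector<std::string> parts;
    std::istringstream rest(path.substr(pos + 5));  // skip "/daq/"
    for (std::string p; std::getline(rest, p, '/');)
        if (!p.empty()) parts.push_back(p);
    if (parts.size() != 3) return false;
    out = DaqDirInfo{path.substr(0, pos), parts[0], parts[1], parts[2]};
    return true;
}
```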

This change relates to the line printing the job submission shell script that this perl script writes and submits. The first line had to be changed so that the file is correctly identified as an sh script. I am not sure how the original can ever have worked.

This line prints the part of the job submission script where the options for the job are specified. In SGE the job options can be given in the file and not just on the command line. The extra options for Bham relate to our SGE setup. The -q option provides the name of the queue to use; otherwise the default queue is used, which I did not want in this case. The other extra options make the environment and working directory correct, as they were not the default for us. This is very specific to each cluster. If your cluster does not use SGE, then I imagine extensive changes to the part writing the job submission script would be necessary. The scripts use the ability of SGE to run job arrays of similar jobs, so you would have to emulate that somehow.
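For reference, SGE options can be embedded in the job script itself as "#$" lines. The sketch below writes such a header; -q (queue), -V (export the submission environment), -cwd (run in the submission directory) and -t (task array) are standard qsub options, but which options Bham actually added is not recorded here. sgeHeader is a hypothetical helper and the queue name in the test is made up.

```cpp
#include <string>

// Header of an SGE job script with options embedded as "#$" lines.
std::string sgeHeader(const std::string& queue, int nTasks) {
    std::string h = "#!/bin/sh\n";                        // identify as an sh script
    if (!queue.empty()) h += "#$ -q " + queue + "\n";     // explicit queue
    h += "#$ -V\n";                                       // export the environment
    h += "#$ -cwd\n";                                     // run in the submission directory
    if (nTasks > 1)                                       // job array, one task per job
        h += "#$ -t 1-" + std::to_string(nTasks) + "\n";
    return h;
}
```

Each task of an array job can then tell itself apart through the SGE_TASK_ID environment variable.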

- getVerticesFromTags_v4.C - none

- GSTAR/phasespace_P06ib_revfullfield.kumac, GSTAR/phasespace_P06ib_fullfield.kumac - there are actually changes, but they only relate to redefining the particle decay modes for (anti-)Ξ and (anti-)Ω to go 100% to the charged modes of interest. This is only relevant for the strangeness group

- P06ib/bfcMixer_v4_noFTPC.C - checked carefully that

chain3->SetFlagsline actually sets the same flags since Andrew and I had to change the same flags e.g. add GeantOut option after I made orginal copy - P06ib/EmbeddingLib_v4_noFTPC/Chain_object.pm - none

- P06ib/EmbeddingLib_v4_noFTPC/EmbeddingUtilities.pm - there are lines where you may have to add the run numbers of the daq files you are using, so that they are recognised as either full field or reversed full field. In this example (Cu+Cu embedding in P06ib) the lines begin

and. This is also something that Andrew and I both changed after I made the original copy.

- P06ib/submit.user.pl - changes here relate to the setup that you want to run and not to the cluster or directory you are using, i.e. which setup file to use, which daq directories to use and any pattern match on the file names (usually for testing purposes, to avoid filling the cluster with useless jobs), although you probably want to change the

line!

- P06ib/setup/Piminus_101_spectra.setup - any changes here relate to the simulation parameters of the job that you want to do and not to the cluster or directory you are using
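The run-number bookkeeping in EmbeddingUtilities.pm boils down to a lookup from run number to magnetic-field configuration. The real file is Perl and its exact lines are not reproduced here, so the sketch below is only a hypothetical C++ illustration of the idea; the enum, function names and run numbers are all made up.

```cpp
#include <cassert>
#include <set>
#include <string>

// Magnetic-field configurations the embedding scripts distinguish.
enum class Field { FullField, ReversedFullField, Unknown };

// HYPOTHETICAL run numbers: in EmbeddingUtilities.pm the equivalent lists are
// the lines you extend with the runs of the daq files you are embedding into.
const std::set<long> kFullFieldRuns = {1000001, 1000002};
const std::set<long> kReversedFullFieldRuns = {2000001};

// Classify a run so the matching full-field or reversed-full-field inputs are used.
Field classifyRun(long run) {
    if (kFullFieldRuns.count(run) > 0) return Field::FullField;
    if (kReversedFullFieldRuns.count(run) > 0) return Field::ReversedFullField;
    return Field::Unknown;  // run not listed yet: add it before submitting jobs
}

// Suffix as used in link names such as daq_dir_2005_cuPMBFF / daq_dir_2005_cuPMBRFF.
std::string fieldSuffix(Field f) {
    switch (f) {
        case Field::FullField: return "FF";
        case Field::ReversedFullField: return "RFF";
        default: return "";
    }
}
```

An Unknown result is exactly the situation described above: a daq file whose run was never added to either list.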

Create links

A number of links are required. For example, in /u/starofl/embedding/P06ib there are the following links:

daq_dir_2005_cuPMBFF -> /dante3/starprod/daq/2005/cuProductionMinBias/FullField
daq_dir_2005_cuPMBRFF -> /dante3/starprod/daq/2005/cuProductionMinBias/ReversedFullField
daq_dir_2005_cuPMBHTFF -> /eliza5/starprod/daq/2005/cucuProductionHT/FullField/
daq_dir_2005_cuPMBHTRFF -> /eliza5/starprod/daq/2005/cucuProductionHT/ReversedFullField
tags_dir_cuHT_2005 -> /eliza5/starprod/embedding/tags/P06ib
data -> /eliza12/starprod/embedding/data
lists -> ../Common/lists
csh -> ../Common/csh
LOG -> ../Common/LOG
tags_dir_cu_2005 -> /dante3/starprod/tags/P06ib/2005
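On the cluster these links are normally made by hand with ln -s. Purely as an illustration, the sketch below creates the same set from a table using C++17 std::filesystem; the createLinks helper is hypothetical and not part of the embedding scripts. Entries that already exist are skipped, so it can be rerun safely.

```cpp
#include <cassert>
#include <filesystem>
#include <string>
#include <utility>
#include <vector>

namespace fs = std::filesystem;

using LinkTable = std::vector<std::pair<std::string, std::string>>;

// Link name -> target, as listed above for /u/starofl/embedding/P06ib.
const LinkTable kLinks = {
    {"daq_dir_2005_cuPMBFF", "/dante3/starprod/daq/2005/cuProductionMinBias/FullField"},
    {"daq_dir_2005_cuPMBRFF", "/dante3/starprod/daq/2005/cuProductionMinBias/ReversedFullField"},
    {"daq_dir_2005_cuPMBHTFF", "/eliza5/starprod/daq/2005/cucuProductionHT/FullField/"},
    {"daq_dir_2005_cuPMBHTRFF", "/eliza5/starprod/daq/2005/cucuProductionHT/ReversedFullField"},
    {"tags_dir_cuHT_2005", "/eliza5/starprod/embedding/tags/P06ib"},
    {"data", "/eliza12/starprod/embedding/data"},
    {"lists", "../Common/lists"},
    {"csh", "../Common/csh"},
    {"LOG", "../Common/LOG"},
    {"tags_dir_cu_2005", "/dante3/starprod/tags/P06ib/2005"},
};

// Create each link inside workDir; returns how many links were created.
int createLinks(const fs::path& workDir, const LinkTable& links) {
    int created = 0;
    for (const auto& [name, target] : links) {
        const fs::path link = workDir / name;
        if (fs::exists(fs::symlink_status(link))) continue;  // keep existing entries
        fs::create_directory_symlink(target, link);         // target need not exist yet
        ++created;
    }
    return created;
}
```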

That is it! Some things will probably need to be adapted to your circumstances, but this should give you a good idea of what to do.

Author: Lee Barnby, University of Birmingham (using starembed account)

Modified: A. Rose, Lawrence Berkeley National Laboratory (using starembed account)

Modified Birmingham Files

Upload of modified embedding infrastructure files used on the Birmingham NP cluster for the Cu+Cu (anti-)Λ and K0S embedding request.

Production Management

1) Usually embedding jobs are run in "HPSS" mode, so the files end up in HPSS (via FTP). To transfer them from HPSS to disk, copy the perl script ~starofl/hjort/getEmbed.pl and modify it as needed. This script does at least two things that are not possible with, e.g., a command-line hsi command: it only gets the files needed (usually the .geant and .event files), and it changes the permissions after the transfers. Note that if you do the transfers shortly after running the jobs, the files will probably still be in the HPSS disk cache, and transfers will be much faster than getting the files from tape.

2) To clean up old embedding files, make your own copy of ~starofl/hjort/embedAge.pl and use it as needed. Note that $accThresh determines the maximum access time, in days, of files that will not be deleted.
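The two rules above (fetch only the .geant and .event files; delete only files not accessed within $accThresh days) can be written as two small predicates. This is a C++ illustration, not the code of getEmbed.pl or embedAge.pl, and the exact suffixes .geant.root and .event.root are an assumption.

```cpp
#include <cassert>
#include <string>
#include <vector>

// True if `name` ends with `suffix`.
bool endsWith(const std::string& name, const std::string& suffix) {
    return name.size() >= suffix.size() &&
           name.compare(name.size() - suffix.size(), suffix.size(), suffix) == 0;
}

// Keep only the files usually needed from HPSS (assumed .geant.root / .event.root).
std::vector<std::string> filesToFetch(const std::vector<std::string>& names) {
    std::vector<std::string> keep;
    for (const auto& n : names)
        if (endsWith(n, ".geant.root") || endsWith(n, ".event.root")) keep.push_back(n);
    return keep;
}

// A file accessed within accThreshDays is kept; anything older is a deletion candidate.
bool isDeletionCandidate(double daysSinceAccess, double accThreshDays) {
    return daysSinceAccess > accThreshDays;
}
```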

Running Embedding

This page describes how to run embedding jobs once the daq files and tags files are in place (see the other page about embedding production setup).

Basics:

Embedding code is located in production-specific directories: ~starofl/embedding/P0xxx. The basic job submission template is typically called submit.starofl.pl in that directory.

Jobs are usually run by user starofl, but personal accounts with group starprod membership will work too (test first, as group starprod write permissions are typically not in place by default).

The script to submit a set of jobs is submit.[user].pl. The script should be modified to submit an embedding set from the configuration file

~starofl/embedding/[Production]/setup/[Particle]_[set]_[ID].setup

where

[Particle] is the particle type submitted (Piminus for GEANTID=9, as set inside file)

[set] is the file set submitted (more on this later)

[ID] is the embedding request number

Test procedure:

The best way to test a particular job configuration is to run a single job in "DISK" mode (by selecting a specific daq file in your submission). In this mode all of the intermediate files, scripts, logs, etc., are saved on disk, under the "data" link in the working directory. You can then find out which script failed, modify it as necessary and try again until things work.

Details:
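As a detail on the setup-file naming convention [Particle]_[set]_[ID].setup described above, the sketch below splits such a name into its three fields. It is a hypothetical helper, not part of the submission scripts, and it assumes particle names contain no underscore. Note that in the P06ib example (Piminus_101_spectra.setup) the last field is a word rather than a number, so [ID] is kept as a string.

```cpp
#include <cassert>
#include <optional>
#include <string>

// Fields of a setup file name [Particle]_[set]_[ID].setup
struct SetupName {
    std::string particle;  // e.g. Piminus (GEANTID is set inside the file)
    std::string set;       // file set, e.g. 101
    std::string id;        // request identifier, e.g. spectra
};

std::optional<SetupName> parseSetupName(const std::string& file) {
    const std::string ext = ".setup";
    if (file.size() <= ext.size() ||
        file.compare(file.size() - ext.size(), ext.size(), ext) != 0)
        return std::nullopt;
    // Drop the extension and any leading directory part.
    std::string base = file.substr(0, file.size() - ext.size());
    const auto slash = base.rfind('/');
    if (slash != std::string::npos) base = base.substr(slash + 1);
    // Split on the first two underscores (assumes no '_' inside the particle name).
    const auto u1 = base.find('_');
    if (u1 == std::string::npos) return std::nullopt;
    const auto u2 = base.find('_', u1 + 1);
    if (u2 == std::string::npos) return std::nullopt;
    SetupName s{base.substr(0, u1), base.substr(u1 + 1, u2 - u1 - 1), base.substr(u2 + 1)};
    if (s.particle.empty() || s.set.empty() || s.id.empty()) return std::nullopt;
    return s;
}
```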

QA Documentation

New embedding Base QA instructions

Current Embedding Coordinator (EC): Xianglei Zhu (zhux@tsinghua.edu.cn)

Current NERSC Point Of Contact (POC): Jeff Porter (RJPorter@lbl.gov) and Jan Balewski (balewski@lbl.gov)

- Jan/9/2015: Update trigger selection on real data histogram making

- Apr/19/2013: Update responsible persons and add note about Omega mis-labeling issue

- Apr/12/2011: Update NOTE in Section1

- Feb/17/2011: Update NOTE in Section1, and for checking out StAssociationMaker

- Jan/27/2011: Update the Section 3 for some special decay daughters

- Oct/25/2010: Added minimc reproduction in Section 1 and StAssociationMaker in Section

- Jul/23/2010: Update the real data QA

- May/21/2010: Minor style change, added "contents" and "Links" for "Embedding instructions for Embedding Helpers"

- May/14/2010: Update rapidity/trigger selections for real data

- Apr/07/2010: Update the instructions on "drawEmbeddingQA.C"

- Feb/22/2010: Added how to change the z-vertex cut and about 'isEmbeddingOnly' flag in "drawEmbeddingQA.C"

- Jan/29/2010: Update the NOTE for "doEmbeddingQAMaker" (see below)

- Jan/22/2010: Update the documentation for the latest QA code

- Sep/21/2009: Add how to plot the QA histograms in the Section 3

- Sep/18/2009: Modify codes to "StEmbeddingQA*", and macro to "doEmbeddingQAMaker.C" (Please have a look at the instructions below carefully).

- Sep/17/2009: Modify Section 2.1

- Sep/15/2009: Add new embedding QA instructions

1. Produce minimc files

> cvs co StRoot/StMiniMcEvent
> cvs co StRoot/StMiniMcMaker
> cons

.sl53_gcc432/obj/StRoot/StMiniMcMaker/StMiniMcMaker.cxx: In member function 'void StMiniMcMaker::fillRcTrackInfo(StTinyRcTrack*, const StTrack*, const StTrack*, Int_t)':
.sl53_gcc432/obj/StRoot/StMiniMcMaker/StMiniMcMaker.cxx:1622: error: 'const class StTrack' has no member named 'seedQuality'

1.1 Check out the relevant codes and macros in your working directory (suppose your working directory is ${work})

> cp /eliza8/rnc/hmasui/embedding/QA/StMiniHijing.C ${work}

- If the eliza8 is down, you can also copy the macro from the link below

- The current "StMiniHijing.C" has been slightly modified from the original version to obtain a proper minimc filename based on the input geant.root file; see lines 159-162 in "StMiniHijing.C":

159 TString filename = MainFile;
160 // int fileBeginIndex = filename.Index(filePrefix,0);
161 // filename.Remove(0,fileBeginIndex);
162 filename.Remove(0, filename.Last('/')+1);

- You don't need to modify the argument "filePrefix".
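Line 162 strips everything up to the last '/', and the example in step 1.3 shows the resulting output name ending in .minimc.root instead of .geant.root. The sketch below reproduces that naming in plain C++ under the assumption that the suffix is simply swapped; minimcPath is a hypothetical helper, not a function in StMiniHijing.C.

```cpp
#include <cassert>
#include <string>

// Output path for a given input geant.root file (outDir should end with '/').
std::string minimcPath(const std::string& geantPath, const std::string& outDir) {
    // Like filename.Remove(0, filename.Last('/')+1): drop the directory part.
    // If there is no '/', rfind returns npos and npos + 1 wraps to 0 (keep all).
    std::string name = geantPath.substr(geantPath.rfind('/') + 1);
    // Assumed convention: swap ".geant.root" for ".minimc.root".
    const std::string in = ".geant.root", out = ".minimc.root";
    if (name.size() >= in.size() &&
        name.compare(name.size() - in.size(), in.size(), in) == 0)
        name.replace(name.size() - in.size(), in.size(), out);
    return outDir + name;
}
```

Because the name depends only on the daq-file part of the path, two embedding sets built from the same daq file produce the same minimc name, which is why each set needs its own output directory.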

> cvs co StRoot/StAssociationMaker
> cons

1.2 Run "StMiniHijing.C"

Either

> root4star -b -q StMiniHijing.C'(1000, "/eliza9/starprod/embedding/P08ie/dAu/Piplus_201_1233091546/Piplus_st_physics_adc_9020060_raw_2060010_201/st_physics_adc_9020060_raw_2060010.geant.root", "./")'

or

> root4star -b
[0] .L StMiniHijing.C
[1] StMiniHijing(1000, "/eliza9/starprod/embedding/P08ie/dAu/Piplus_201_1233091546/Piplus_st_physics_adc_9020060_raw_2060010_201/st_physics_adc_9020060_raw_2060010.geant.root", "./");
....
[2] .q

- The 1st argument is maximum number of events.

- The 2nd argument is your input geant.root file.

- The 3rd argument is your output directory. For example, if you would like to put the output into the "./output" directory, set the 3rd argument to "./output/".

Please use a separate output directory for each embedding set, such as PiPlus_201_1233091546, PiPlus_202_1233091546 etc.

Since the input geant.root filenames are usually identical among different embedding sets, you will overwrite your minimc.root files if you put the minimc outputs under the same directory.

1.3 Make sure MC geantid is correct

> root4star st_physics_adc_9020060_raw_2060010.minimc.root
[0] StMiniMcTree->Draw("mMcTracks.mGeantId")

or, to print the values instead of drawing them,

[0] StMiniMcTree->Scan("mMcTracks.mGeantId")
[1] .q
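The Draw/Scan check amounts to counting MC tracks per GEANT id and confirming that the requested particle dominates (e.g. 8 for pi+ or 9 for pi-). Decay daughters may show up with other ids, so the sketch below looks at the most frequent id rather than requiring every entry to match. It is a plain-C++ illustration with hypothetical function names, not part of the QA code.

```cpp
#include <cassert>
#include <map>
#include <vector>

// Count MC tracks per GEANT id (what the Scan/Draw of mMcTracks.mGeantId shows).
std::map<int, long> countGeantIds(const std::vector<int>& ids) {
    std::map<int, long> counts;
    for (int id : ids) ++counts[id];
    return counts;
}

// Most frequent GEANT id, or -1 if there are no tracks (ties go to the smaller id).
int dominantGeantId(const std::vector<int>& ids) {
    int best = -1;
    long bestCount = 0;
    for (const auto& [id, n] : countGeantIds(ids))
        if (n > bestCount) { best = id; bestCount = n; }
    return best;
}
```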

2. Run QA codes

2.1 Check out the QA codes from CVS

QA macro

> cvs checkout StRoot/macros/embedding/doEmbeddingQAMaker.C

QA codes

> cvs checkout StRoot/StEmbeddingUtilities

2.2 Run the QA macro

2.2.1 QA for embedding outputs

Suppose your minimc files are listed in "minimc.list" and the output filename for your QA histograms is "embedding.root".

Either

> root4star -b -q doEmbeddingQAMaker.C'(2008, "P08ie", "minimc.list", "embedding.root")'

or

> root4star -b
[0] .L doEmbeddingQAMaker.C
[1] doEmbeddingQAMaker(2008, "P08ie", "minimc.list", "embedding.root");
... ... ...
[2] .q

The details of the arguments can be found in "doEmbeddingQAMaker.C".

- The output file name (4th argument, in this case "embedding.root") will be determined automatically according to the "year", "production" and "particle name" if you leave it blank.

If you would like to change the z-vertex cut, you can modify the 6th argument (60.0 in this example):

> root4star -b
[0] .L doEmbeddingQAMaker.C
[1] doEmbeddingQAMaker(2008, "P08ie", "minimc.list", "", kTRUE, 60.0);
... ... ...
[2] .q

where the 5th argument is the switch to analyze embedding (kTRUE) or real data (kFALSE).

2.2.2 QA for real data

> root4star -b
[0] .L doEmbeddingQAMaker.C
[1] doEmbeddingQAMaker(2008, "P08ie", "mudst.list", "", kFALSE);
... ... ...
[2] .q

2.2.3 Trigger selections and rapidity cuts for real data

- The trigger id can be also selected by

StEmbeddingQAUtilities::addTriggerIdCut(const UInt_t id)

StEmbeddingQAUtilities accepts multiple trigger IDs, while the current code assumes one trigger ID per event.

The trigger ID cut only affects the real data, not the embedding outputs.

- You can also apply rapidity cut by

StEmbeddingQAUtilities::setRapidityCut(const Double_t ycut)

It would be good to use the same rapidity cut for the real data as for the embedding production. Please have a look at the simulation request page for the rapidity cut, or ask the embedding helpers which rapidity cuts they used for the productions.
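The two real-data cuts above can be pictured as a small selector: an event passes if it fired at least one accepted trigger ID, and a track passes if |y| is below the rapidity cut, with y = 0.5 ln((E + pz)/(E - pz)). The class below is a hypothetical plain-C++ sketch that only borrows the method names addTriggerIdCut and setRapidityCut for readability; it is not the StEmbeddingQAUtilities implementation, and the trigger IDs in the test are made up.

```cpp
#include <cassert>
#include <cmath>
#include <set>
#include <vector>

class RealDataSelector {
public:
    void addTriggerIdCut(unsigned id) { triggers_.insert(id); }
    void setRapidityCut(double ycut) { ycut_ = ycut; }

    // No trigger cut configured: accept every event. Otherwise the event
    // must carry at least one of the accepted trigger ids.
    bool acceptEvent(const std::vector<unsigned>& eventTriggers) const {
        if (triggers_.empty()) return true;
        for (unsigned t : eventTriggers)
            if (triggers_.count(t) > 0) return true;
        return false;
    }

    // y = 0.5 ln((E + pz) / (E - pz)), E = sqrt(p^2 + m^2); momenta and mass in GeV.
    static double rapidity(double px, double py, double pz, double mass) {
        const double e = std::sqrt(px * px + py * py + pz * pz + mass * mass);
        return 0.5 * std::log((e + pz) / (e - pz));
    }

    bool acceptTrack(double px, double py, double pz, double mass) const {
        return std::fabs(rapidity(px, py, pz, mass)) < ycut_;
    }

private:
    std::set<unsigned> triggers_;
    double ycut_ = 1e9;  // effectively no rapidity cut until one is set
};
```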

3. Draw your QA results, compare with the real data

You can make the QA plots by "drawEmbeddingQA.C" under "StRoot/macros/embedding".

> cvs checkout StRoot/macros/embedding/drawEmbeddingQA.C

Suppose you have the QA output files "qa_embedding_2007_P08ic.root" and "qa_real_2007_P08ic.root" (the output file name is determined automatically in this format if you leave it blank in StEmbeddingQA).

> root4star -l drawEmbeddingQA.C'("./", "qa_embedding_2007_P08ic.root", "qa_real_2007_P08ic.root", 8, 10.0)'

First argument is the directory where the output PDF file is printed.

The default output directory is the current directory.

You can now check the QA histograms from embedding outputs only by

> root4star -l drawEmbeddingQA.C'("./", "qa_embedding_2007_P08ic.root", "qa_real_2007_P08ic.root", 8, 10.0, kTRUE)'

where the last argument 'isEmbeddingOnly' (default kFALSE) is the switch to draw the QA histograms for the embedding outputs only when it is true.

If you name the output ROOT files by hand, you need to give the year and production yourself, since they are used for the output figure name and are printed in the legend of each QA plot.

Suppose you have output ROOT files in your current directory: "qa_embedding.root" and "qa_real.root"

> root4star -l drawEmbeddingQA.C'("./", "qa_embedding.root", "qa_real.root", 2005, "P08ic", 8, 10.0, kFALSE)'

> root4star -l drawEmbeddingQA.C'("./", "qa_embedding_2007_P08ic.root", "qa_real_2007_P08ic.root", 8, 10, kFALSE, 37)'

maker->setParentGeantId(parentGeantId) ;

NOTE: Omegas are labelled as Anti-Omegas and vice versa. This is a known issue due to

of these libraries might fix it. For now, it has not been considered necessary to update all

embedding libraries to fix this issue. Please take note of this in all presentations regarding

(Anti-)Omega embedding.

4. Links

End of New embedding QA instructions

------------------------------------------------------------------------------------

This document is intended to describe the macros used during the quality assurance (QA) studies. This page is being updated today, April 19, 2009.

* Macro : scan_embed_mc.C

Once you know the location of the minimc.root files, use this macro to generate an output file with extension .root in which all the histograms for a basic QA are filled. New histograms have been added; for instance, a 3D histogram for DCA (pt, eta, dca) gives the distribution of DCA as a function of pt and eta simultaneously. The same is done for the number of fit points (pt, eta, nfit). Histograms to evaluate the randomness of the embedding files have also been added to this macro.

* Macros: scan_embed_mudst.C

Hopefully you won't have to use this macro unless it is requested. It generates an output ROOT file with distributions coming from the MuDst (MuDst from Lidia) for a particular production. You will just need the location of the output file.

* Macro : plot_embed.C

This macro takes both outputs (the one coming from minimc and the one coming from MuDst) and plots all the basic QA distributions for a particular production.
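The 3D histograms above are used by slicing them: plot_embed.C, for instance, sums the DCA and Nfit histograms over a multiplicity window and a pT bin and normalises each slice to unit sum before overlaying the embedding, MuDst and previous-embedding curves. The plain-C++ sketch below shows only that arithmetic; the Hist3 type is a hypothetical stand-in for ROOT's TH3, whose ProjectionZ also deals with under/overflow bins and errors.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Minimal stand-in for a TH3: counts[ix][iy][iz] with 0-based bin indices.
using Hist3 = std::vector<std::vector<std::vector<double>>>;

// Sum the z distributions over the inclusive bin ranges [x1, x2] and [y1, y2]
// (like TH3::ProjectionZ), then scale to unit sum so that samples of
// different size can be compared by shape.
std::vector<double> projectZNormalized(const Hist3& h, std::size_t x1, std::size_t x2,
                                       std::size_t y1, std::size_t y2) {
    const std::size_t nz = h.at(0).at(0).size();
    std::vector<double> out(nz, 0.0);
    for (std::size_t ix = x1; ix <= x2; ++ix)
        for (std::size_t iy = y1; iy <= y2; ++iy)
            for (std::size_t iz = 0; iz < nz; ++iz)
                out[iz] += h.at(ix).at(iy).at(iz);
    double sum = 0.0;
    for (double v : out) sum += v;
    if (sum > 0)  // an empty slice is left as all zeros
        for (double& v : out) v /= sum;
    return out;
}
```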

Hit Level

At the hit level, this is the documentation:

* StEmbedHitsMaker.C

* doEmbedHits.C

* ScanHits.C

* PlotHits.C

See below the links for these macros

Scan-Hits.C

Plot_Hits.C

Plot_Dca.C

Plot_Nfit.C

Plot_embed

<code>// plot_embed.C: draw the basic embedding QA plots.
// First run scan_embed.C to generate the ROOT file with all the histograms.
// V. May 31 2007 - Cristina
//
// Written for the CINT-based root4star; run e.g.  root4star -l 'plot_embed.C(9)'
// keyLine() and keySymbol() are plotting helpers defined in Utility.C.
#ifndef __CINT__
#include <cstdio>
#include <iostream>
#include "TROOT.h"
#include "TSystem.h"
#include "TStyle.h"
#include "TString.h"
#include "TH1.h"
#include "TH2.h"
#include "TH3.h"
#include "TProfile.h"
#include "TF1.h"
#include "TFile.h"
#include "TTree.h"
#include "TChain.h"
#include "TTreeHelper.h"
#include "TText.h"
#include "TLatex.h"
#include "TAttLine.h"
#include "TCanvas.h"
using namespace std;
#endif

// Project a (nch, pt, X) TH3 onto its Z axis for the given multiplicity and
// pt window, then normalise the projection to unit sum.
TH1D *projectNormZ(TH3D *h, const char *name, int nchLo, int nchHi,
                   double ptLo, double ptHi)
{
  int bx1 = h->GetXaxis()->FindBin(nchLo);
  int bx2 = h->GetXaxis()->FindBin(nchHi);
  int by1 = h->GetYaxis()->FindBin(ptLo);
  int by2 = h->GetYaxis()->FindBin(ptHi);
  TH1D *p = h->ProjectionZ(name, bx1, bx2, by1, by2);
  double sum = p->GetSum();
  cout << p->GetName() << " sum = " << sum << endl;
  if (sum > 0) p->Scale(1. / sum);
  return p;
}

void plot_embed(Int_t id = 9)
{
  gROOT->LoadMacro("~/macros/Utility.C"); // location of Utility.C
  gStyle->SetOptStat(1);
  //gStyle->SetOptTitle(0);
  gStyle->SetOptDate(0);
  gStyle->SetOptFit(0);
  gStyle->SetPalette(1);

  // Particle name and squared mass, (GeV/c^2)^2, for the GEANT id
  // (informational only: neither is used further below)
  TString tag;
  float mass2 = 0;
  if (id == 8)  { tag = "Piplus";  mass2 = 0.019; }
  if (id == 9)  { tag = "Piminus"; mass2 = 0.019; }
  if (id == 11) { tag = "Kplus";   mass2 = 0.245; }
  if (id == 12) { tag = "Kminus";  mass2 = 0.245; }
  if (id == 14) { tag = "Proton";  mass2 = 0.880; }
  if (id == 15) { tag = "Pbar";    mass2 = 0.880; }
  if (id == 50) { tag = "Phi";     mass2 = 1.039; }
  if (id == 2)  { tag = "Eplus";   mass2 = 2.61e-7; }
  if (id == 1)  { tag = "Dmeson";  mass2 = 3.478; }

  char text1[80];
  sprintf(text1, "P05_CuCu200_01_02_08"); // label shown on all the histograms
  char title[100];
  TString prod = "P05_CuCu200_01_02_08";
  int nch1 = 0;     // multiplicity window for the DCA and Nfit projections
  int nch2 = 1000;
  Double_t pt[4] = {0.3, 0.4, 0.5, 0.6}; // pT bin edges (GeV/c) of the DCA/Nfit panels

  /////////////////////////////////////////////////
  // Input histograms (cloned from the scan_embed.C output)
  /////////////////////////////////////////////////
  TFile *f1 = new TFile("~/data/P05_CuCu200_010208.root");
  if (f1->IsZombie()) { cout << "Cannot open input file" << endl; return; }
  TH3D *hDca1    = (TH3D*)f1->Get("hDca")->Clone("hDca1");     // DCA
  TH3D *hNfit1   = (TH3D*)f1->Get("hNfit")->Clone("hNfit1");   // Nfit
  TH2D *hPtM_E1  = (TH2D*)f1->Get("hPtM_E")->Clone("hPtM_E1"); // energy loss
  TH2D *dedx1    = (TH2D*)f1->Get("dedx")->Clone("dedx1");
  TH2D *dedxG1   = (TH2D*)f1->Get("dedxG")->Clone("dedxG1");
  TH2D *vxy1     = (TH2D*)f1->Get("vxy")->Clone("vxy1");
  TH1D *vz1      = (TH1D*)f1->Get("vz")->Clone("vz1");
  TH1D *dvx1     = (TH1D*)f1->Get("dvx")->Clone("dvx1");
  TH1D *dvy1     = (TH1D*)f1->Get("dvy")->Clone("dvy1");
  TH1D *dvz1     = (TH1D*)f1->Get("dvz")->Clone("dvz1");
  TH1D *PhiMc1   = (TH1D*)f1->Get("PhiMc")->Clone("PhiMc1");
  TH1D *EtaMc1   = (TH1D*)f1->Get("EtaMc")->Clone("EtaMc1");
  TH1D *PtMc1    = (TH1D*)f1->Get("PtMc")->Clone("PtMc1");
  TH1D *PhiM1    = (TH1D*)f1->Get("PhiM")->Clone("PhiM1");
  TH1D *EtaM1    = (TH1D*)f1->Get("EtaM")->Clone("EtaM1");
  TH1D *PtM1     = (TH1D*)f1->Get("PtM")->Clone("PtM1");
  TH2D *PtM_eff1 = (TH2D*)f1->Get("hPtM_eff")->Clone("PtM_eff1"); // efficiency
  // If you have the MuDst histograms
  TH3D *hDca1r   = (TH3D*)f1->Get("hDcaR")->Clone("hDca1r");
  TH3D *hNfit1r  = (TH3D*)f1->Get("hNfitR")->Clone("hNfit1r");
  TH2D *dedx1R   = (TH2D*)f1->Get("dedxR")->Clone("dedx1R");

  // "Previous embedding" reference (blue curves in the DCA and Nfit plots).
  // By default this is the same file; point fPrev at an older production to compare.
  TFile *fPrev = f1;
  TH3D *hDca  = (TH3D*)fPrev->Get("hDca");
  TH3D *hNfit = (TH3D*)fPrev->Get("hNfit");

  /*
  // Use the following if you need to compare with other productions
  TFile *f2 = new TFile("~/data/test_P07ib_pi_10percent_10_03_07.root");
  TH2D *PtM_eff2 = (TH2D*)f2->Get("hPtM_eff")->Clone("PtM_eff2");
  TH3D *hNfit2   = (TH3D*)f2->Get("hNfit")->Clone("hNfit2");
  TH1D *PtM2     = (TH1D*)f2->Get("PtM")->Clone("PtM2");
  TH1D *PtMc2    = (TH1D*)f2->Get("PtMc")->Clone("PtMc2");
  TFile *f3 = new TFile("~/data/test_P07ib_pi_10percent_10_12_07.root");
  TH3D *hNfit3   = (TH3D*)f3->Get("hNfit")->Clone("hNfit3");
  TH2D *PtM_eff3 = (TH2D*)f3->Get("hPtM_eff")->Clone("PtM_eff3");
  TH1D *PtM3     = (TH1D*)f3->Get("PtM")->Clone("PtM3");
  TH1D *PtMc3    = (TH1D*)f3->Get("PtMc")->Clone("PtMc3");
  */

  ////////////////////////////////////////////////////////////
  // Efficiency: matched pairs / embedded MC tracks vs pT
  ////////////////////////////////////////////////////////////
  TCanvas *c10 = new TCanvas("c10", "Efficiency", 500, 500);
  c10->SetGridx(0);
  c10->SetGridy(0);
  c10->SetLeftMargin(0.15);
  c10->SetRightMargin(0.05);
  c10->cd();
  // Work on rebinned clones so PtM1/PtMc1 stay untouched for the pT spectra below
  TH1D *hNum1 = (TH1D*)PtM1->Clone("hNum1");
  TH1D *hDen1 = (TH1D*)PtMc1->Clone("hDen1");
  hNum1->Rebin(2);
  hDen1->Rebin(2);
  TH1D *hEff1 = (TH1D*)hNum1->Clone("hEff1");
  hEff1->Divide(hNum1, hDen1, 1., 1., "B"); // binomial errors
  hEff1->SetLineColor(1);
  hEff1->SetMarkerStyle(23);
  hEff1->SetMarkerColor(1);
  hEff1->SetXTitle("pT (GeV/c)");
  hEff1->SetAxisRange(0.0, 6.0, "X");
  hEff1->Draw();
  /*
  // Overlay other productions (requires f2 and f3 above)
  PtM2->Rebin(2);
  PtMc2->Rebin(2);
  PtM2->Divide(PtMc2);
  PtM2->SetLineColor(9);
  PtM2->SetMarkerStyle(21);
  PtM2->SetMarkerColor(9);
  PtM2->Draw("same");
  PtM3->Rebin(2);
  PtMc3->Rebin(2);
  PtM3->Divide(PtMc3);
  PtM3->SetLineColor(2);
  PtM3->SetMarkerStyle(22);
  PtM3->SetMarkerColor(2);
  PtM3->Draw("same");
  */
  keySymbol(0.08, 1.0, text1, 1, 23, 0.04);
  c10->Update();

  /////////////////////////////////////////////////////////////
  // Vertex position
  /////////////////////////////////////////////////////////////
  TCanvas *c6 = new TCanvas("c6", "Vertex position", 600, 400);
  c6->Divide(2, 1);
  c6->cd(1);
  vz1->Rebin(2);
  vz1->SetXTitle("Vertex Z");
  vz1->Draw();
  c6->cd(2);
  vxy1->SetAxisRange(-1.5, 1.5, "X");
  vxy1->SetAxisRange(-1.5, 1.5, "Y");
  vxy1->SetXTitle("vertex X");
  vxy1->SetYTitle("vertex Y");
  vxy1->Draw("colz");
  keyLine(.2, 1.05, text1, 1);
  c6->Update();

  /////////////////////////////////////////////////////////////
  // dE/dx
  /////////////////////////////////////////////////////////////
  TCanvas *c8 = new TCanvas("c8", "dEdx vs P", 500, 500);
  c8->SetGridx(0);
  c8->SetGridy(0);
  c8->SetLeftMargin(0.15);
  c8->SetRightMargin(0.05);
  c8->cd();
  dedxG1->SetXTitle("Momentum P (GeV/c)");
  dedxG1->SetYTitle("dE/dx");
  dedxG1->SetAxisRange(0, 5., "X");
  dedxG1->SetAxisRange(0, 8., "Y");
  dedxG1->SetMarkerColor(1);
  dedxG1->Draw();
  dedx1->SetMarkerStyle(7);
  dedx1->SetMarkerSize(0.3);
  dedx1->SetMarkerColor(2);
  dedx1->Draw("same");
  keyLine(.3, 0.87, "Embedded Tracks", 2);
  keyLine(.3, 0.82, "Ghost Tracks", 1);
  keyLine(.2, 1.05, text1, 1);
  c8->Update();

  /////////////////////////////////////////////////////
  // MIPs (just for pions)
  /////////////////////////////////////////////////////
  if (id == 8 || id == 9) {
    TCanvas *c9 = new TCanvas("c9", "MIPS", 500, 500);
    c9->SetGridx(0);
    c9->SetGridy(0);
    c9->SetLeftMargin(0.15);
    c9->SetRightMargin(0.05);
    c9->cd();
    double p1 = 0.4; // momentum window (GeV/c) for the MIP peak
    double p2 = 0.6;
    // dE/dx of ghost (real) tracks in the momentum window
    int blG = dedxG1->GetXaxis()->FindBin(p1);
    int bhG = dedxG1->GetXaxis()->FindBin(p2);
    TH1D *rpy = dedxG1->ProjectionY("rpy", blG, bhG);
    rpy->SetTitle("MIPS");
    rpy->SetMarkerStyle(22);
    // dE/dx of embedded tracks in the same window
    int blm = dedx1->GetXaxis()->FindBin(p1);
    int bhm = dedx1->GetXaxis()->FindBin(p2);
    TH1D *mpy = dedx1->ProjectionY("mpy", blm, bhm);
    mpy->SetMarkerStyle(22);
    mpy->SetMarkerColor(2);
    // Book errors before scaling so that they are scaled as well
    if (rpy->GetSumw2N() == 0) rpy->Sumw2();
    if (mpy->GetSumw2N() == 0) mpy->Sumw2();
    // Scale the embedded peak to the height of the ghost-track peak
    float max_rpy = rpy->GetMaximum() / mpy->GetMaximum();
    mpy->Scale(max_rpy);
    cout << "max_rpy is: " << max_rpy << endl;
    // Gaussian fits of the MIP peak
    rpy->Fit("gaus", "", "", 1, 4);
    mpy->Fit("gaus", "", "", 1, 4);
    mpy->GetFunction("gaus")->SetLineColor(2);
    rpy->SetAxisRange(0.5, 6.0, "X");
    mpy->SetAxisRange(0.5, 6.0, "X");
    mpy->Draw();
    rpy->Draw("same");
    float mipMc      = mpy->GetFunction("gaus")->GetParameter(1); // mean
    float mipGhost   = rpy->GetFunction("gaus")->GetParameter(1);
    float sigmaMc    = mpy->GetFunction("gaus")->GetParameter(2); // sigma
    float sigmaGhost = rpy->GetFunction("gaus")->GetParameter(2);
    char label1[80], label2[80], label3[80], label4[80];
    sprintf(label1, "mip MC %.3f", mipMc);
    sprintf(label2, "mip Ghost %.3f", mipGhost);
    sprintf(label3, "sigma MC %.3f", sigmaMc);
    sprintf(label4, "sigma Ghost %.3f", sigmaGhost);
    keySymbol(.5, .90, label1, 2, 1);
    keySymbol(.5, .85, label3, 2, 1);
    keySymbol(.5, .75, label2, 1, 1);
    keySymbol(.5, .70, label4, 1, 1);
    keyLine(.2, 1.05, text1, 1);
    char name[80];
    sprintf(name, "%.2f GeV/c < p < %.2f GeV/c", p1, p2);
    keySymbol(0.3, 0.65, name, 1, 1, 0.04);
    c9->Update();
  } // close if pion

  /////////////////////////////////////////////////////////////
  // Energy loss: mean (pT reco - pT MC) vs reconstructed pT
  /////////////////////////////////////////////////////////////
  TCanvas *c7 = new TCanvas("c7", "Energy Loss", 400, 400);
  c7->SetGridx(0);
  c7->SetGridy(0);
  c7->SetLeftMargin(0.20);
  c7->SetRightMargin(0.05);
  c7->cd();
  TProfile *pfx = hPtM_E1->ProfileX("pfx");
  pfx->SetAxisRange(-0.01, 0.01, "Y");
  pfx->SetAxisRange(0, 6, "X");
  pfx->GetYaxis()->SetDecimals();
  pfx->SetMarkerStyle(23);
  pfx->SetMarkerSize(0.038);
  pfx->SetMarkerColor(4);
  pfx->SetLineColor(4);
  pfx->SetXTitle("Pt-Reco");
  pfx->SetYTitle("PtM - PtMc");
  pfx->SetTitleOffset(2, "Y");
  pfx->Draw();
  c7->Update();

  /////////////////////////////////////////////////////////////
  // pT
  /////////////////////////////////////////////////////////////
  TCanvas *c2 = new TCanvas("c2", "pt", 500, 500);
  c2->SetGridx(0);
  c2->SetGridy(0);
  c2->SetTitle("");
  c2->cd();
  // Embedded MC tracks
  PtMc1->Rebin(2);
  PtMc1->SetLineColor(2);
  PtMc1->SetMarkerStyle(20);
  PtMc1->SetMarkerColor(2);
  PtMc1->SetXTitle("pT (GeV/c)");
  PtMc1->SetAxisRange(0.0, 6.0, "X");
  PtMc1->Draw();
  // Reconstructed matched pairs
  PtM1->Rebin(2);
  PtM1->SetMarkerStyle(20);
  PtM1->SetMarkerColor(1);
  PtM1->Draw("same");
  keySymbol(.2, 1.05, text1, 1);
  keyLine(.3, 0.20, "Embedded-McTracks", 2);
  keyLine(.3, 0.16, "Matched Pairs", 1);
  c2->Update();

  TLatex lprod; // production label, placed in NDC coordinates
  lprod.SetNDC();
  lprod.SetTextSize(0.04);

  /////////////////////////////////////////////////////////////
  // phi
  /////////////////////////////////////////////////////////////
  TCanvas *c5 = new TCanvas("c5", "phi", 500, 500);
  c5->SetGridx(0);
  c5->SetGridy(0);
  c5->SetTitle("");
  c5->cd();
  PhiMc1->Rebin(2);
  PhiMc1->SetLineColor(2);
  PhiMc1->SetMarkerStyle(20);
  PhiMc1->SetMarkerColor(2);
  PhiMc1->SetXTitle("Phi");
  PhiMc1->SetAxisRange(-4, 4.0, "X");
  PhiMc1->Draw();
  PhiM1->Rebin(2);
  PhiM1->SetMarkerStyle(20);
  PhiM1->SetMarkerColor(1);
  PhiM1->Draw("same");
  lprod.DrawLatex(0.55, 0.85, prod);
  keySymbol(.2, 1.05, text1, 1);
  keyLine(.3, 0.20, "Embedded-McTracks", 2);
  keyLine(.3, 0.16, "Reco - Matched Pairs", 1);
  c5->Update();

  /////////////////////////////////////////////////////////////
  // eta
  /////////////////////////////////////////////////////////////
  TCanvas *c3 = new TCanvas("c3", "Eta", 500, 500);
  c3->SetGridx(0);
  c3->SetGridy(0);
  c3->SetTitle("");
  c3->cd();
  EtaMc1->Rebin(2);
  EtaMc1->SetLineColor(2);
  EtaMc1->SetMarkerStyle(20);
  EtaMc1->SetMarkerColor(2);
  EtaMc1->SetXTitle("Eta");
  EtaMc1->SetAxisRange(-1.2, 1.2, "X");
  EtaMc1->Draw();
  EtaM1->Rebin(2);
  EtaM1->SetMarkerStyle(20);
  EtaM1->SetMarkerColor(1);
  EtaM1->Draw("same");
  lprod.DrawLatex(0.55, 0.85, prod);
  keySymbol(.2, 1.05, text1, 1);
  keyLine(.3, 0.20, "Embedded-McTracks", 2);
  keyLine(.3, 0.16, "Reco - Matched Pairs", 1);
  c3->Update();

  /////////////////////////////////////////////////////////////
  // DCA in pT bins (normalised shapes)
  /////////////////////////////////////////////////////////////
  TCanvas *c = new TCanvas("c", "DCA", 800, 400);
  c->Divide(3, 1);
  c->SetGridx(0);
  c->SetGridy(0);
  for (Int_t i = 0; i < 3; i++) {
    c->cd(i + 1);
    TH1D *hDcaMc   = projectNormZ(hDca1,  Form("hDca1_%d", i),  nch1, nch2, pt[i], pt[i+1]); // matched pairs
    TH1D *hDcaReal = projectNormZ(hDca1r, Form("hDcar1_%d", i), nch1, nch2, pt[i], pt[i+1]); // MuDst
    TH1D *hDcaPrev = projectNormZ(hDca,   Form("hDca_%d", i),   nch1, nch2, pt[i], pt[i+1]); // previous embedding
    sprintf(title, "%.2f GeV/c < pT < %.2f GeV/c, %d < nch < %d", pt[i], pt[i+1], nch1, nch2);
    hDcaMc->SetTitle(title);
    hDcaMc->SetXTitle("Dca (cm)");
    hDcaMc->SetLineColor(2);
    hDcaMc->Draw();
    hDcaReal->Draw("same");
    hDcaPrev->SetLineColor(4);
    hDcaPrev->Draw("same");
    keySymbol(.4, .95, text1, 1, 1);
    keyLine(0.4, 0.90, "MC- Matched Pairs", 2);
    keyLine(0.4, 0.85, "MuDst", 1);
    keyLine(0.4, 0.80, "Previous Embedding P06ib", 4);
  }
  c->Update();

  /////////////////////////////////////////////////////////////
  // Nfit in pT bins (normalised shapes)
  /////////////////////////////////////////////////////////////
  TCanvas *c1 = new TCanvas("c1", "NFIT", 800, 400);
  c1->Divide(3, 1);
  c1->SetGridx(0);
  c1->SetGridy(0);
  for (Int_t i = 0; i < 3; i++) {
    c1->cd(i + 1);
    TH1D *hNfitMc   = projectNormZ(hNfit1,  Form("hNfit1_%d", i),  nch1, nch2, pt[i], pt[i+1]); // matched pairs
    TH1D *hNfitReal = projectNormZ(hNfit1r, Form("hNfitr1_%d", i), nch1, nch2, pt[i], pt[i+1]); // MuDst
    TH1D *hNfitPrev = projectNormZ(hNfit,   Form("hNfit_%d", i),   nch1, nch2, pt[i], pt[i+1]); // previous embedding
    sprintf(title, "%.2f GeV/c < pT < %.2f GeV/c, %d < nch < %d", pt[i], pt[i+1], nch1, nch2);
    hNfitMc->SetTitle(title);
    hNfitMc->SetXTitle("Nfit");
    hNfitMc->SetLineColor(2);
    hNfitMc->Draw();
    hNfitReal->Draw("same");
    hNfitPrev->SetLineColor(4);
    hNfitPrev->Draw("same");
    keySymbol(.2, .95, text1, 1, 1);
    keyLine(0.2, 0.90, "MC- Matched Pairs", 2);
    keyLine(0.2, 0.85, "MuDst", 1);
    keyLine(0.2, 0.80, "Previous Embedding P06ib", 4);
  }
  c1->Update();
}
</code>
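The efficiency panel of plot_embed.C divides the reconstructed matched-pair pT spectrum (PtM) by the embedded MC spectrum (PtMc) after rebinning both. Outside ROOT, the same per-bin ratio with a simple binomial error can be sketched as below; inside ROOT, TH1::Divide(num, den, 1, 1, "B") or the TEfficiency class are the usual tools. The function name and the convention of returning zero for bins without MC tracks are assumptions of this sketch.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct EffPoint { double eff; double err; };

// Per-bin efficiency = matched / MC with binomial error sqrt(eff (1 - eff) / nMC).
// Bins with no MC tracks get eff = err = 0; eff > 1 (e.g. split tracks) gets err 0.
std::vector<EffPoint> efficiency(const std::vector<double>& matched,
                                 const std::vector<double>& mc) {
    std::vector<EffPoint> out;
    for (std::size_t i = 0; i < mc.size() && i < matched.size(); ++i) {
        if (mc[i] <= 0) { out.push_back({0.0, 0.0}); continue; }
        const double e = matched[i] / mc[i];
        out.push_back({e, std::sqrt(std::max(0.0, e * (1.0 - e)) / mc[i])});
    }
    return out;
}
```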

scan_embed.C

Notes

Overview

The document is intended as a forum for embedding group members to share information and notes.

Jobs Done

P06ib:

6050022_1050001.28606: /eliza5/starprod/embedding/P06ib

6050022_1050001.7247: /eliza5/starprod/embedding/P06ib

6050022_1060001.3717: /eliza5/starprod/embedding/P06ib

6050022_1070001.10533: /eliza5/starprod/embedding/P06ib

6050022_1070001.15557: /eliza5/starprod/embedding/P06ib

6050022_1080001.26181: /eliza5/starprod/embedding/P06ib

6050022_1080002.25705: /eliza5/starprod/embedding/P06ib

6050022_1080002.6089: /eliza5/starprod/embedding/P06ib

6050022_2050001.28441: /eliza5/starprod/embedding/P06ib

6050022_2060001.20921: /eliza5/starprod/embedding/P06ib

6050022_2060001.2233: /eliza5/starprod/embedding/P06ib

6050022_2060002.29805: /eliza5/starprod/embedding/P06ib

6050022_2060002.31396: /eliza5/starprod/embedding/P06ib

6050022_2070001.1384: /eliza5/starprod/embedding/P06ib

6050022_2070001.27931: /eliza5/starprod/embedding/P06ib

6050022_3050001.26648: /eliza5/starprod/embedding/P06ib

6050022_3050002.20435: /eliza5/starprod/embedding/P06ib

6050022_3060001.32622: /eliza5/starprod/embedding/P06ib

6050022_3060001.6911: /eliza5/starprod/embedding/P06ib

6050022_3060002.10366: /eliza5/starprod/embedding/P06ib

6050022_3060002.5583: /eliza5/starprod/embedding/P06ib

6052072_1050001.15271: /eliza5/starprod/embedding/P06ib

6052072_1060001.18062: /eliza5/starprod/embedding/P06ib

6052072_1080001.20338: /eliza5/starprod/embedding/P06ib

6052072_1080002.27976: /eliza5/starprod/embedding/P06ib

6052072_2070001.27969: /eliza5/starprod/embedding/P06ib

6052072_2070002.21296: /eliza5/starprod/embedding/P06ib

6052072_2080001.17830: /eliza5/starprod/embedding/P06ib

6052072_3070001.15967: /eliza5/starprod/embedding/P06ib

AOmega_111_strange: /eliza5/starprod/embedding/P06ib

AOmega_112_strange: /eliza5/starprod/embedding/P06ib

AOmega_121_strange: /eliza5/starprod/embedding/P06ib

AOmega_122_strange: /eliza5/starprod/embedding/P06ib

AOmega_123_strange: /eliza5/starprod/embedding/P06ib

AXi_101_strange: /eliza5/starprod/embedding/P06ib

AXi_102_strange: /eliza5/starprod/embedding/P06ib

AXi_111_strange: /eliza5/starprod/embedding/P06ib

AXi_112_strange: /eliza5/starprod/embedding/P06ib

AXi_113_strange: /eliza5/starprod/embedding/P06ib

E_101_1154003879: /eliza5/starprod/embedding/P06ib

E_102_1154003879: /eliza5/starprod/embedding/P06ib

E_106_1154003879: /eliza5/starprod/embedding/P06ib

E_107_1154003879: /eliza5/starprod/embedding/P06ib

E_201_1154003879: /eliza5/starprod/embedding/P06ib

E_202_1154003879: /eliza5/starprod/embedding/P06ib

E_203_1154003879: /eliza5/starprod/embedding/P06ib

E_204_1154003879: /eliza5/starprod/embedding/P06ib

E_205_1154003879: /eliza5/starprod/embedding/P06ib

E_206_1154003879: /eliza5/starprod/embedding/P06ib

E_207_1154003879: /eliza5/starprod/embedding/P06ib

Eplus_502_1154003879: /eliza5/starprod/embedding/P06ib

Lambda_201_strange: /eliza5/starprod/embedding/P06ib

Omega_111_strange: /eliza5/starprod/embedding/P06ib

Omega_112_strange: /eliza5/starprod/embedding/P06ib

Omega_113_strange: /eliza5/starprod/embedding/P06ib

Phi_101_1163628205: /eliza5/starprod/embedding/P06ib

Pi0_101_1163628205: /eliza5/starprod/embedding/P06ib

Pi0_102_1163628205: /eliza5/starprod/embedding/P06ib

Pi0_103_1163628205: /eliza5/starprod/embedding/P06ib

Xi_111_strange: /eliza5/starprod/embedding/P06ib

Xi_112_strange: /eliza5/starprod/embedding/P06ib

Xi_113_strange: /eliza5/starprod/embedding/P06ib

P05if:

Eminus_503_1154003879: /eliza12/starprod/embedding/P05if

Eminus_504_1154003879: /eliza12/starprod/embedding/P05if

Eplus_503_1154003879: /eliza12/starprod/embedding/P05if

Eplus_504_1154003879: /eliza12/starprod/embedding/P05if

Eplus_505_1154003879: /eliza12/starprod/embedding/P05if

P05ic:

K0Short_122_strange: /auto/pdsfdv40/starprod/embedding/P05ic

K0Short_124_strange: /auto/pdsfdv40/starprod/embedding/P05ic

K0Short_131_strange: /auto/pdsfdv40/starprod/embedding/P05ic

K0Short_132_strange: /auto/pdsfdv40/starprod/embedding/P05ic

Lambda_112_strange: /auto/pdsfdv40/starprod/embedding/P05ic

Lambda_114_strange: /auto/pdsfdv40/starprod/embedding/P05ic

Lambda_122_strange: /auto/pdsfdv40/starprod/embedding/P05ic

Lambda_124_strange: /auto/pdsfdv40/starprod/embedding/P05ic

Lambda_131_strange: /auto/pdsfdv40/starprod/embedding/P05ic

Lambda_132_strange: /auto/pdsfdv40/starprod/embedding/P05ic

Piminus_101_spectra: /auto/pdsfdv40/starprod/embedding/P05ic

Piplus_101_spectra: /auto/pdsfdv40/starprod/embedding/P05ic

Pbar_201_spectra: /auto/pdsfdv45/starprod/embedding/P05ic

Pbar_202_spectra: /auto/pdsfdv45/starprod/embedding/P05ic

Phi_115_1118150698: /auto/pdsfdv45/starprod/embedding/P05ic

Phi_116_1118150698: /auto/pdsfdv45/starprod/embedding/P05ic

Phi_117_1118150698: /auto/pdsfdv45/starprod/embedding/P05ic

Piplus_201_spectra: /auto/pdsfdv45/starprod/embedding/P05ic

Piplus_202_spectra: /auto/pdsfdv45/starprod/embedding/P05ic

K0Short_101_strange: /dante3/starprod/embedding/P05ic

K0Short_102_strange: /dante3/starprod/embedding/P05ic

Jpsi_102_spectra: /eliza1/starprod/embedding/P05ic

Jpsi_103_spectra: /eliza1/starprod/embedding/P05ic

Jpsi_104_spectra: /eliza1/starprod/embedding/P05ic

Jpsi_105_spectra: /eliza1/starprod/embedding/P05ic

Piplus_201_ftpcw: /eliza1/starprod/embedding/P05ic

Sigma1385minus_301_strange: /eliza1/starprod/embedding/P05ic

Sigma1385plus_301_strange: /eliza1/starprod/embedding/P05ic

Sigma1385plus_302_strange: /eliza1/starprod/embedding/P05ic

Omega_101_strange: /eliza5/starprod/embedding/P05ic

Omega_102_strange: /eliza5/starprod/embedding/P05ic

Photon_201_spectra: /eliza5/starprod/embedding/P05ic

Photon_202_spectra: /eliza5/starprod/embedding/P05ic

Photon_203_spectra: /eliza5/starprod/embedding/P05ic

Photon_204_spectra: /eliza5/starprod/embedding/P05ic

Photon_205_spectra: /eliza5/starprod/embedding/P05ic

Photon_206_spectra: /eliza5/starprod/embedding/P05ic

Photon_207_spectra: /eliza5/starprod/embedding/P05ic

Photon_208_spectra: /eliza5/starprod/embedding/P05ic

Photon_209_spectra: /eliza5/starprod/embedding/P05ic

Photon_210_spectra: /eliza5/starprod/embedding/P05ic

Photon_211_spectra: /eliza5/starprod/embedding/P05ic

Photon_212_spectra: /eliza5/starprod/embedding/P05ic

Photon_213_spectra: /eliza5/starprod/embedding/P05ic

Photon_214_spectra: /eliza5/starprod/embedding/P05ic

Photon_215_spectra: /eliza5/starprod/embedding/P05ic

Photon_301_spectra: /eliza5/starprod/embedding/P05ic

Photon_302_spectra: /eliza5/starprod/embedding/P05ic

Photon_303_spectra: /eliza5/starprod/embedding/P05ic

Photon_304_spectra: /eliza5/starprod/embedding/P05ic

Photon_305_spectra: /eliza5/starprod/embedding/P05ic

Photon_306_spectra: /eliza5/starprod/embedding/P05ic

Photon_308_spectra: /eliza5/starprod/embedding/P05ic

Photon_309_spectra: /eliza5/starprod/embedding/P05ic

Photon_310_spectra: /eliza5/starprod/embedding/P05ic

Photon_311_spectra: /eliza5/starprod/embedding/P05ic

Photon_312_spectra: /eliza5/starprod/embedding/P05ic

Photon_313_spectra: /eliza5/starprod/embedding/P05ic

Photon_314_spectra: /eliza5/starprod/embedding/P05ic

Photon_315_spectra: /eliza5/starprod/embedding/P05ic

Photon_501_spectra: /eliza5/starprod/embedding/P05ic

Photon_502_spectra: /eliza5/starprod/embedding/P05ic

Photon_503_spectra: /eliza5/starprod/embedding/P05ic

Photon_504_spectra: /eliza5/starprod/embedding/P05ic

Piminus_101_spectra: /eliza5/starprod/embedding/P05ic

Piminus_118_spectra: /eliza5/starprod/embedding/P05ic

Piminus_119_spectra: /eliza5/starprod/embedding/P05ic

Piminus_120_spectra: /eliza5/starprod/embedding/P05ic

Piminus_121_spectra: /eliza5/starprod/embedding/P05ic

Piminus_122_spectra: /eliza5/starprod/embedding/P05ic

Piplus_118_spectra: /eliza5/starprod/embedding/P05ic

Piplus_119_spectra: /eliza5/starprod/embedding/P05ic

Piplus_120_spectra: /eliza5/starprod/embedding/P05ic

Piplus_121_spectra: /eliza5/starprod/embedding/P05ic

Piplus_122_spectra: /eliza5/starprod/embedding/P05ic

Piplus_212_ftpcw: /eliza5/starprod/embedding/P05ic

Xi_101_strange: /eliza5/starprod/embedding/P05ic

Xi_102_strange: /eliza5/starprod/embedding/P05ic

Xi_103_strange: /eliza5/starprod/embedding/P05ic

Xi_104_strange: /eliza5/starprod/embedding/P05ic

Xi_105_strange: /eliza5/starprod/embedding/P05ic

Xi_110_strange: /eliza5/starprod/embedding/P05ic

Xi_111_strange: /eliza5/starprod/embedding/P05ic

Xi_112_strange: /eliza5/starprod/embedding/P05ic

Xi_113_strange: /eliza5/starprod/embedding/P05ic

Xi_114_strange: /eliza5/starprod/embedding/P05ic

Xi1530_405_strange: /eliza5/starprod/embedding/P05ic

Xi1530_406_strange: /eliza5/starprod/embedding/P05ic

Xi1530_407_strange: /eliza5/starprod/embedding/P05ic

Xi1530_408_strange: /eliza5/starprod/embedding/P05ic

P04ik:

dbar_101_200GeV: /auto/pdsfdv37/starprod/embedding/P04ik

dbar_102_200GeV: /auto/pdsfdv37/starprod/embedding/P04ik

dbar_103_200GeV: /auto/pdsfdv37/starprod/embedding/P04ik

dbar_104_200GeV: /auto/pdsfdv37/starprod/embedding/P04ik

dbar_105_200GeV: /auto/pdsfdv37/starprod/embedding/P04ik

dbar_106_200GeV: /auto/pdsfdv37/starprod/embedding/P04ik

dbar_107_200GeV: /auto/pdsfdv37/starprod/embedding/P04ik

dbar_108_200GeV: /auto/pdsfdv37/starprod/embedding/P04ik

dbar_109_200GeV: /auto/pdsfdv37/starprod/embedding/P04ik

dbar_110_200GeV: /auto/pdsfdv37/starprod/embedding/P04ik

Pi0_300_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_301_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_302_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_303_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_304_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_305_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_306_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_307_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_308_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_309_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_310_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_311_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_312_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_313_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_314_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_315_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_316_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_317_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_318_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

Pi0_319_1112996078: /auto/pdsfdv40/starprod/embedding/P04ik

dbar_101_200GeV: /eliza1/starprod/embedding/P04ik

Pi0_282_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_283_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_284_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_285_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_286_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_287_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_288_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_289_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_290_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_291_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_292_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_293_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_294_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_295_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_296_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_297_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_298_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_299_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_311_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_312_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_313_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_314_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_315_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_316_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_317_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_318_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_319_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_321_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_322_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_323_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_324_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_325_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_326_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_327_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_328_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_329_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_331_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_332_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_333_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_334_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_335_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_336_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_337_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_338_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_339_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_340_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_341_dalitz: /eliza1/starprod/embedding/P04ik

Pi0_341_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_342_dalitz: /eliza1/starprod/embedding/P04ik

Pi0_342_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_343_dalitz: /eliza1/starprod/embedding/P04ik

Pi0_343_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_344_dalitz: /eliza1/starprod/embedding/P04ik

Pi0_344_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_345_dalitz: /eliza1/starprod/embedding/P04ik

Pi0_345_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_346_dalitz: /eliza1/starprod/embedding/P04ik

Pi0_346_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_347_dalitz: /eliza1/starprod/embedding/P04ik

Pi0_347_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_348_dalitz: /eliza1/starprod/embedding/P04ik

Pi0_348_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_349_dalitz: /eliza1/starprod/embedding/P04ik

Pi0_349_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_350_dalitz: /eliza1/starprod/embedding/P04ik

Pi0_350_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_351_dalitz: /eliza1/starprod/embedding/P04ik

Pi0_351_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_352_dalitz: /eliza1/starprod/embedding/P04ik

Pi0_352_flatpt: /eliza1/starprod/embedding/P04ik

Pi0_353_dalitz: /eliza1/starprod/embedding/P04ik

Pi0_353_flatpt: /eliza1/starprod/embedding/P04ik