Calibration

STAR Calibrations

In addition to the child pages listed below:

Calibration Run preparation

This page is meant to organize information about the calibration preparations and steps required for each subdetector for upcoming runs.

- Run preparation Run VI (2006)

- Run preparation Run VII

- Run preparation Run VIII (2008)

Run 18 Calibration Datasets

Below are the calibration dataset considerations for isobar needs in Run 18:

- EMC

- emc-check runs once per fill (at the start)

- For the purpose of EMC pedestals and status tables

- 50k events

- TOF

- VPD

- Resolutions need to be calibrated (for trigger) and confirmed to be similar for both species

- TPC

- Laser runs

- For the purpose of calibrating TPC drift velocities

- Every few hours (as usual)

- Either dedicated laser runs, or included in physics runs

- ~5000 laser events

- GMT inclusion in a fraction of events

- For the purpose of understanding fluctuations in TPC SpaceCharge

- Trigger choice is not very important, as long as it includes TPX and is taken during most physics running

- Something around 100-200 Hz is sufficient (too much will cause dead time issues for the trigger)

- Vernier scans

- For the purpose of understanding backgrounds in the TPC that may be different between species

- Once for each species under typical operating conditions

- 4 incremental steps of collision luminosity, each step ~1 minute long and on the order of ~50k events (total = ~4 minutes)

- TPX, BEMC, BTOF must be included

- Minimum bias trigger with no (or wide) vertex z cut

- Low luminosity fill IF a TPC shorted field cage ring occurs

- Only one species needed (unimportant which)

- Minimum bias trigger with no (or wide) vertex z cut

- ~1M events

- ZDC coincidence rates below 3 kHz

- Old ALTRO thresholds run

- For the purpose of TPC dE/dx understanding

- Only one species needed (unimportant which)

- ~2M events

- Could be at the end of a fill, or any other convenient time during typical operations

- Magnetic field flipped to A ("forward") polarity before AuAu27 data

- For the purpose of acquiring sufficient cosmic ray data with both magnetic fields to understand alignment of new iTPC sector

-Gene

Run preparation Run VI (2006)

This page is meant to organize information about the calibration preparations and steps required for each subdetector for upcoming runs. Items with an asterisk (*) need to be completed in advance of data.

For the run in winter 2006, the plan is to take primarily pp data. This may lead to different requirements than in the past.

- TPC

- * Code development for 1 Hz scalers.

- * Testing of T0/Twist code with pp data.

- * Survey alignment information into DB.

- Drift velocity from lasers into DB (automated).

- T0 calibration as soon as data starts.

- Twist calibration as soon as data starts (for each field).

- SpaceCharge and GridLeak (SpaceCharge and GridLeak Calibration How-To Guide) as soon as T0/Twist calibrated (for each field).

- Would like a field flip to identify origins of offsets in SpaceCharge vs. scalers.

- dEdx: final calibration after run ends by sampling over the whole run period, but initial calibration can be done once TPC momentum is well-calibrated (after distortion corrections).

- FTPC

- HV calibration performed at start of data-taking.

- Temperatures into DB (automated)

- Rotation alignment of each FTPC done for each field (needs calibrated vertex from TPC[+SVT][+SSD]). There was concern about doing this calibration with the pp data - status???

- SVT

- Steps for pp should be the same as heavy ion runs, but more data necessary.

- Self-alignment (to be completed January 2006)

- Requires well-calibrated TPC.

- Requires a few million events (a week into the run?)

- Would like field flip to discriminate between SVT/SSD alignment vs. TPC distortions.

- SSD

- * Code development in progress (status???)

- Requires well-calibrated TPC.

- EEMC

- EEMC initial setting & monitoring during data taking relies on prompt and fully automatic MuDst EzTree production for all minbias-only fast-only runs. Assume fast offline MuDsts exist on disk for 1 week.

- Initial settings for HV: 500k minbias fast-triggered events will give slopes necessary to adjust relative gains. Same 60 GeV E_T maximum scale as in previous years.

- Pedestals from 5k minbias events, once per fill.

- Stability of towers from one 200k minbias run per fill.

- Highly prescaled minbias and zerobias events in every run for "general monitoring" (e.g. correlated pedestal shift)

- Offline calibrations unnecessary for production (can be done on the MuDst level).

- "Basic" offline calibration from MIP response in 5M minbias fast events (taken a few weeks into the run)

- "Final" offline calibration from pi0 (or other TBD) signal requires "huge" statistics of EHT and EJP triggers to do tower-by-tower (will need the full final dataset).

- * Calibration codes exist, but are scattered. Need to be put in CVS with consistent paths and filenames.

- BEMC

- * LED runs to set HV for 60 GeV E_T maximum, changed from previous years (status???).

- Online HV calibration from 300k minbias events (eta ring by eta ring) - requires "full TPC reconstruction".

- MuDsts from calibration runs feed back into run settings (status???).

- Pedestals from low multiplicity events from the event pool every 24 hours.

- Offline calibrations unnecessary for production (can be done on the MuDst level).

- "Final" offline tower-by-tower calibration from MIPs and electrons using several million events.

- TOF

- upVPD upgrade (coming in March 2006) for better efficiency and start resolution

- Need TPC momentum calibrated first.

- Requires several million events, less with upVPD (wait for upVPD???)

Run preparation Run VII

There is some question as to whether certain tasks need to be done this year because the detector was not moved during the shutdown period. Any decision to skip such a task should be justified first!

- TPC

- Survey alignment information into DB (appears to be no survey data for TPC this year)

- High stats check of laser drift velocity calibration once there's gas in the TPC: 30k laser events with and without B field.

- Check of reversed GG cable on sector 8 (lasers) once there's gas in the TPC: same laser run as above

- Drift velocity from laser runs (laser runs taken once every 3-4 hours, ~2-3k events, entire run) into DB (automated); check that it's working

- T0 calibration, new method from laser runs (same laser runs as above).

- Twist calibration as soon as data starts: ~100k events, preferably central/high multiplicity, near start of running for each field

- SpaceCharge and GridLeak (SpaceCharge and GridLeak Calibration How-To Guide) as soon as T0/Twist calibrated: ~100k events from various luminosities, for each field.

- BBC scaler study for correlation with azimuthally asymmetric backgrounds in TPC: needs several days of generic data.

- Zerobias run with fast detectors to study short time scale fluctuations in luminosity (relevant for ionization distortions): needs a couple minutes of sequential, high rate events taken at any time.

- Need a field flip to identify origins of offsets in SpaceCharge vs. scalers as well as disentangling TPC distortions from silicon alignment.

- dEdx: final calibration after run ends by sampling over the whole run period, but initial calibration can be done once TPC momentum is well-calibrated (after distortion corrections).

- FTPC

- HV calibration performed at start of data-taking (special HV runs).

- Temperatures into DB (automated)

- Rotation alignment of each FTPC done for each field (needs calibrated vertex from TPC[+SVT][+SSD]): generic collision data

- SSD

- Pulser runs (for initial gains and alive status?)

- Initial alignment can be done using roughly-calibrated TPC: ~100k minbias events.

- P/N Gain-matching (any special run requirements?)

- Alignment, needs fully-calibrated TPC: 250k minbias events from one low luminosity (low TPC distortions/background/occupancy/pile-up) fill, for each field, +/-30cm vertex constraint; collision rate preferably below 1kHz.

- SVT

- Temp oscillation check with lasers: generic data once triggers are set.

- Initial alignment can be done using roughly-calibrated TPC+SSD: ~100k minbias events.

- Alignment, needs fully-calibrated TPC: 250k minbias events from one low luminosity fill (see SSD).

- End-point T0 + drift velocity, needs fully-calibrated SSD+TPC: same low luminosity runs for initial values, watched during rest of run.

- Gains: same low luminosity runs.

- EEMC

- Timing scan of all crates: few hours of beam time, ~6 minb-fast runs (5 minutes each) for TCD phase of all tower crates, another 6 minbias runs for the timing of the MAPMT crates, 2 days analysis

- EEMC initial setting & monitoring during data taking: requests to process specific data will be made as needed during the run.

- Initial settings for HV: 200k minbias fast-triggered events will give slopes necessary to adjust relative gains, 2 days analysis

- Pedestals (for offline DB) from 5k minbias events, once per 5 hours

- Stability of towers from one 200k minbias-fast run per fill

- "General monitoring" (e.g. correlated pedestal shift) from highly prescaled minbias and zerobias events in every run.

- Beam background monitoring from highly prescaled EEMC-triggered events with TPC at the beginning of each fill.

- Expect commissioning of triggers using EMC after one week of collisions

- Offline calibrations unnecessary for production (can be done on the MuDst level).

- "Basic" offline calibration from MIP response in 5M minbias fast events taken a few weeks into the run

- "Final" offline calibration from pi0 (or other TBD) signal requires "huge" statistics of EHT and EJP triggers to do tower-by-tower (still undone for previous runs).

- Calibration codes exist, but are scattered. Need to be put in CVS with consistent paths and filenames (status?)

- BEMC

- Timing scan of all crates

- Online HV calibration of towers - do for outliers and new PMTs/crates; needed for EMC triggering. Needs ~5 minutes of minbias fast-triggered events (eta ring by eta ring) at beginning of running (once a day for a few days) - same runs as for EEMC.

- Online HV calibration of preshower - matching slopes. Not done before, will piggyback off other datasets.

- Pedestals from low multiplicity events from the event pool every 24 hours.

- Offline calibrations unnecessary for production (can be done on the MuDst level).

- "Final" offline tower-by-tower calibration from MIPs and electrons using several million events

- upVPD/TOF

- upVPD (calibration?)

- No TOF this year.

Run preparation Run VIII (2008)

This page is meant to organize information about the calibration preparations and steps required for each subdetector for upcoming runs.

Previous runs:

Red means that nothing has been done for this (yet), or that this needs to continue through the run.

Blue means that the data has been taken, but the calibration task is not completed yet.

Black indicates nothing (more) remaining to be done for this task.

- TPC

- Survey alignment information into DB (appears to be no survey data for TPC this year)

- Drift velocity from laser runs (laser runs taken once every 3-4 hours, ~2-3k events, entire run) into DB (automated); check that it's working

- T0 calibration, using vertex-matching (~500k events, preferably high multiplicity, once triggers are in place).

- Twist calibration as soon as data starts: same data

- SpaceCharge and GridLeak (SpaceCharge and GridLeak Calibration How-To Guide) as soon as T0/Twist calibrated: ~500k events from various luminosities, for each field.

- BBC scaler study for correlation with azimuthally asymmetric backgrounds in TPC: needs several days of generic data.

- Zerobias run with fast detectors to study short time scale fluctuations in luminosity (relevant for ionization distortions): needs a couple minutes of sequential, high rate events taken at any time.

- dEdx: final calibration after run ends by sampling over the whole run period, but initial calibration can be done once TPC momentum is well-calibrated (after distortion corrections).

- TPX

- ???

- FTPC

- HV calibration performed at start of data-taking (special HV runs).

- Temperatures into DB (automated)

- Rotation alignment of each FTPC done for each field (needs calibrated vertex from TPC[+SVT][+SSD]): generic collision data

- EEMC

- Timing scan of all crates: few hours of beam time, ~6 fast runs (5 minutes each) for TCD phase of all tower crates

- EEMC initial setting & monitoring during data taking: requests to process specific data will be made as needed during the run.

- Initial settings for HV: 500k minbias fast-triggered events will give slopes necessary to adjust relative gains, 2 days analysis

- Pedestals (for offline DB) from 5k minbias events, once per fill

- "General monitoring" (e.g. correlated pedestal shift) from highly prescaled minbias and zerobias events in every run.

- Beam background monitoring from highly prescaled EEMC-triggered events with TPC at the beginning of each fill.

- Expect commissioning of triggers using EMC after one week of collisions

- Offline calibrations unnecessary for production (can be done on the MuDst level).

- "Basic" offline calibration from MIP response in 5M minbias fast events taken a few weeks into the run

- "Final" offline calibration from pi0 (or other TBD) signal requires "huge" statistics of EHT and EJP triggers to do tower-by-tower.

- Calibration codes exist, but are scattered. Need to be put in CVS with consistent paths and filenames (status?)

- BEMC

- Timing scan of all crates

- Online HV calibration of towers - do for outliers and new PMTs/crates; needed for EMC triggering. Needs ~5 minutes of minbias fast-triggered events (eta ring by eta ring) at beginning of running (once a day for a few days) - same runs as for EEMC.

- Online HV calibration of preshower - matching slopes. (same data).

- Pedestals from low multiplicity events from the event pool every 24 hours.

- Offline calibrations unnecessary for production (can be done on the MuDst level).

- "Final" offline tower-by-tower calibration from MIPs and electrons using several million events

- PMD

- Hot Cells (~100k generic events from every few days?)

- Cell-by-cell gains (same data)

- SM-by-SM gains (same data)

- VPD/TOF

- T-TOT (~3M TOF-triggered events)

- T-Z (same data)

Calibration Schedules

The listed dates should be considered deadlines for production readiness. Known issues with any of the calibrations by the deadline should be well documented by the subsystems.

- Run 15: projected STAR physics operations end date of 2015-06-19 (CAD) 2015-06-22

- pp200

- tracking: 2015-07-22

- all: 2015-08-22

- pAu200

- tracking: 2015-08-22

- all: 2015-09-05

- pAl200

- tracking: 2015-09-22

- all: 2015-10-06

Calibration topics by dataset

Focus here will be on topics of note by dataset.

Run 12 CuAu200

Regarding the P14ia Preview Production of Run 12 CuAu200 from early 2014:

A check-list of observables and points to consider to help understand analyses' sensitivity to non-final calibrations

To unambiguously see issues due to mis-calibration of the TPC, stringent identification of triggered-event tracks is necessary. Pile-up tracks are expected to be incorrect in many ways, and they constitute an increasingly large fraction of TPC tracks as luminosity grows, so their inclusion can produce luminosity dependences that look like mis-calibrations but are not.

- TPC dE/dx PID (not calibrated)

- differences between the means and widths of the real-data dE/dx peaks and the nsigma values provided in the MuDst

- variability of these differences with time

- TPC alignment (old one used)

- sector-by-sector variations in charge-separated signed DCAs and momentum spectra (e.g. h-/h+ pT spectra) that are time- and luminosity-independent

- differences in charge-separated invariant masses from expectations that are time- and luminosity-independent

- any momentum effects (including invariant masses) grow with momentum: delta(pT) is proportional to q*pT^2

- alternatively, and perhaps more directly, delta(q/pT) effects are constant, and one could look at charge-separated 1/pT from sector to sector

- TPC SpaceCharge & GridLeak (preliminary calibration)

- sector-by-sector variations in charge-separated DCAs and momentum spectra that are luminosity-dependent

- possible track splitting between TPC pad rows 13 and 14 + possible track splitting at z=0, in the radial and/or azimuthal direction

- differences in charge-separated invariant masses from expectations that are luminosity-dependent

- any momentum effects (including invariant masses) grow with momentum: delta(pT) is proportional to q*pT^2

- alternatively, and perhaps more directly, delta(q/pT) effects are constant, and one could look at charge-separated 1/pT from sector to sector

- TPC T0 & drift velocities (preliminary calibration)

- track splitting at z=0, in the z direction

- splitting of primary vertices into two close vertices (and subsequent irregularities in primary track event-wise distributions)

- TOF PID (VPD not calibrated, BTOF calibration from Run 12 UU used) [particularly difficult to disentangle from TPC calibration issues]

- not expected to be much of an issue, as the "startless" mode of using BTOF was forced (no VPD) and the calibration used for BTOF is expected to be reasonable

- broadening of, and differences in, mass^2 peak positions from expectations are more likely due to TPC issues (particularly if charge-dependent, as BTOF mis-calibrations should show no charge-sign dependence)

- while TOF results may not be the best place to identify TPC issues, it is worth noting that BTOF matching is beneficial for removing pile-up tracks from studies
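The two momentum statements in this check-list (delta(pT) proportional to q*pT^2, delta(q/pT) constant) are related by a change of variables. A short sketch, assuming unit-charge tracks ($q = \pm 1$, so $1/q = q$) and writing $\kappa = q/p_T$:

$$\frac{\partial p_T}{\partial \kappa} = -\frac{q}{\kappa^2} = -\frac{p_T^2}{q}
\quad\Rightarrow\quad
\delta p_T \approx -\frac{p_T^2}{q}\,\delta\kappa = -q\,p_T^2\,\delta\!\left(\frac{q}{p_T}\right)$$

So a constant offset in $q/p_T$ gives a momentum shift that grows as $p_T^2$ and flips sign with charge, which is why comparing charge-separated $1/p_T$ can be the more direct check.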

Docs

Miscellaneous calibration-related documents

Intrinsic resolution in a tracking element

Foreword: This has probably been worked out in a textbook somewhere, but I wanted to write it down for my own sake. This is a rewrite (hopefully clearer, with slightly better notation) of Appendix A of my PhD thesis (I don't think it was well written there). -Gene

_______________________

Let's establish a few quantities:

- $E_{intr}$ : error on the measurement by the element in question

- $\sigma_{intr}^2 = \langle E_{intr}^2 \rangle$ : intrinsic resolution of the element, and its relation to an ensemble of errors in measurement

- $E_{proj}$ : error on the track projection to that element (excluding the element from the track fit)

- $\sigma_{proj}^2 = \langle E_{proj}^2 \rangle$ : resolution of the track projection to an element, and its relation to an ensemble of errors in track projections

- $E_{track}$ : error on track fit at an element including the element in the fit

- $R_{incl} = E_{intr} - E_{track}$ : residual difference between the measurement and the inclusive track fit

- $\sigma_{incl}^2 = \langle (E_{intr} - E_{track})^2 \rangle$ : resolution from the inclusive residuals

- $R_{excl} = E_{intr} - E_{proj}$ : residual difference between the measurement and the exclusive track fit

- $\sigma_{excl}^2 = \langle (E_{intr} - E_{proj})^2 \rangle$ : resolution from the exclusive residuals

Our goal is to determine $\sigma_{intr}$ given that we can only observe $\sigma_{incl}$ and $\sigma_{excl}$.

To that end, we utilize a guess, $\sigma'_{intr}$, and write down a reasonable estimation of $E_{track}$ using a weighted average of $E_{intr}$ and $E_{proj}$, where the weights are $w_{proj} = 1/\sigma_{proj}^2$ and $w_{intr} = 1/\sigma'^2_{intr}$:

$$E_{track} = \frac{E_{intr}/\sigma'^2_{intr} + E_{proj}/\sigma_{proj}^2}{1/\sigma'^2_{intr} + 1/\sigma_{proj}^2}$$

$$= \frac{\sigma_{proj}^2 E_{intr} + \sigma'^2_{intr} E_{proj}}{\sigma'^2_{intr} + \sigma_{proj}^2}$$

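Substituting this, we find...

$$\sigma_{incl}^2 = \langle E_{intr}^2 \rangle - 2 \langle E_{intr} E_{track} \rangle + \langle E_{track}^2 \rangle$$

$$= \sigma_{intr}^2 - 2 \left\langle E_{intr} \, \frac{\sigma_{proj}^2 E_{intr} + \sigma'^2_{intr} E_{proj}}{\sigma'^2_{intr} + \sigma_{proj}^2} \right\rangle + \left\langle \left( \frac{\sigma_{proj}^2 E_{intr} + \sigma'^2_{intr} E_{proj}}{\sigma'^2_{intr} + \sigma_{proj}^2} \right)^2 \right\rangle$$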

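Dropping terms of $\langle E_{intr} E_{proj} \rangle$ (the projection excludes the element, so the two errors are uncorrelated), replacing terms of $\langle E_{proj}^2 \rangle$ and $\langle E_{intr}^2 \rangle$ with $\sigma_{proj}^2$ and $\sigma_{intr}^2$ respectively, and multiplying through such that all terms on the right-hand side of the equation have the denominator $(\sigma'^2_{intr} + \sigma_{proj}^2)^2$, we find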

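$$\sigma_{incl}^2 = \frac{\sigma_{intr}^2 \sigma'^4_{intr} + \sigma'^4_{intr} \sigma_{proj}^2}{(\sigma'^2_{intr} + \sigma_{proj}^2)^2}$$

$$= \frac{\sigma'^4_{intr} (\sigma_{intr}^2 + \sigma_{proj}^2)}{(\sigma'^2_{intr} + \sigma_{proj}^2)^2}$$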

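We can substitute for $\sigma_{proj}^2$ using $\sigma_{excl}^2 = \sigma_{intr}^2 + \sigma_{proj}^2$ (again dropping the $\langle E_{intr} E_{proj} \rangle$ cross term) and take the square root:

$$\sigma_{incl} = \frac{\sigma'^2_{intr} \, \sigma_{excl}}{\sigma'^2_{intr} + \sigma_{excl}^2 - \sigma_{intr}^2}$$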

And solving for $\sigma_{intr}^2$ we find:

$$\sigma_{intr} = \sqrt{\sigma_{excl}^2 - \sigma'^2_{intr} \left[ (\sigma_{excl} / \sigma_{incl}) - 1 \right]}$$

This is an estimator of $\sigma_{intr}$. Ideally, $\sigma_{intr}$ and $\sigma'_{intr}$ should be the same. One can iterate a few times, starting with a good guess for $\sigma'_{intr}$ and then replacing it in later iterations with the $\sigma_{intr}$ found from the previous iteration, until the two are approximately equal.
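The estimator and its iteration can be tried out in a toy model. This is a sketch, not the procedure used for any STAR detector: it assumes Gaussian, uncorrelated E_intr and E_proj, models the inclusive fit as the weighted average from the derivation (with σ_proj known), and uses hypothetical function names. In this idealized model R_incl = σ'²/(σ'² + σ_proj²) · R_excl track by track, so the estimator returns √(σ_excl² − σ_proj²) for any guess and the loop settles after one refit; with real track fits the choice of guess matters more. At convergence (σ' = σ_intr) the derivation also implies σ_intr² = σ_incl · σ_excl, which the example prints as a cross-check.

```python
import math
import random


def estimate_sigma_intr(sigma_excl, sigma_incl, sigma_guess):
    """sigma_intr = sqrt(sigma_excl^2 - sigma'^2 * (sigma_excl/sigma_incl - 1)).

    sigma_guess must be the sigma'_intr used to weight the element in the
    inclusive fit that produced sigma_incl.
    """
    arg = sigma_excl ** 2 - sigma_guess ** 2 * (sigma_excl / sigma_incl - 1.0)
    if arg <= 0.0:
        raise ValueError("non-positive argument under the square root")
    return math.sqrt(arg)


def rms(values):
    """Root-mean-square of a list (the toy errors have zero mean)."""
    return math.sqrt(sum(v * v for v in values) / len(values))


def toy_residual_widths(sigma_intr, sigma_proj, sigma_guess, n=100_000, seed=1):
    """Hypothetical toy: returns (sigma_incl, sigma_excl) for a given guess."""
    rng = random.Random(seed)  # fixed seed: same toy errors in every refit
    w_intr = 1.0 / sigma_guess ** 2
    w_proj = 1.0 / sigma_proj ** 2
    incl, excl = [], []
    for _ in range(n):
        e_intr = rng.gauss(0.0, sigma_intr)
        e_proj = rng.gauss(0.0, sigma_proj)
        # inclusive "fit": weighted average of measurement and projection
        e_track = (w_intr * e_intr + w_proj * e_proj) / (w_intr + w_proj)
        incl.append(e_intr - e_track)  # R_incl
        excl.append(e_intr - e_proj)   # R_excl
    return rms(incl), rms(excl)


def iterate_sigma_intr(sigma_intr_true, sigma_proj, first_guess,
                       rel_tol=1e-6, max_iter=20):
    """Refit with the latest estimate as sigma'_intr until guess and estimate agree."""
    guess = first_guess
    for _ in range(max_iter):
        sigma_incl, sigma_excl = toy_residual_widths(sigma_intr_true, sigma_proj, guess)
        estimate = estimate_sigma_intr(sigma_excl, sigma_incl, guess)
        if abs(estimate - guess) <= rel_tol * guess:
            return estimate, sigma_incl, sigma_excl
        guess = estimate
    raise RuntimeError("no convergence within max_iter iterations")


if __name__ == "__main__":
    est, incl, excl = iterate_sigma_intr(0.5, 0.8, first_guess=0.3)
    print(f"sigma_intr ~ {est:.4f}, sqrt(incl*excl) = {math.sqrt(incl * excl):.4f}")
```

The fixed seed is a deliberate choice: every refit sees the same toy errors, so changes between iterations come only from the new guess and not from statistical fluctuations.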

_______

-Gene

SVT Calibrations

SVT Self-Alignment

Using tracks fit with SVT points plus a primary vertex alone, we can self-align the SVT using residuals to the fits. This document explains how this can be done; the method works only when the SVT is already rather well aligned and only small-scale alignment corrections remain. The technique explained herein also allows for calibration of the hybrid drift velocities.

TPC Calibrations

TPC Calibration & Data-Readiness Tasks:

Notes:

* "Run", with a capital 'R', refers to a year's Run period (e.g. Run 10)

* Not all people who have worked on various tasks are listed as they were recalled only from (faulty) memory and only primary persons are shown. Corrections and additions are welcome.

- TPC Survey Alignment

- Should be done each time the TPC may be moved (e.g. STAR is rolled out and back into the WAH) (not done in years)

- Must be done before magnet endcaps are moved in

- Past workers: J. Castillo (Runs 3,4), E. Hjort (Runs 5,6), Y. Fisyak (Run 14)

- TPC Pad Pedestals

- Necessary for online cluster finding and zero suppression

- Uses turned-down anode HV

- Performed by DAQ group frequently during Run

- Past workers: A. Ljubicic

- TPC Pad Relative Gains & Relative T0s

- Necessary for online cluster finding and zero suppression

- Uses pulsers

- Performed by DAQ group occasionally during Run

- Past workers: A. Ljubicic

- TPC Dead RDOs

- Influences track reconstruction

- Monitored by DAQ

- Past workers: A. Ljubicic

- TPC Drift Velocities

- Monitor continually during Run

- Currently calibrated from laser runs and uploaded to the DB automatically

- Past workers: J. Castillo (Runs 3,4), E. Hjort (Runs 5,6), A. Rose (Run 7), V. Dzhordzhadze (Run 8), S. Shi (Run 9), M. Naglis (Run 10), G. Van Buren (Run 12)

- TPC Anode HV

- Trips should be recorded so that the affected periods can be avoided during reconstruction

- Dead regions may influence track reconstruction

- Reduced voltage will influence dE/dx

- Dead/reduced voltage near inner/outer boundary will affect GridLeak

- Past workers: G. Van Buren (Run 9)

- TPC Floating Gated Grid Wires

- Wires no longer connected to voltage cause minor GridLeak-like distortions at one GG polarity

- Currently known to be two wires in Sector 3 (seen in reversed polarity), and two wires in sector 8 (corrected with reversed polarity)

- Past workers: G. Van Buren (Run 5)

- TPC T0s

- Potentially: global, sector, padrow

- Could be different for different triggers

- Once per Run

- Past workers: J. Castillo (Runs 3,4), E. Hjort (Runs 5,6), G. Webb (Run 9), M. Naglis (Runs 10,11,12), Y. Fisyak (Run 14)

- TPC T0 Dependence on Pulse Height

- Dependence of reconstructed time on pulse height seen only in Run 9 so far (un-shaped pulses)

- Past workers: G. Van Buren (Run 9)

- TPC Field Cage Shorts

- Known shorts can be automatically monitored and uploaded to the DB during Run

- New shorts need determination of location and magnitude, and may require low luminosity data

- Past workers: G. Van Buren (Runs 4,5,6,7,8,9,10)

- TPC Alignment

- Two parts: Inner/Outer Alignment, and Super-Sector Alignment

- Requires low luminosity data

- In recent years, done at least once per Run, and per magnetic field setting (perhaps not necessary)

- Past workers: B. Choi (Run 1), H. Qiu (Run 7), G. Van Buren (Run 8), G. Webb (Run 9), L. Na (Run 10), Y. Fisyak (Run 14)

- TPC Clocking (Rotation of east half with respect to west)

- Best done with low luminosity data

- Calibration believed to be infrequently needed (not done in years)

- Past workers: J. Castillo (Runs 3,4), Y. Fisyak (Run 14)

- TPC IFC Shift

- TPC Twist (ExB) (Fine Global Alignment)

- Best done with low luminosity data

- At least once per Run, and per magnetic field setting

- Past workers: J. Castillo (Runs 3,4), E. Hjort (Runs 5,6), A. Rose (Runs 7,8,9), Z. Ahammed (Run 10), R. Negrao (Run 11), M. Naglis (Run 12), J. Campbell (Runs 13,14), Y. Fisyak (Run 14)

- Done with low (no) luminosity data from known distortions

- Done once (but could benefit from checking again)

- Past workers: G. Van Buren (Run 4), M. Mustafa (Run 4 repeated)

- TPC SpaceCharge & GridLeak

- At least once per Run, and per beam energy & species, and per magnetic field setting

- Past workers: J. Dunlop (Runs 1,2), G. Van Buren (Runs 4,5,6,7,8-dAu,12-pp500), H. Qiu (Run 8-pp), J. Seele (Run 9-pp500), G. Webb (Run 9-pp200), J. Zhao (Run 10), A. Davila (Runs 11,12-UU192,pp200,pp500), D. Garand (Run 12-CuAu200), M. Vanderbroucke (Run 13), M. Posik (Run 12-UU192 with new alignment), P. Federic (Run 12-CuAu200 R&D)

- TPC dE/dx

- Once per Run, and per beam energy & species, and per magnetic field setting

- Past workers: Y. Fisyak (Runs 1,2,3,4,5,6,7,9), P. Fachini (Run 8), L. Xue (Run 10), Y. Guo (Run 11), M. Skoby (Runs 9-pp200,12-pp200,pp500), R. Haque (Run 12-UU192,CuAu200)

- TPC Hit Errors

- Once per Run, and per beam energy & species, and per magnetic field setting

- Past workers: V. Perev (Runs 5,7,9), M. Naglis (Runs 9-pp200,10,11,12-UU193), R. Witt (Run 12-pp200,pp500, Run 13)

- TPC Padrow 13 and Padrow 40 static distortions

- Once ever

- Past workers: Bum Choi (Run 1), J. Thomas (Runs 1,18,19), G. Van Buren (Runs 18,19), I. Chakaberia (Runs 18,19)

To better understand the effect of distortions on momentum measurements in the TPC, see the attached sagitta.pdf file, which shows the relationship between a track's sagitta and its transverse momentum.
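For reference, the standard relations behind such a plot are R[m] = pT[GeV/c] / (0.2998 B[T]) for a unit-charge track and, for a chord of length L, a sagitta s = R − √(R² − L²/4) ≈ L²/(8R), so s ∝ 1/pT. A fixed sagitta error δs therefore gives δpT/pT ≈ −δs/s ∝ pT, that is δpT ∝ pT². The sketch below is not taken from sagitta.pdf; the 0.5 T field, 1.5 m chord, and 100 μm sagitta change in the usage lines are illustrative values only, and the function names are hypothetical.

```python
import math

# Standard relation R[m] = pT[GeV/c] / (0.2998 * B[T]) for a unit-charge track.
GEV_PER_TESLA_METER = 0.299792458


def radius_of_curvature_m(pt_gev, b_tesla):
    """Radius of curvature (m) of a unit-charge track in a solenoidal field."""
    return pt_gev / (GEV_PER_TESLA_METER * b_tesla)


def sagitta_m(pt_gev, b_tesla, chord_m):
    """Exact sagitta (m) of a circular arc with the given chord length."""
    r = radius_of_curvature_m(pt_gev, b_tesla)
    half_chord = 0.5 * chord_m
    if half_chord > r:
        raise ValueError("chord exceeds the track diameter (track curls up)")
    return r - math.sqrt(r * r - half_chord * half_chord)


def fractional_pt_shift(pt_gev, b_tesla, chord_m, delta_sagitta_m):
    """Small-shift approximation: s ~ L^2/(8R) ~ 1/pT, so dpT/pT ~ -ds/s.

    A larger sagitta means a more strongly curved track, hence a lower pT.
    """
    return -delta_sagitta_m / sagitta_m(pt_gev, b_tesla, chord_m)


if __name__ == "__main__":
    B, L = 0.5, 1.5  # illustrative values only
    for pt in (1.0, 10.0):
        s = sagitta_m(pt, B, L)
        shift = fractional_pt_shift(pt, B, L, 100e-6)  # 100 um sagitta change
        print(f"pT={pt:5.1f} GeV/c  sagitta={100 * s:.3f} cm  dpT/pT={100 * shift:+.2f}%")
```

The same 100 μm change is a ten times larger fractional effect at 10 GeV/c than at 1 GeV/c, which is why distortions at the ~100 μm level still matter for high-pT tracks.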

Miscellaneous TPC calibration notes

The log file for automated drift velocity calculations is at ~starreco/AutoCalib.log.

Log files for fast offline production are at /star/rcf/prodlog/dev/log/daq.

The CVS area for TPC calibration related scripts, macros, etc., is StRoot/macros/calib.

Padrow 13 and Padrow 40 static distortions

These two distortion corrections focus on static non-uniformities in the electric field in the gating grid region of the TPC, radially between the inner and outer sectors. This region lacks gating grid wires where the subsector structures meet, which allows the equipotential lines to bend and creates radial electric field components. In both cases, the distorted-field region is localized near the endcap and spans only a small radial extent, but it distorts all electrons drifting through that small radial region (essentially equally for all drift distances), affecting the signal only on a few padrows on each side of the inner/outer boundary.

Padrow 13 Distortion

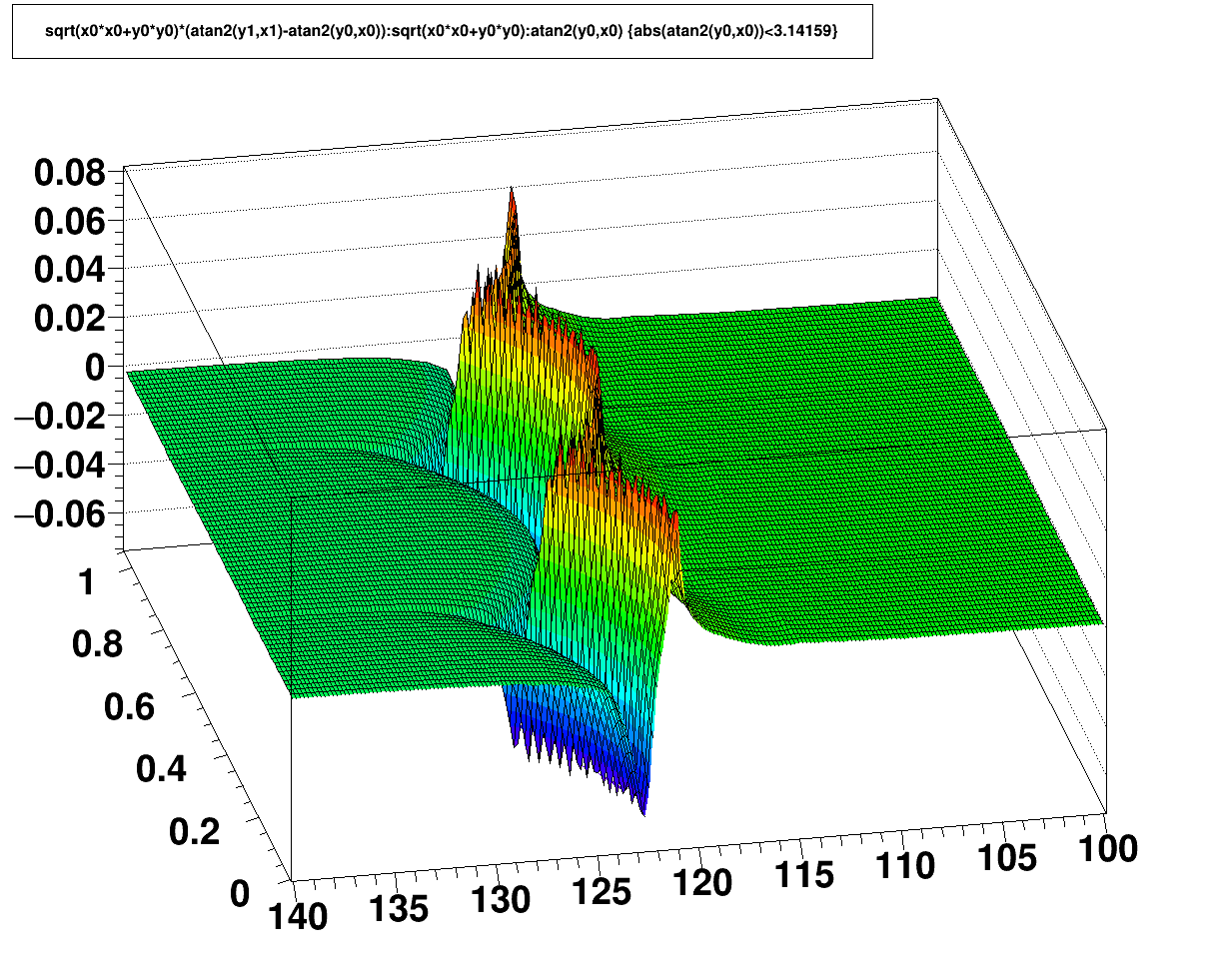

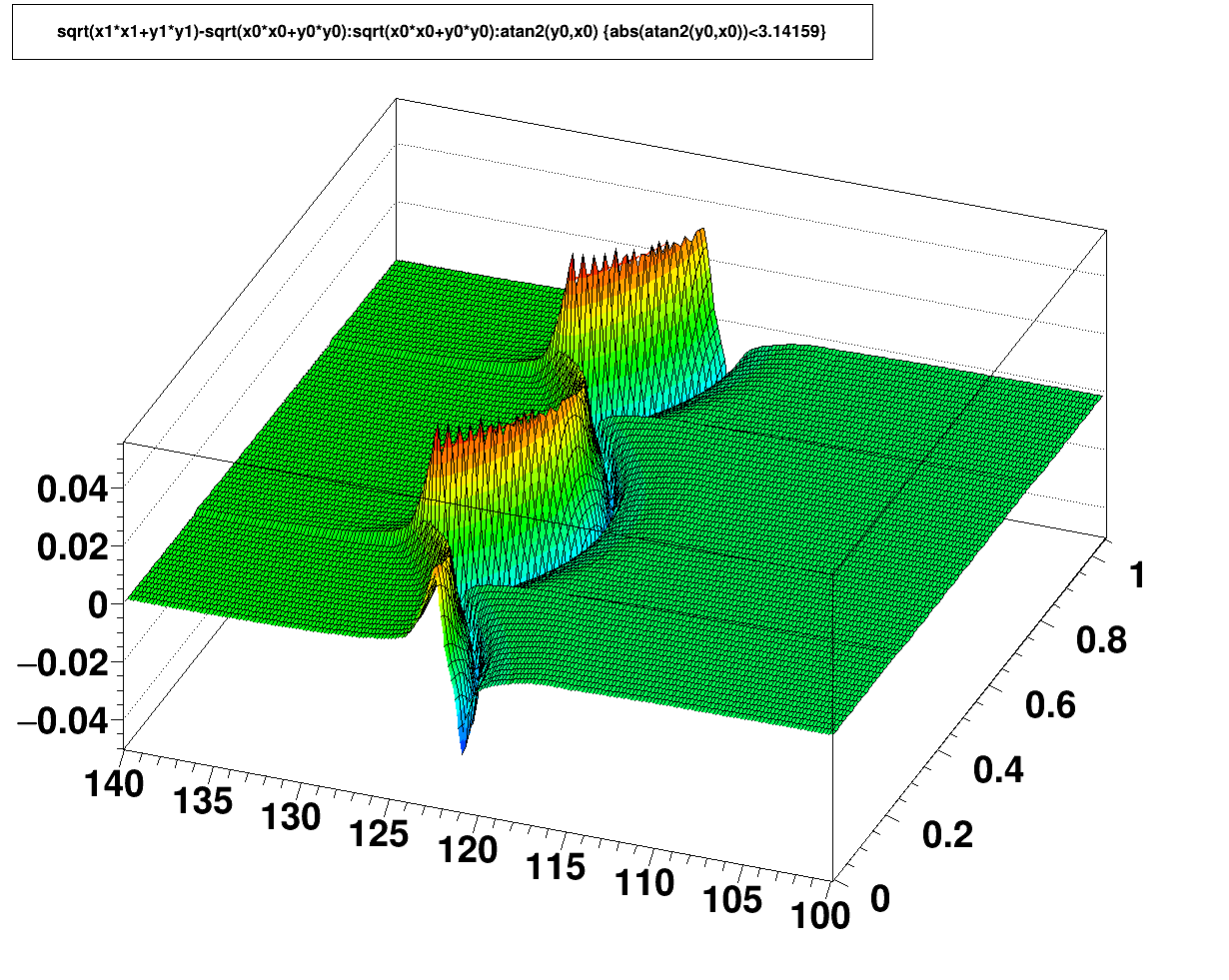

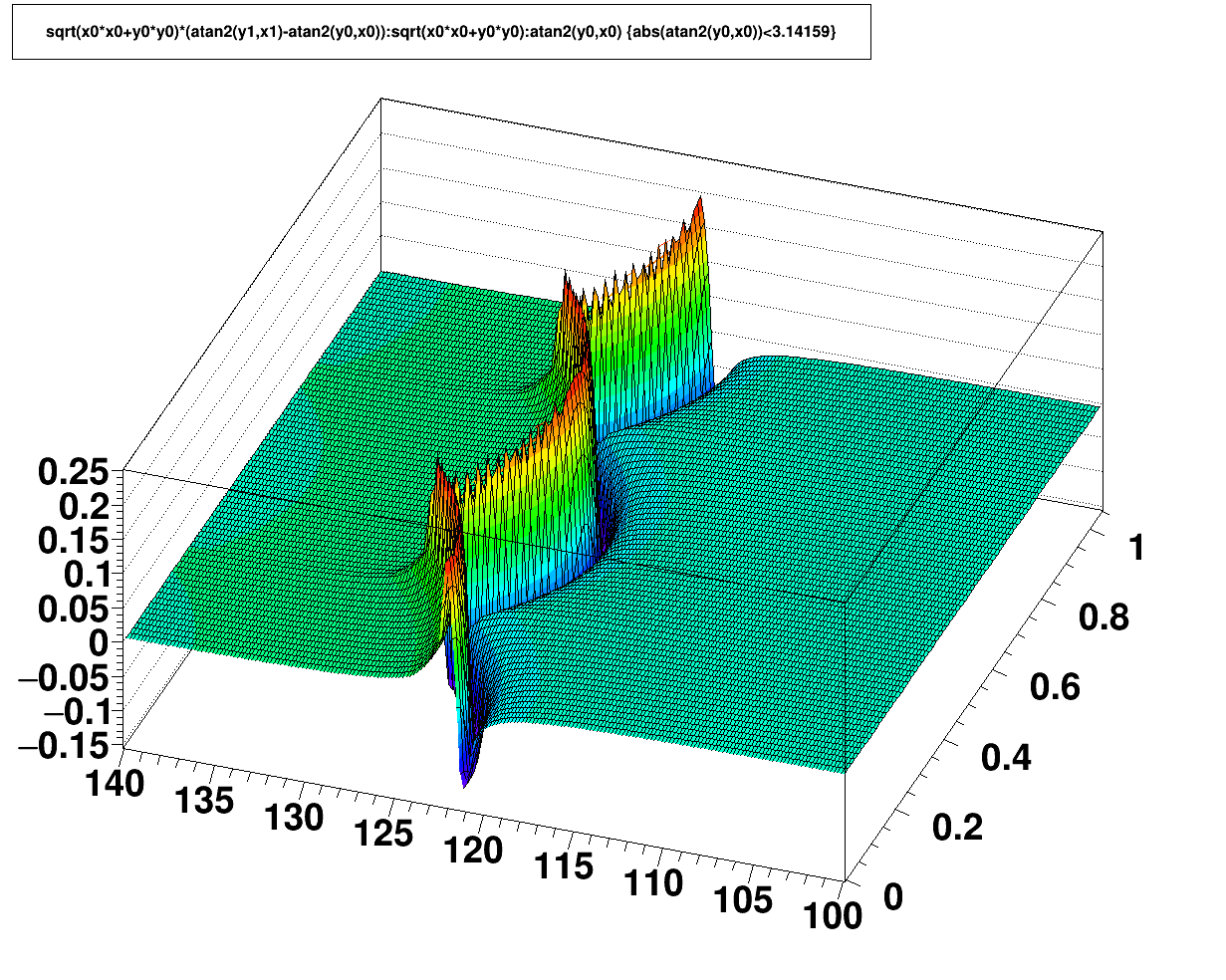

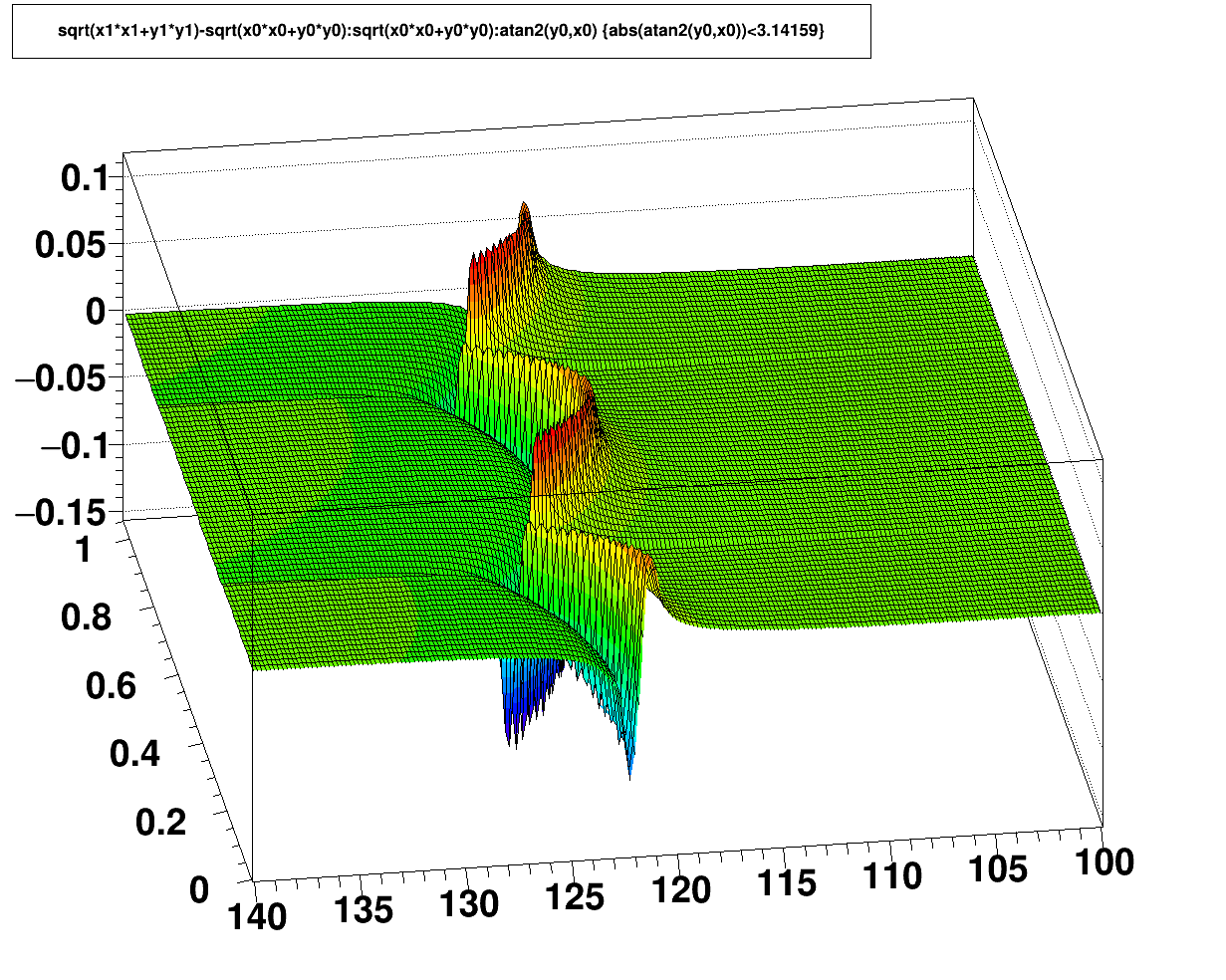

For the original TPC structure, there was simply nothing in the gap between the inner and outer sectors. More about the gap structure can be learned from some of the GridLeak documentation (GridLeak differs in that it is dynamic, varying with the ionization in the TPC). This static distortion was observed in the early operation of STAR when luminosities were low, well before the GridLeak distortion was ever observed. It was modeled as an offset potential on a small strip of the TPC drift field boundary, with the offset equal to a scale factor times the gating grid voltage, where the scale factor was calibrated from fits to the data. Below are the related distortion correction maps.

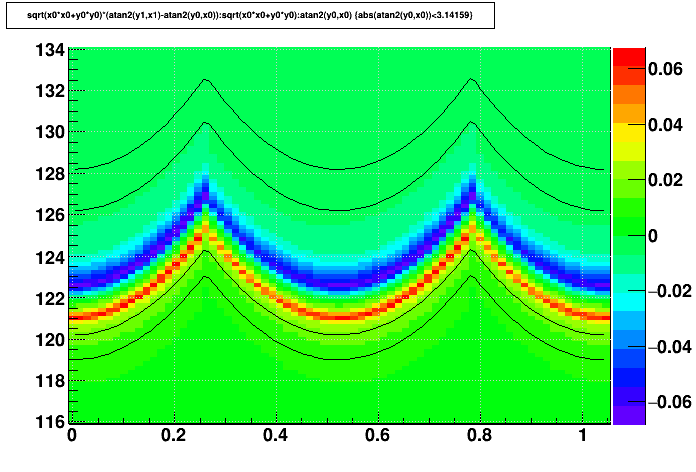

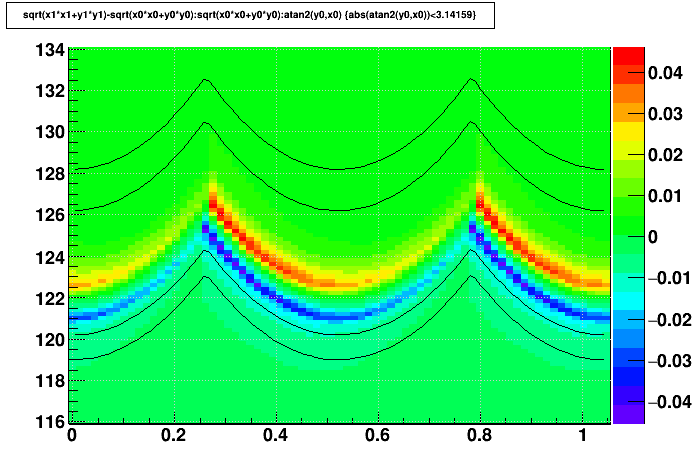

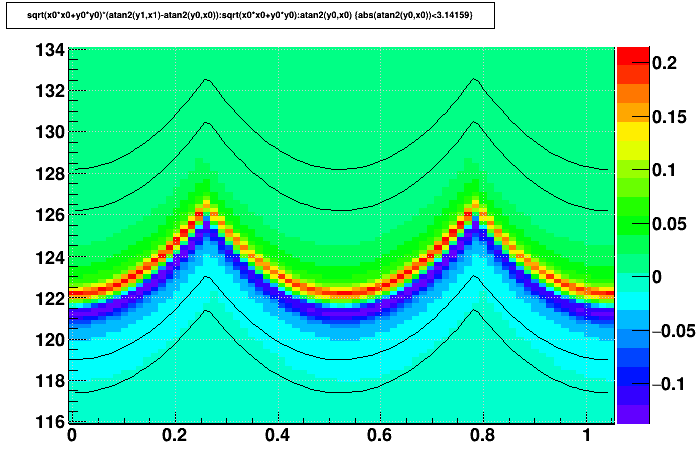

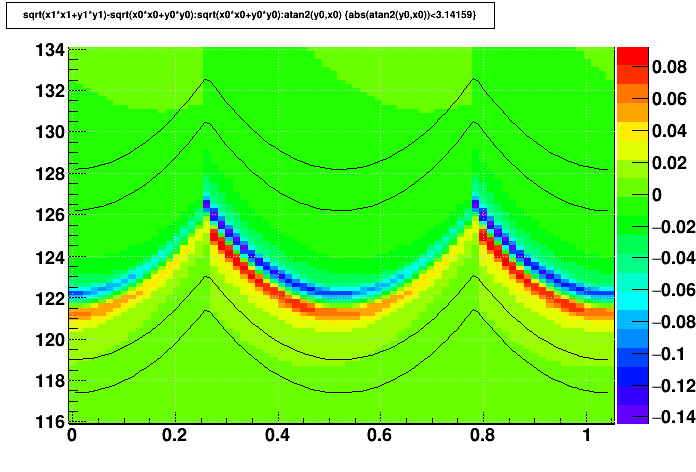

Padrow 40 Distortion

With the iTPC sectors, a wall with conductive stripes was placed at the outer edge of the new inner sectors to impose potentials, primarily to suppress the GridLeak distortion. It was understood that this would modify the static distortion; the maps below show that the static distortions became a few times larger with the wall, but in both cases only at the level of ~100 μm or less at the outermost padrow of the inner sectors. It is also worth noting that the sign of the distortion flipped with respect to Padrow 13.

This distortion correction is determined from the sum of 3 maps that represent the distortion contributions from (1) the wall's grounded structure, (2) the potential at the wall's tip [nominally -115 V], and (3) the potential on the wall's outer side [nominally -450 V]. Plots on this page were made using the nominal potentials.
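Because the three contributions add, the total Padrow 40 correction can be sketched as an element-wise sum of three maps. The sketch below additionally assumes (this is not stated above) that the potential-driven maps scale linearly with the applied potential, as expected from the linearity of electrostatics when distortions are small, so that non-nominal wall voltages can be handled by rescaling. The map layout (2D lists indexed by r and φ bins, in cm) and the function name are hypothetical.

```python
# Nominal wall potentials quoted in the text above (V).
NOMINAL_TIP_V = -115.0
NOMINAL_SIDE_V = -450.0


def total_padrow40_distortion(map_ground, map_tip, map_side,
                              tip_v=NOMINAL_TIP_V, side_v=NOMINAL_SIDE_V):
    """Sum three distortion maps given as 2D lists [i_r][i_phi] (cm).

    map_ground: contribution of the wall's grounded structure.
    map_tip, map_side: contributions computed at the nominal tip and outer-side
    potentials; ASSUMED to scale linearly with the applied potential.
    """
    if not (len(map_ground) == len(map_tip) == len(map_side)):
        raise ValueError("maps must have the same number of r bins")
    k_tip = tip_v / NOMINAL_TIP_V
    k_side = side_v / NOMINAL_SIDE_V
    total = []
    for row_g, row_t, row_s in zip(map_ground, map_tip, map_side):
        if not (len(row_g) == len(row_t) == len(row_s)):
            raise ValueError("maps must have the same number of phi bins")
        total.append([g + k_tip * t + k_side * s
                      for g, t, s in zip(row_g, row_t, row_s)])
    return total


if __name__ == "__main__":
    ground = [[1.0e-3, 2.0e-3]]
    tip = [[5.0e-4, 5.0e-4]]
    side = [[1.0e-4, 2.0e-4]]
    print(total_padrow40_distortion(ground, tip, side))  # nominal potentials
```

With the nominal potentials the scale factors are exactly 1, so this reduces to the plain sum of the three maps described above.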

Distortion correction maps:

The maps are essentially uniform in z, so the maps shown below focus only on the radial and azimuthal dependencies. Further, there is no distortion more than a few centimeters away from the inner/outer boundaries, and there is essentially a 12-fold symmetry azimuthally (though this is not strictly true if different wall voltages are used on different iTPC sectors), so the maps zoom in on a limited region of r and φ to offer a better view of the fine details. Also, the inner and outer edges of the nearest padrows are shown as thin black lines on some plots to help understand their proximity to the distortions.

Open each map in a new web browser tab or window to see higher resolution. Units are [cm] and [radian].

| | Δ(r-φ) vs. r and φ | Δ(r) vs. r and φ |
|---|---|---|
| Padrow 13 | | |
| Padrow 13, with lines indicating locations of rows 13 and 14 | | |
| Padrow 40 | | |
| Padrow 40, with lines indicating locations of rows 40 and 41 | | |

-Gene

RunXI dE/dx calibration recipe

This is Yi Guo's recipe for the Run XI dE/dx calibration.

TPC Hit Errors

TPC T0s

Global T0:

- Instructions (as of 2012, calibrated along with Twist)

- Run 10 calibration

- Run 12 calibration

Twist (ExB) Distortion

ExB (twist) calibration procedure

In 2012, the procedure documentation was updated, including global T0 calibration:

Below are the older instructions.

________________

The procedure here is basically to calculate two beamlines, one using only west TPC data and one using only east TPC data, and then adjust the XTWIST and YTWIST parameters so that the east and west beamlines meet at z=0. The calibration needs to be done every run for each B field configuration. The obtained parameters are stored in the tpcGlobalPosition table with four different flavors: FullMagFNegative, FullMagFPositive, HalfMagFPositive and HalfMagFNegative.
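In practice the east and west beamline intercepts come from the refitting code and the make_res.C / plot_vtx.C macros described below. As a hypothetical stand-in that only illustrates the geometry, the sketch fits straight lines x(z) and y(z) by unweighted least squares to points along each beamline (for example, vertex positions found using only west or only east TPC data), evaluates both lines at z = 0, and returns the west-minus-east offset. The function names and the (x, y, z) input format are assumptions, not part of the STAR code.

```python
def fit_line(zs, vs):
    """Ordinary least-squares fit v = a + b*z; returns (a, b)."""
    n = len(zs)
    if n < 2:
        raise ValueError("need at least two points")
    mean_z = sum(zs) / n
    mean_v = sum(vs) / n
    s_zz = sum((z - mean_z) ** 2 for z in zs)
    if s_zz == 0.0:
        raise ValueError("points must span more than one z value")
    s_zv = sum((z - mean_z) * (v - mean_v) for z, v in zip(zs, vs))
    slope = s_zv / s_zz
    return mean_v - slope * mean_z, slope


def beamline_intercept(vertices):
    """vertices: list of (x, y, z). Returns (x, y) of the fitted beamline at z = 0."""
    zs = [v[2] for v in vertices]
    x0, _ = fit_line(zs, [v[0] for v in vertices])
    y0, _ = fit_line(zs, [v[1] for v in vertices])
    return x0, y0


def east_west_offset(west_vertices, east_vertices):
    """West-minus-east separation of the two beamlines at z = 0."""
    wx, wy = beamline_intercept(west_vertices)
    ex, ey = beamline_intercept(east_vertices)
    return wx - ex, wy - ey
```

A correct twist correction should drive both components of this offset to zero.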

To calculate the beamline intercept the refitting code (originally written by Jamie Dunlop) is used. An older evr-based version used by Javier Castillo for the 2005 heavy ion run can be found at ~startpc/tpcwrkExB_2005, and a version used for the 2006 pp run that uses the minuit vertex finder can be found at ~hjort/tpcwrkExB_2006. Note that for the evr-based version the value of the B field is hard coded at line 578 of pams/global/evr/evr_am.F. All macros referred to below can be found under both of the tpcwrkExB_200X directories referred to above, and some of them under ~hjort have been extensively rewritten.

Step-by-step outline of the procedure:

1. If using evr, set the correct B field and compile.

2. Use the "make_runs.pl" script to prepare your dataset. It will create links to fast offline event.root files in your runsXXX subdirectory (create it first, along with outdirXXX). The script will look for files that were previously processed in the outdirXXX directory and skip over them.

3. Use the "submit.pl" script to submit your jobs. It has advanced options but the standard usage is "submit.pl rc runsXXX outdirXXX" where "rc" indicates to use the code for reconstructed real events. The jobs will create .refitter.root files in your outdirXXX subdirectory.

4. Next, create a file that lists all of the .refitter.root files. A command like this should do it (shown here for an output directory named outdirFF6094): `ls outdirFF6094 | grep refitter | awk '{print "outdirFF6094/" $1}' > outdirFF6094/root.files`

5. Next you run the make_res.C macro (in StRoot/macros). Note that the input and output files are hard coded in this macro. This will create a histos.root file.

6. Finally you run plot_vtx.C (in StRoot/macros) which will create plots showing your beamline intercepts. Note that under ~hjort/tpcwrkExB_2006 there is also a macro called plot_diff.C which can be used to measure the offset between the east/west beams more directly (useful for pp where data isn't as good).

Once you have made a good measurement of the offsets, an iterative procedure is used to find the XTWIST and YTWIST that will make the offset zero:

7. In StRoot/StDbUtilities/StMagUtilities.cxx change the XTWIST and YTWIST parameters to what was used to process the files you analyzed in steps 1-6, and then compile.

8. Run the macro fitDCA2new.C (in StRoot/macros). Jim Thomas produces this macro and you might want to consult with him to see if he has a newer, better version. An up-to-date version as of early 2006 is under ~hjort/tpcwrkExB_2006. When you run this macro it will first ask for a B field and the correction mode, which is 0x20 for this correction. Then it will ask for pt, rapidity, charge and Z0 position. Only Z0 position is really important for our purposes here and typical values to use would be "1.0 0.1 1 0.001". The code will then report the VertexX and VertexY coordinates, which we will call VertexX0 and VertexY0 in the following steps.

9. If we now take VertexX0 and VertexY0 and our measured beamline offsets we can calculate the values for VertexX and VertexY that we want to obtain when we run fitDCA2new.C - call them VertexX_target and VertexY_target:

VertexX_target = (West_interceptX - East_interceptX)/2 + VertexX0

VertexY_target = (West_interceptY - East_interceptY)/2 + VertexY0

The game now is to modify XTWIST and YTWIST in StMagUtilities, recompile, rerun fitDCA2new.C and obtain values for VertexX and VertexY that match VertexX_target and VertexY_target (within 10 microns for heavy ion runs in the past).

10. Once you have found XTWIST and YTWIST parameters you are happy with they can be entered into the db table tpcGlobalPosition as PhiXZ and PhiYZ.

However - IMPORTANT NOTE: XTWIST = 1000 * PhiXZ , but YTWIST = -1000 * PhiYZ.

NOTE THE MINUS SIGN!! What is stored in the database is PhiXZ and PhiYZ. But XTWIST and YTWIST are what are printed in the log files.

Enter the values into the db using AddGlobalPosition.C and a file like tpcGlobalPosition*.C. To check the correction, you need to either use files processed in fast offline with your new XTWIST and YTWIST values or request (re)processing of files.
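Steps 9 and 10 involve simple arithmetic that is easy to get wrong, particularly the sign convention. A minimal sketch, assuming all positions are in cm (the text does not state the units) and that the Y target uses the same West-minus-East form as the X target; the function names are hypothetical and not part of StMagUtilities or the DB macros. The default tolerance corresponds to the 10 micron matching quoted above for past heavy-ion runs.

```python
def vertex_targets(west_x, east_x, west_y, east_y, vertex_x0, vertex_y0):
    """Step 9: targets for the fitDCA2new.C vertex, from measured beamline intercepts."""
    target_x = (west_x - east_x) / 2.0 + vertex_x0
    target_y = (west_y - east_y) / 2.0 + vertex_y0
    return target_x, target_y


def within_tolerance(vertex_x, vertex_y, target_x, target_y, tol_cm=1e-3):
    """True if the fitDCA2new.C vertex matches the targets (default 10 microns)."""
    return abs(vertex_x - target_x) <= tol_cm and abs(vertex_y - target_y) <= tol_cm


def twist_to_db(xtwist, ytwist):
    """Step 10: StMagUtilities XTWIST/YTWIST -> tpcGlobalPosition PhiXZ/PhiYZ.

    XTWIST = 1000 * PhiXZ, but YTWIST = -1000 * PhiYZ (note the minus sign).
    """
    return xtwist / 1000.0, -ytwist / 1000.0


def db_to_twist(phi_xz, phi_yz):
    """Inverse of twist_to_db, e.g. to compare DB values with log-file printouts."""
    return 1000.0 * phi_xz, -1000.0 * phi_yz
```

Keeping the conversion in one pair of inverse functions makes it harder to drop the minus sign on the Y component when moving values between log files and the database.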