- Bulk correlations

- Common

- GPC Paper Review: ANN-ASS pp Elastic Scattering at 200 GeV

- Hard Probes

- Heavy Flavor

- Abstracts, Presentations and Proceedings

- Comparisons between STAR and PHENIX

- HF PWG QM2011 analysis topics

- HF PWG Embedding page

- HF PWG Preliminary plots

- HF PWG Weekly Meeting

- Heavy Quark Physics in Nucleus-Nucleus Collisions Workshop at UCLA

- NPE Analyses

- PWG members and analysis

- PicoDst production requests

- Upsilon Analysis

- Combinatorial background subtraction for e+e- signals

- Estimating Acceptance Uncertainty due to unknown Upsilon Polarization

- Estimating Drell-Yan contribution from NLO calculation.

- Response to PRD referee comments on Upsilon Paper

- Upsilon Analysis in Au+Au 2007

- Upsilon Analysis in d+Au 2008

- Upsilon Analysis in p+p 2009

- Upsilon Paper: pp, d+Au, Au+Au

- Using Pythia 8 to get b-bbar -> e+e-

- Varying the Continuum Contribution to the Dielectron Mass Spectrum

- WWW

- Jet-like correlations

- LFS-UPC

- Measurement of Single Transverse Spin Asymmetry, A_N in Proton–Proton Elastic Scattering at √s = 510 GeV

- Other Groups

- Peripheral Collisions

- Spin

NmbAA calculation

Updated on Thu, 2010-09-02 17:39. Originally created by rjreed on 2010-09-01 16:51.

Under:

NmbAA = #upsilon minbias events in the given centrality bin

upsilon minbias trigger ID 200611

Total # of ups-mb events = sum #ups-mb(i)*prescale(i) where i is done on a run by run basis

To calculate this #, I used Jamie's bytrig.pl script, which returns a cross-section per run #

This cross-section was calculated assuming a "minbias" cross-section of 3.12 b. This number is comes from Jamies assumption that 10 b is the ZDC cross-section, but 3 b is from E&M processes that don't produce any particles and won't fire the vpd-mb trigger. 7 b is the hadronic cross-section as calculated by Glauber, but the vpd will miss about 1 b in the peripheral region. Then, the vpd vertex cut will remove ~1/2 of all triggers giving a cross-section ~3.12 b. This number is very ad hoc, but is necessary to use in order to get the # of events from the macro. To get from the integrated luminosity per run number to the # of minbias events, one calculates int L(i)/min-bias cross section = #minbias triggers(i) x prescale(i). I have confirmed that this works by checking some run numbers calculated by the script versus the runlog.

Summing over all "good" runs gives a total number of upsilon minbias triggers x prescale of 1.57 x 10^9. Attached is a spread sheet of each run number, the number of L2 upsilon triggers, the integrated luminosity per run # and the # of ups-mb triggers x prescale for that run #.

This information was presented to the HF list in presentation at:

http://drupal.star.bnl.gov/STAR/system/files/HF05112010.ppt

The error on this number is essentially zero because the statistical error of a number of order 10^9 is of order 1/10000, see: http://www.star.bnl.gov/HyperNews-star/protected/get/heavy/2753.html for discussion.

Next, we need the number of these events that are in the 0-60% or the 0-10% centrality bin. Since the vpd is inefficient for peripheral collisions, the total number of upsilon minbias events should be higher than what we see in the detector. First, we need to know the reference multiplicity cut for these centrality bins. See:

http://drupal.star.bnl.gov/STAR/system/files/HF06012010.ppt

Centrality

This trigger could not use the standard STAR centrality definitions because the base upsilon minbias trigger had a much wider vpd cut then the +/-30 cm that the more generic minbias trigger had. Beyond 30 cm the acceptance of the TPC changes, which means that the reference Multiplicity will not be constant. The centrality definitions are calculated by integrating a glauber model calculation of the reference Multiplicity.

First, I used Hiroshi Masui's code "run_glauber_mc.C" which runs the actual glauber simulation to generate Ncoll and Npart for 100k throws. The default values are for AuAu 200 GeV. The created root file along with the refMult from the upsilon minbias data set are then analyzed with NbdFitMaker. This code attempts to take Npart and Ncol and turn those into a reference multiplicity by using the two component model to describe the reference multiplicity. See Hiroshi's talk at:

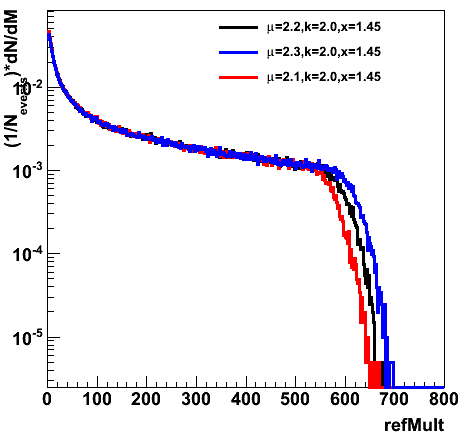

dN/deta = npp[(1-x)Npart/2 + xNcoll] and is convoluted with a negative binomial distribution with 2 free parameters. The calculated RefMult is then matched to the data refMult at high refMults where the trigger efficiency should be 100%. Minuit is not used because it doeos not converge. I found that it was difficult to get these distributions to match exactly at the high end. Stepping through the allowed range of parameters, I found that mu = 2.2, x = 0.145 and k = 2.0 gave the closest result of chi^2/dof of 2.2 (for refMult>100). This resulted in a total efficiency of 84.6% and 100% for centrality 0-60%. Changing x and k by a reasonable amount did not significantly change the centrality defintion. Changing mu did change the centrality defintion slightly.

Figure 1: RefMult distributions versus changes in mu. The default mu was set to 2.4 which is ~npp the mean multipliclity of p+p collisions. This was changed to match the upsilon trigger minbias refmult distribution.

The calculated refMult cuts are > 47+/-3 for 0-60% centrality and >430+/-19 for 0-10% centrality.

Output and input files for this calculation can be found at:

/star/u/rjreed/UpsilonAA2007Paper/GlauberRefMult/

Hiroshi's code used for this exercise can be found at:

/star/u/rjreed/glauber/Try02/

Attached to this note is the "Centcut.C" macro used to generate Figure 1 and calculate the centrality versus refMult and the systematic uncertainty in that number.

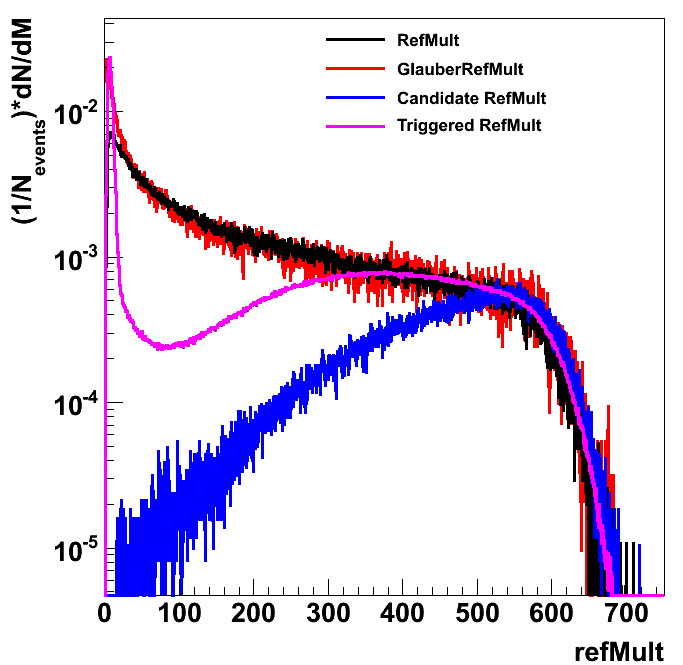

Also of interest is the refMult distributions of the L2 upsilon triggered data set and the candidates. I normalized all these distributions to the glauber calculation at the high end. This is especially true of the candidate distribution as there can be more than one candidate per vertex. For these purposes, I limited the distributions to the index 0 vertices.

Figure 2: Normalized refMult distributions for the upsilon minbias trigger in black, the glauber calculation in red, the L2 upsilon triggered sample in pink and the upsilon candidates in blue. Candidates were chosen from index 0 vertices where the two daughter particles had an R<0.04, -2<nsigmaElectron<3 and E/p<3. Macro used to generate this graph, drawRef2.C is attached to this post.

Another number that is needed from this calculation is Nbin, the number of binary collsisions. I altered Hiroshi's code so that it created a text file with Ncoll, Nbin and refMult. This allowed me to calculate the average Nbin for a particular refMult. For 0-60% centrality, Nbin = 395 +/- 6.5(sys) and for 0-10% centrality Nbin = 964 +/- 27(sys). Additional systematic uncertainties due to the cross-section, Woods-Saxon distribution and exclusion region need to be checked. Attached is a spreadsheet I made from the output text file that allowed me to calculate these numbers. The values were generated with the mu=2.2 k=2.0 x=0.145 values, but the different centrality cuts were applied.

The corrected yield for upsilons is calculated as (1/NmbAA)*(dN/dy). We can cancel out the base minbias efficiency from this number by using the exact same cuts for NmbAA and dN/dy. This means that we merely need to calculate the number of minbias triggers that pass the reference multiplicity cut. For 0-60% centrality, NmbAA = 1.14e9 +0.2e9/-0.1e9 (sys due to centrailty determination) and for 0-10% centrality NmbAA = 1.78e8 +0.25e8/-0.24e8 (sys due to centrality determination)

»

- Printer-friendly version

- Login or register to post comments