Infrastructure

The pages in this tree relates to the Infrastructure sub-group of the S&C team.

The areas comprise: General infrastructure (software, web service, security,...), Online computing, operations and user support.

Online Computing

General

The online Web server front page is available here. This Drupal section will hold complementary informations.

A list of all operation manuals (beyond detector sub-systems) is available at You do not have access to view this node.

Please use it a startup page.

Detector sub-systems operation procedures - Updated 2008, requested confirmation for 2009

- BEMC Detector Operator Manual

- E-EMC Operations

- FTPC detector operator home

- Basic Operation Procedure for STAR PMD

Online computing run preparation plans

This page will list by year action items, run plans and opened questions. It will server as a repository for documents serving as basis for drawing the requirements. To see documents in this tree, you must belong to the Software and Computing OG (the pages are not public).

Run 19

Feedback from software coordinators

Active feedback

| Sub-system | Coordinator | Calibration POC | Online monitoring POC |

| MTD | Rongrong Ma | - same - | - same - |

| EMC |

Raghav Kunnawalkam Elayavalli Nick Lukow |

- same - |

Note: L2algo, bemc and bsmdstatus |

| EPD | Prashant Shanmuganathan | N/A | - same - |

| BTOF | Frank Geurts | - same - | Frank Geurts Zaochen Ye |

| ETOF | Florian Seck | - same - | Florian Seck Philipp Weidenkaff |

| HLT | Hongwei Ke | - same - | - same - |

Other software coordinators

| sub-system | Coordinator |

| iTPC (TPC?) | Irakli Chakaberia |

| Trigger | Akio Ogawa |

| DAQ | Jeff Landgraf |

| ... |

Run 20

Status of calibration timeline initialization

In RUN: EEMC, EMC, EPD, ETOF, GMT, TPC, MTD, TOF

Test: FST, FCS, STGC (no tables)

Desired init dates where announced to all software coordinators:

- Geometry tag has a timestamp of 20191120

- Simulation timeline [20191115,20191120[

- DB initialization for real data [20191125,...]

Please initialize your table content appropriate yi.e.

sim flavor initial values are entered at 20191115 up to 20191119

(please exclude the edge), ofl initial values at 20191125

(run starting on the 1st of December, even tomorrow's cosmic

and commissioning would pick the proper values).

Status - 2019/12/10

EMC = ready

ETOF = ready - initialized at 2019-11-25, no sim (confirming)

TPC = NOT ready [look at year 19 for comparison]

MTD = ready

TOF = Partially ready? INL correction, T0, TDC, status and alignement tables initialized

EPD = gain initialized at 2019-12-15 (!?), status not initialized, no sim

EEMC = ready? (*last init at 2017-12-20)

GMT = ready (*no db tables)

Status - 2019/12/09

EMC = ready

ETOF = ready? initialized at 2019-11-25, no sim

TPC = NOT ready

MTD = ready

TOF = NOT ready

EPD = gain initialized at 2019-12-15 (!?), status not initialized, no sim

EEMC = ready? (*last init at 2017-12-20)

GMT = ready (*no db tables)

Software coordinator feedback for Run 20 - Point of Contacts

| Sub-system | Coordinator | Calibration POC | Online monitoring POC |

| MTD | Rongrong Ma | - same - | - same - |

| EMC EEMC |

Raghav Kunnawalkam Elayavalli Nick Lukow |

- same - |

Note: L2algo, bemc and bsmdstatus |

| EPD | [ TBC] | - same - | - same - |

| BTOF | Frank Geurts | - same - | Frank Geurts Zaochen Ye |

| ETOF | Florian Seck | - same - | Florian Seck Philipp Weidenkaff |

| HLT | Hongwei Ke | - same - | - same - |

| TPC | Irakli Chakaberia | - same - |

Flemming Videbaek

|

| Trigger detectors | Akio Ogawa | - same - | - same - |

| DAQ | Jeff Landgraf | N/A |

---

Run 21

Status of calibration timeline initialization

- Simulation timeline [20201210, 20201215]

- DB initialization for real data [20201220,...]

Status - 2020/12/10

Software coordinator feedback for Run 21 - Point of Contacts

| Sub-system | Coordinator | Calibration POC | Online monitoring POC |

| MTD | Rongrong Ma | - same - | - same - |

| EMC EEMC |

Raghav Kunnawalkam Elayavalli Nick Lukow |

- same - |

Note: L2algo, bemc and bsmdstatus |

| EPD | Prashanth Shanmuganathan (TBC) | Skipper Kagamaster | - same - |

| BTOF | Zaochen | - same - | Frank Geurts Zaochen Ye |

| ETOF | Philipp Weidenkaff | - same - | Philipp Weidenkaff |

| HLT | Hongwei Ke | - same - | - same - |

| TPC | Yuri Fisyak | - same - | Flemming Videbaek |

| Trigger detectors | Akio Ogawa | - same - | - same - |

| DAQ | Jeff Landgraf | N/A | |

| Forward Upgrade | Daniel Brandenburg | - same - | FCS - Akio Ogawa sTGC - Daniel Brandenburg FST - Shenghui Zhang/Zhenyu Ye |

---

Run 22

Status of calibration timeline initialization

- Simulation timeline [20211015, 20211020[

- DB initialization for real data [20211025,...]

Status - 2021/10/13

Software coordinator feedback for Run 22 - Point of Contacts (TBC)

| Sub-system | Coordinator | Calibration POC | Online monitoring POC |

| MTD | Rongrong Ma | - same - | - same - |

| EMC EEMC |

Raghav Kunnawalkam Elayavalli |

- same - |

Note: L2algo, bemc and bsmdstatus |

| EPD | Prashanth Shanmuganathan (TBC) | Skipper Kagamaster | - same - |

| BTOF | Zaochen | - same - | Frank Geurts Zaochen Ye |

| ETOF | Philipp Weidenkaff | - same - | Philipp Weidenkaff |

| HLT | Hongwei Ke | - same - | - same - |

| TPC | Yuri Fisyak | - same - | Flemming Videbaek |

| Trigger detectors | Akio Ogawa | - same - | - same - |

| DAQ | Jeff Landgraf | N/A | |

| Forward Upgrade | Daniel Brandenburg | - same - | FCS - Akio Ogawa sTGC - Daniel Brandenburg FST - Shenghui Zhang/Zhenyu Ye |

---

Run XIII

Preparation meeting minutes

- Preparation meeting #1 (this included a summary of the You do not have access to view this node)

- Preparation meeting #2 (this meeting was informal, meeting #3 bundled two weeks progress)

- Preparation meeting #3 (in meeting #4 announcement)

- Preparation meeting #4 (minutes were not sent)

- Preparation meeting #5

- Preparation meeting #6

- Preparation meeting #7

- Preparation meeting #8

- Preparation meeting #9

- Preparation meeting #10 (informally met with a few team members, no formal meeting)

- Run support meeting, #11

- Run support meeting, #12

- Run support meeting #13 (minutes were not sent - all action items followed-up)

- Run support meeting #14

- Run support meeting #15

- Run support meeting #16

Database initialization check list

TPC Software – Richard Witt NO GMT Software – Richard Witt NO EMC2 Software - Alice Ohlson Yes FGT Software - Anselm Vossen Yes FMS Software - Thomas Burton Yes TOF Software - Frank Geurts Yes Trigger Detectors - Akio Ogawa ?? HFT Software - Spyridon Margetis NO (no DB interface, hard-coded values in preview codes)

Calibration Point of Contacts per sub-system

If a name is missing, the POC role falls onto the coordinator.

Coordinator Possible POC

------------ ---------------

TPC Software – Richard Witt

GMT Software – Richard Witt

EMC2 Software - Alice Ohlson Alice Ohlson

FGT Software - Anselm Vossen

FMS Software - Thomas Burton Thomas Burton

TOF Software - Frank Geurts

Trigger Detectors - Akio Ogawa

HFT Software - Spyridon Margetis Hao Qiu

Online Monitoring POC

The final list from the SPin PWGC can be found at You do not have access to view this node . The table below includes the Spin PWGC feedback and other feedbacks merged.| Directories we inferred are being used (as reported in the RTS Hypernews) | |||

| scaler | Len Eun and Ernst Sichtermann (LBL) | This directory usage was indirectly reported | |

| SlowControl | James F Ross (Creighton) | ||

| HLT | Qi-Ye Shou | The 2012 directory had a recent timestamp but owned by mnaglis. Aihong Tang contacted 2013/02/12 Answer from Qi-Ye Shou 2013/02/12 - will be POC. |

|

| fmsStatus | Yuxi Pan (UCLA) | This was not requested but the 2011 directory is being overwritten by user=yuxip FMS software coordinator contacted for confirmation 2013/02/12 Yuxi Pan confirmed 2013/02/13 as POC for this directory |

|

| Spin PWG monitoring related directories follows |

|||

| L0trg | Pibero Djawotho (TAMU) | ||

| L2algo | Maxence Vandenbroucke (Temple) | ||

| cdev | Kevin Adkins (UKY) | ||

| zdc | Len Eun and Ernst Sichtermann (LBL) | ||

| bsmdStatus | Keith Landry (UCLA) | ||

| emcStatus | Keith Landry (UCLA) | ||

| fgtStatus | Xuan Li (Temple) | This directory is also being written by user=akio causing protection access and possible clash problems. POC contacted on 2013/02/08, both Akio and POC contacted again 2013/02/12 -> confirmed as OK. |

|

| bbc | Prashanth (KSU) | ||

Run XIV

- Meeting minutes

- Pre run-preparation notes (basic checks, annoucements)

- Database initialization check list

- Calibration Point of Contacts per sub-system

- Online Monitoring POC

Preparation meeting meetings, links

- You do not have access to view this node

- You do not have access to view this node

- You do not have access to view this node

- You do not have access to view this node

- You do not have access to view this node

- You do not have access to view this node

- You do not have access to view this node

- You do not have access to view this node

- You do not have access to view this node

- You do not have access to view this node

Notes

- 2013/11/15

- Info gathering begins (directories/areas and Point of Contacts)

Status:

2013/11/22, directory structure, 2 people provided feedback, Renee coordinated the rest

2013/11/25, calibration POC, 3 coordinators provided feedback - Closed 2013/12/04

2013/12/04, geometry for Run 14,

- Basic check: CERT for online is old if coming from the Wireless

Status: fixed at ITD level, 2013/11/18 - the reverse proxy did not have the proper CERT

- Info gathering begins (directories/areas and Point of Contacts)

- 2013/1125

- Send the request to software sub-system cooridnators for calibration POC for Run 14, deadline December 2nd.

Database initialization check list

This actions suggested by this section has not started yet.| Sub-system | Coordinator | Check done |

| DAQ |

Jeff Landgraf | |

| TPC | Richard Witt | |

| GMT | Richard Witt | |

| EMC2 | Mike Skoby Kevin Adkins |

|

| FMS | Thomas Burton | |

| TOF | Daniel Brandenburg | |

| MTD | Rongrong Ma | |

| HFT | Spiros Margetis | (not known) |

| Trigger | Akio Ogawa | |

| FGT | Xuan Li |

Calibration Point of Contacts per sub-system

"-" indicates no feedback was provided. But if a name is missing, the POC role falls onto the coordinator.| Sub-system | Coordinator | Calibration POC |

| DAQ | Jeff Landgraf | - |

| TPC | Richard Witt | - |

| GMT | Richard Witt | - |

| EMC2 | Mike Skoby Kevn Adkins |

- |

| FMS | Thomas Burton | - |

| TOF | Daniel Brandenburg | - |

| MTD | Rongrong Ma | Bingchu Huan |

| HFT | Spiros Margetis | Jonathan Bouchet |

| Trigger | Akio Ogawa | - |

| FGT | Xuan Li | N/A |

Online Monitoring POC

| Not needed 2013/11/25 | ||

| SlowControl | Chanaka DeSilva | OKed on second Run preparation meeting |

| HLT | Zhengquia Zhang | Learn incidently on 2014/01/28 |

| HFT | Shusu Shi | Learn about it on 2014/02/26 |

| Not needed 2013/11/25 | ||

| L0trg | Zilong Chang Mike Skoby |

Informed 2013/11/10 and created 2013/11/15 |

| L2algo | Nihar Sahoo | Informed 2013/11/25 |

| Not needed 2013/11/25 | ||

| zdc | may not be used (TBC) | |

| bsmdStatus | Janusz Oleniacz | Info will be passed from Keith Landry 2014/01/20 Possible backup, Leszek Kosarzewski 2014/03/26 |

| emcStatus | Janusz Oleniacz | Info will be passed from Keith Landry 2014/01/20 Possible backup, Leszek Kosarzewski 2014/03/26 |

| Not needed 2013/11/25 | ||

| bbc |

Akio Ogawa | Informed 2013/11/15, created same day |

Run XV

Run 15 was preapred essentiallydiscussing with indviduals and a comprehensive page not maintained.

Run XVI

This page will contain feedback related to the preparation of the online setup.

Notes

Online Monitoring POC

| scaler | ||

| SlowControl | ||

| HLT | Zhengqiao | Feedback 2015/11/24 |

| HFT | Guannan Xie | Spiros: Feedback 2015/11/24 |

| Akio: Possibly not needed (TBC). 2016/01/13 noted this was not used in Run 15 and wil probably never be used again. | ||

| fmsTrg | Confirmed neded 2016/01/13 | |

| fps | Akio: Not neded in Run 16? Perhaps later. | |

| L0trg | Zilong Chang | Zilong: Feedback 2015/11/24 |

| L2algo | Kolja Kauder | Kolja: will be POC - 2015/11/24 |

| cdev | Chanaka DeSilva | |

| zdc | ||

| bsmdStatus | Kolja Kauder | Kolja: will be POC - 2015/11/24 |

| bemcTrgDb | Kolja Kauder | Kolja: will be POC - 2015/11/24 |

| emcStatus | Kolja Kauder | Kolja: will be POC - 2015/11/24 |

| Not needed since Run 14 ... May drop from the list | ||

| bbc |

Akio Ogawa | Feedback 2015/11/24, needed |

| rp |

Calibration Point of Contacts per sub-system

| Sub-system | Coordinator | Calibration POC |

| DAQ | Jeff Landgraf | - |

| TPC | Richard Witt Yuri Fisyak |

- |

| GMT | Richard Witt | - |

| EMC2 | Kolja Kauder Ting Lin |

- |

| FMS | Oleg Eysser | - |

| TOF | Daniel Brandenburg | - |

| MTD | Rongrong Ma | (same confirmed 2015/11/24) |

| HFT | Spiros Margetis | Xin Dong |

| HLT | Hongwei Ke | (same confirmed 2015/11/24) |

| Trigger | Akio Ogawa | - |

| RP | Kin Yip | - |

Database initialization check list

Shift Accounting

This page will now hold the shift accounting pages. They complement the Shift Sign-up process by documenting it.

- Shift Dues and Special Requests Run 20

- Run 19 special requests

- Run 18 shift dues

- You do not have access to view this node

- You do not have access to view this node

- Shift accounting, dues for Run 15 and findings

- You do not have access to view this node

- You do not have access to view this node

- You do not have access to view this node

- ...

Run 18 shift dues

Run 18 Shift Dues & Notes

Period coordinators

As usual, period coordinators are pre-assigned, as arranged by the Spokespersons.

Special arrangements and requests

- Under the family-related policy, the following 6 weeks of offline QA shifts were pre-assigned:

MAR 27 Kevin Adkins (Kentucky)

APR 03 Kevin Adkins

APR 10 Sevil Salur (Rutgers)

APR 17 Richard Witt (USNA/Yale)

MAY 22 Juan Romero (UC Davis)

JUN 12 Terry Tarnowsky (Michigan State)

- Lanny Ray (UT Austin), as QA coordinator, always is pre-assigned the first QA week.

- FIAS remains in “catch-up mode” and is taking extra shifts above their dues. Pre-assigned shifts can be requested in this scenario. FIAS has been pre-assigned 4 Detector Op shifts.

- Bob Tribble (TAMU) requests the evening Shift leader slot during Apr 10-17.

Run 19 special requests

The following pre-assigned slot requests were made.

9 WEEKS PRE-ASSIGNED QA AS FOLLOWS

==================================

Lanny Ray (UT Austin) QA Mar 5

Richard Witt (USNA/Yale) QA Mar 19

Sevil Salur (Rutgers) QA Apr 16

Wei Li (Rice) QA Apr 23

Kevin Adkins (Kentucky) QA May 14

Juan Romero (UC Davis) QA May 21

Jana Bielcikova (NPI, Czech Acad of Sci) QA May 28

Yanfang Liu (TAMU) QA June 25

Yanfang Liu (TAMU) QA July 02

8 WEEKS PRE-ASSIGNED REGULAR SHIFTS AS FOLLOWS

==================================

Bob Tribble (BNL) Feb 05 SL evening

Daniel Kincses (Eotvos) Mar 12 DO Trainee Day

Daniel Kincses (Eotvos) Mar 19 DO Day

Mate Csanad (Eotvos) Mar 12 SC Day

Ronald Pinter (Eotvos) Mar 19 SC Day

Carl Gagliardi (TAMU) May 14 SL day

Carl Gagliardi (TAMU) May 21 SL day

Grazyna Odyniec (LBNL) July 02 SL evening

Shift Dues and Special Requests Run 20

For the calculation of shift dues, there are two considerations.

1) The length of time of the various shift configurations (2 person, 4 person no trainees, 4 person with trainees, plus period coordinators/QA shifts)

2) The percent occupancy of the training shifts

For many years, 2) has hovered about 45%, which is what we used to calculate the dues. Since STAR gives credit for training shifts (as we should) this needs to be factored in or we would not have enough shifts.

The sum total of shifts needed are then divided by the total number of authors minus authors from Russian institutions who can not come to BNL.

date weeks crew training PC OFFLINE

11/26-12/10 2 2 0 0 0

12/10-12/24 2 4 2 1 0

12/24-6/30 27 4 2 1 1

7/02-7/16 2 4 0 1 1

Adding these together (3x a shift for crew, 3x45% for training, plus pc plus offline) gives a total of 522 shifts.

The total number of shifters is 303 - 30 Russian collaborators = 273 people

Giving a total due of 1.9 per author.

For a given institution, their load is calculated as # of authors - # of expert credits x due -> Set to an integer value as cutting collaborators into pieces is non-collegial behavior.

However, this year, this should have been:

date weeks crew training PC OFFLINE

11/26-12/10 2 2 0 0 0

12/10-12/24 2 4 2 1 0

12/24-6/02 23 4 2 1 1

6/02-6/16 2 4 0 1 1

Adding these together (3x a shift for crew, 3x45% for training, plus pc plus offline) gives a total of 456 shifts for a total due of 1.7 per author.

We allowed some people to pre-sign up, due to a couple different reasons.

Family reasons so offline QA:

James Kevin Adkins

Jana Bielčíková

Sevil Selur

Md. Nasim

Yanfang Liu

Additionally, Lanny Ray is given the first QA shift of the year as our experience QA shifter.

This year, to add an incentive to train for shift leader, we allowed people who were doing shift leader training to sign up for both their training shift and their "real" shift early:

Justin Ewigleben

Hanna Zbroszczyk

Jan Vanek

Maria Zurek

Mathew Kelsey

Kun Jiang

Yue-Hang Leung

Both Bob Tribble and Grazyna Odyniec sign up early for a shift leader position in recognition of their schedules and contributions

This year because of the date of Quark Matter and the STAR pre-QM meeting, several people were traveling on Tuesday during the sign up. These people I signed up early as I did not want to punish some of our most active colleagues for the QM timing:

James Daniel Brandenburg

Sooraj Radhakrishnan

3 other cases that were allowed to pre-sign up:

Panjab University had a single person who had the visa to enter the US, and had to take all of their shifts prior to the end of their contract in March. So that the shifter could have some spaces in his shifts for sanity, I signed up:

Jagbir Singh

Eotvos Lorand University stated that travel is complicated for their group, and so it would be good if they could insure that they were all on shift at the same time. Given that they are coming from Europe I signed up:

Mate Csanad

Daniel Kincses

Roland Pinter

Srikanta Tripathy

Frankfurt Institute for Advanced Studies (FIAS) wanted to be able to bring Masters students to do shift, but given the training requirements and timing with school and travel for Europe, this leaves little availability for shift. So I signed up:

Iouri Vassiliev

Artemiy Belousov

Grigory Kozlov

Tools

This is to serve as a repository of information about various STAR tools used in experimental operations.

Implementing SSL (https) in Tomcat using CA generated certificates

The reason for using a certificate from a CA as opposed to a self-signed certificate is that the browser gives a warning screen and asks you to except the certificate in the case of a self-signed certificate. As there already exists a given list of trusted CAs in the browser this step is not needed.The following list of certificates and a key are needed:

Concatenate the following certs into one file in this example I call it: Global_plus_Intermediate.crt/etc/pki/tls/certs/wildcard.star.bnl.gov.Nov.2012.cert – host cert.

/etc/pki/tls/private/wildcard.star.bnl.gov.Nov.2012.key – host key (don’t give this one out)

/etc/pki/tls/certs/GlobalSignIntermediate.crt – intermediate cert.

/etc/pki/tls/certs/GlobalSignRootCA_ExtendedSSL.crt –root cert.

/etc/pki/tls/certs/ca-bundle.crt – a big list of many cert.

cat /etc/pki/tls/certs/GlobalSignIntermediate.crt > Global_plus_Intermediate.crt cat /etc/pki/tls/certs/GlobalSignRootCA_ExtendedSSL.crt >> Global_plus_Intermediate.crt cat /etc/pki/tls/certs/ca-bundle.crt >> Global_plus_Intermediate.crt

Run this command. Note that “-name tomcat” and “-caname root” should not be changed to any other value. The command will still work but will fail under tomcat. If it works you will be asked for a password, that password should be set to "changeit".

openssl pkcs12 -export -in wildcard.star.bnl.gov.Nov.2012.cert -inkey wildcard.star.bnl.gov.Nov.2012.key -out mycert.p12 -name tomcat -CAfile Global_plus_Intermediate.crt -caname root -chain

Test the new p12 output file with this command:

keytool -list -v -storetype pkcs12 -keystore mycert.p12

Note it should say: "Certificate chain length: 3"

In tomcat’s the server.xml file add a connector that looks like this:

<Connector port="8443" protocol="HTTP/1.1" SSLEnabled="true" maxThreads="150" scheme="https" secure="true" keystoreFile="/home/lbhajdu/certs/mycert.p12" keystorePass="changeit" keystoreType="PKCS12" clientAuth="false" sslProtocol="TLS"/>

Note the path should be set to the correct path of the certificate. And the p12 file should only be readable by the Tomcat account because it holds the host key.

Online Linux pool

March 15, 2012:

THIS PAGE IS OBSOLETE! It was written as a guide in 2008 for documenting improvements in the online Linux pool, but has not been updated to reflect additional changes to the state of the pool, so not all details are up to date.

One particular detail to be aware of: the name of the pool nodes is now onlNN.starp.bnl.gov, where 01<=NN<=14. The "onllinuxN" names were retired several years ago.

Historical page (circa 2008/9):

Online Linux pool for general experiment support needs

GOAL:

Provide a Linux environment for general computing needs in support of the experiemental operations.

HISTORY (as of approximately June 2008):

A pool of 14 nodes, consisting of four different hardware classes (all circa 2001) has been in existence for several years. For the last three (or more?) years, they have had Scientific Linux 3.x with support for the STAR software environment, along with access to various DAQ and Trigger data sources. The number of significant users has probably been less than 20, with the heaviest usage related to L2. User authentication was originally based on an antique NIS server, to which we had imported the RCF accounts and passwords. Though still alive, we have not kept this NIS information maintained over time. Over time, local accounts on each node became the norm, though of course this is rather tedious. Home directories come in three categories: AFS, NFS on onllinux5, and local home directories on individual nodes. Again, this gets rather tedious to maintain over time.

There are several "special" nodes to be aware of:

- Three of the nodes (onllinux1, 2 and 3) are in the Control Room for direct console login as needed. (The rest are in the DAQ room.)

- onllinux5 has the NFS shared home directories (in /online/users). (NB. /online/users is being backed up by the ITD Networker backup system.)

- onllinux6 is (was?) used for many online database maintenance scripts (check with Mike DePhillps about this -- we had planned to move these scripts to onldb).

- onllinux1 was configured as an NIS slave server, in case the NIS master (starnis01) fails.

PLAN:

For the run starting in 2008 (2009?), we are replacing all of these nodes with newer hardware.

The basic hardware specs for the replacement nodes are:

Dual 2.4 GHZ Intel Xeon processors

1GB RAM

2 x 120 GB IDE disks

These nodes should be configured with Scientific Linux 4.5 (or 4.6 if we can ensure compatibility with STAR software) and support the STAR software environment.

They should have access to various DAQ and Trigger NFS shares. Here is a starter list of mounts:

| SERVER | DIRECTORY on SERVER | LOCAL MOUNT PONT | MOUNT OPTIONS |

| evp.starp | /a | /evp/a | ro |

| evb01.starp | /a | /evb01/a | ro |

| evb01 | /b | /evb01/b | ro |

| evb01 | /c | /evb01/c | ro |

| evb01 | /d | /evb01/d | ro |

| evb02.starp | /a | /evb02/a | ro |

| evb02 | /b | /evb02/b | ro |

| evb02 | /c | /evb02/c | ro |

| evb02 | /d | /evb02/d | ro |

| daqman.starp | /RTS | /daq/RTS | ro |

| daqman | /data | /daq/data | rw |

| daqman | /log | /daq/log | ro |

| trgscratch.starp | /data/trgdata | /trg/trgdata | ro |

| trgscratch.starp | /data/scalerdata | /trg/scalerdata | ro |

| startrg2.starp | /home/startrg/trg/monitor/run9/scalers | /trg/scalermonitor | ro |

| online.star | /export | /onlineweb/www | rw |

WISHLIST Items with good progress:

- <Uniform and easy to maintain user authentication system to replace the current NIS and local account mess. Either a local LDAP, or a glom onto RCF LDAP seems most feasible> -- An ldap server (onlldap.starp.bnl.gov) has been set-up and the 15 onllinux nodes are authenticating to it *BUT* it is using NIS!

- <Shared home directories across the nodes with backups> -- onlldap is also hosting the home directories and sharing them via NFS. EMC Networker is backing up the home directories and Matt A. is recieving the email notifications.

- <Integration into SSH key management system (mechanism depends upon user authentication method(s) selected).> -- The ldap server has been added to the STAR SSH key management system, and users are able to login to the new onlXX nodes with keys now.

- <Common configuration management system> -- Webmin is in use.

- <Ganglia monitoring of the nodes> -- I think this is done...

- <Osiris monitoring of the nodes> -- I think this is done - Matt A. and Wayne B. are receiveing the notices...

WISHLIST Items still needing significant work:

- None?

SSH Key Management

Overview

An SSH public key management system has been developed for STAR (see 2008 J. Phys.: Conf. Ser. 119 072005), with two primary goals stemming from the heightened cyber-security scrutiny at BNL:

- Use of two-factor authentication for remote logins

- Identification and management of remote users accessing our nodes (in particular, the users of "group" accounts which are not tied to one individual) and achieve accountability

A benefit for users also can be seen in the reduction in the number of passwords to remember and type.

In purpose, this system is similar to the RCF's key management system, but is somewhat more powerful because of its flexibility in the association of hosts (client systems), user accounts on those clients, and self-service key installation requests.

Here is a typical scenario of the system usage:

- A sysadmin of a machine named FOO creates a user account named "JDOE" and, if not done already, installs the key_services client.

- A user account 'JDOE' on host 'FOO' is configured in the Key Management system by a key management administrator.

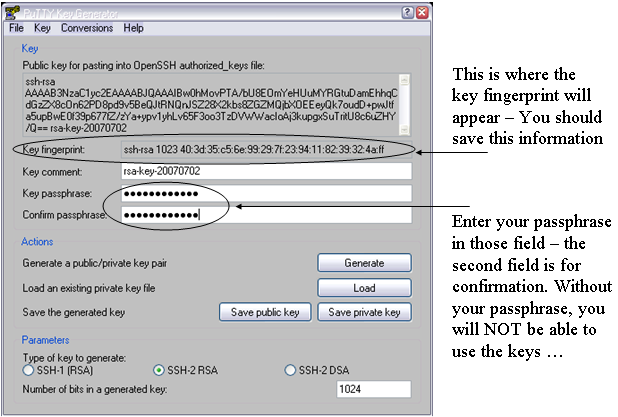

- John Doe uploads (via the web) his or her public ssh key (in openssh format).

- John Doe requests (via the web) that his key be added to JDOE's authorized_keys file on FOO.

- A key management administrator approves the request, and the key_services client places the key in ~JDOE/.ssh/authorized_keys.

At this point, John Doe has key-based access to JDOE@FOO. Simple enough? But wait, there's more! Now John Doe realizes that he also needs access to the group account named "operator" on host BAR. Since his key is already in the key management system he has only to request that his key be added to operator@BAR, and voila (subject to administrator approval), he can now login with his key to both JDOE@FOO and operator@BAR. And if Mr. Doe should leave STAR, then an administrator simply removes him from the system and his keys are removed from both hosts.

Slightly Deeper...

There are three things to keep track of here -- people (and their SSH keys of course), host (client) systems, and user accounts on those hosts:

People want access to specific user accounts at specific hosts.

So the system maintains a list of user accounts for each host system, and a list of people associated with each user account at each host.

(To be clear -- the system does not have any automatic user account detection mechanism at this time -- each desired "user account@host" association has to be added "by hand" by an administrator.)

This Key Management system, as seen by the users (and admins), consists simply of users' web browsers (with https for encryption) and some PHP code on a web server (which we'll call "starkeyw") which inserts uploaded keys and user requests (and administrator's commands) to a backend database (which could be on a different node from the web server if desired).

Behind the scenes, each host that is participating in the system has a keyservices client installed that runs as a system service. The keyservices_client periodically (at five minute intervals by default) interacts a different web server (serving different PHP code that we'll call starkeyd). The backend database is consulted for the list of approved associations and the appropriate keys are downloaded by the client and added to the authorized_keys files accordingly.

In our case, our primary web server at www.star.bnl.gov hosts all the STAR Key Manager (SKM) services (starkeyw and starkeyd via Apache, and a MySQL database), but they could each be on separate servers if desired.

Perhaps a picture will help. See below for a link to an image labelled "SKMS in pictures".

Deployment Status and Future Plans

We have begun using the Key Management system with several nodes and are seeking to add more (currently on a voluntary basis). Only RHEL 3/4/5 and Scientific Linux 3/4/5 with i386 and x86_64 kernels have been tested, but there is no reason to believe that the client couldn't be built on other Linux distributions or even Solaris. We do not anticipate "forcing" this tool onto any detector sub-systems during the 2007 RHIC run, but we do expect it (or something similar) to become mandatory before any future runs. Please contact one of the admins (Wayne Betts, Jerome Lauret or Mike Dephillips) if you'd like to volunteer or have any questions.

User access is currently based on RCF Kerberos authentication, but may be extended to additional authentication methods (eg., BNL LDAP) if the need arises.

Client RPMs (for some configurations) and SRPM's are available, and some installation details are available here:

http://www.star.bnl.gov/~dmitry/skd_setup/

An additional related project is the possible implementation of a STAR ssh gateway system (while disallowing direct login to any of our nodes online) - in effect acting much like the current ssh gateway systems role in the SDCC. Though we have an intended gateway node online (stargw1.starp.bnl.gov, with a spare on hand as well), it's use is not currently required.

Anxious to get started?

Here you go: https://www.star.bnl.gov/starkeyw/

You can use your RCF username and Kerberos password to enter.

When uploading keys, use your SSH public keys - they need to be in OpenSSH format. If not, please consult SSH Keys and login to the SDCC.

Software Infrastructure

On the menu today ...

- General Information

- Infrastructure & Software

Software Release, Sanity, ..

Problem reporting: General RCF Information and problem reporting, STAR problem reporting, - General Tools

- Web Sanity, Software & Documentation tools

- HPSS tools & services

General Information

SOFI stands for SOFtware infrastructure and Infrastructure. It includes any topics related to code standards, tools compiling your code, problems with base code and Infrastructure. SOFI also addresses (or try to address) your need in terms of monitoring or easily manage activities and resources in the STAR environment.

- Discussion list: starsoft-l@lists.bnl.gov (old: starsofi-hn@www.star.bnl.gov)

- Web archive

- RCF liaison meeting support documents

- IO performance of some of our hardware or tested hardware.

Infrastructure & Software

- Current software releases page

- A tutorial exists on Setting up your computing environment and what is defined and how to use it ...

- See the Autobuild & Code Sanity page where you will find the AutoBuild, Insure++, profiling compilation results as well as information about valgrind, Jprof etc ...

- If you are searching for a quick startup documentation for Batch system, resource management system, ...

- General RCF problems should be reported using Computing Facility Issue reporting system (RT).

You should NOT use this system to report STAR-specific problems. Instead, use the STAR Request Tracking system described below. - To report STAR specific problems: Request Tracking (bug and issues tracker) system,

Submitting a problem (bug), help request or issue to the Request Tracking system using Email

You can always submit a report to the bug tracking system by sending an Email directly. There is no need for a personalized account and using the Web Interface is not mandatory. For each BugTracking category, an equivalent @www.star.bnl.gov mailing list exists.

The currently available queues are

bugs-high problem with ANY STAR Software with a need to be fixed without delay bugs-medium problem with ANY STAR Software and must be fixed for the next release bugs-low problem with ANY STAR Software. Should be fixed for the next release comp-support General computing operation support (user, hardware and middleware provisioning) issues-infrstruct Any Infrastructure issues (General software and libraries, tools, network)

issues-scheduler Issues related to the SUMS project (STAR Unified Meta-Scheduler)

issues-xrootd Issues related to the (X)rootd distributed data usage issues-simu Issues related to Simulation grid-general STAR VO general Grid support : job submission, infrastructure, components, testing problem etc ... grid-bnl STAR VO, BNL Grid Operation support grid-lbl STAR VO, LBNL Grid Operation support wishlist Use it for or suggesting what you would wish to see soon, would be nice to have etc ...

You may use the guest account for accessing the Web interface. The magic word is here.

- To create a ticket, select the queue (drop down menu after the create-ticket button). Queues are currently sorted by problem priority. Select the appropriate level. A wishlist queue has been created for your comments and suggestions. After the queue is selected, click on the create-ticket button and fill the form. Please, do not forget the usual information i.e. the result of STAR_LEVELS and of uname -a AND a description of how to reproduce the problem.

- If you want to request a private account instead of using the guest account, send a message to the wishlist queue. There are 2 main reasons for requesting a personalized account :

- If you are planning to be an administrator or a watcher of the bug tracking system (that is, receive tickets automatically, take responsibility for solving them etc ...) you MUST have a private account.

- If you prefer to see the summary and progress of your own submitted tickets at login instead of seeing all tickets submitted under the guest account, you should also ask for a private account.

- At login, the left side panels shows the tickets you have requested and the tickets you own. The right panel shows a the status of all queues. Having a private account setup does NOT mean that you cannot browse the other users tickets. It only affects the left panels summary.

- To find a particular bug, click on search and follow the instructions.

- Finally, if you would like to have a new queue created for a particular purpose (sub-system specific problems), feel free to request to setup such a queue.

General Tools

Data location tools

- Several tools exists to locate data both on disk and in HPSS. Some tools are available from the production page and we will list here only the tools we are developing for the future.

- FileCatalog (command line interface get_file_list.pl and Perl module interface).

- You do not have access to view this node

Resource Monitoring tools

- Nova shows jobs running per users, per machine etc ...

- STAR disk space overall

- GPFS occupancy

- Farm monitoring - Ganglia Reports

- STAR Ganglia reports - those pages required the protected or the ganglia password.

- STAR Offline Ganglia monitoring a tool monitoring global resources.

- The Online Ganglia monitoring page

- RCF/STAR Ganglia reports - you need to use your RCF/Kerberos credentials for the below monitoring

- STAR Ganglia reports - those pages required the protected or the ganglia password.

- Queue Monitoring

- Users with Heavy NFS (GPFS) IO traffic [coming soon]

- Condor Status and Information : Occupancy plot, Usage plot, Pool/Queue plot and Running users and shares statistics.

- (Old and may break soon: pool usage monitoring and condor pool occupancy and general aggregate statistics)

- CRS jobs monitoring for STAR

- Nagios Based Farm Alert

- Cacti network traffic plots (to/from a few selected nodes incuding DB and Xrootd)

Browsers

- Database Browser

- Current RunLog Browser

- 2008 RunLog Browser

- 2007 RunLog Browser

- 2006 RunLog Browser

- ...

- Fast-Offline Browser

Web Sanity, Software & documentation tools

Web based access and tools

- Available CGIs

- You do not have access to view this node

- RCF Web based Email access

Web Sanity

- You can consult the server's log by using this cgi.

- Usage statistics using awstats.

- Status interafce includes: perl-status, serv-status, serv-info.

Software & documentation auto-generation

- Our STAR Software CVS Repositories browser

Allows browsing the full offline and online CVS repositories with listings showing days since last modification, modifier, and log message of last commit, display and download (checkout) access to code, access to all file versions and tags, and diff'ing between consecutive or arbitrary versions, direct file-level access to the cross-referenced presentation of a file, ... You can also sort by - Doxygen Code documentation (what is already doxygenized )

and the User documentation (a quick startup ...) Our current Code documentation is generated using the doxygen generator. Two utilities exists to help you with this new documentation scheme :- doxygenize is a utility which takes as argument a list of header files and modify them to include a "startup" doxygen tag documentation. It tries to guess the comment block, the author and the class name based on content. The current version also documents struct and enum lists. Your are INVITED TO CHECK the result before committing anything. I have tested on several class headers but there is always the exception where the parsing fails ...

- An interface to doxygen named doxycron.pl was created and installed on our Linux machines to satisfy the need of users to generate the documentation by themselves for checking purposes. That same generator interface is used to produce our Code documentation every day so, a simple convention has been chosen to accomplish both tasks. But why doxycron.plinstead of directly using doxygen? If you are a doxygen expert, the answer is 'indeed, why ?'. If not, I hope you will appreciate that doxycron.pl not only takes care of everything for you (like creating the directory structure, a default actually-functional configuration file, safely creating a new documentation set etc ....) but also adds a few more tasks to its list you normally have to do it yourself when using doxygen base tools (index creation, sorting of run-time errors etc ...). This being said, let me describe this tool now ...

The syntax for doxycron.pl is

% doxycron.pl [-i] PathWhereDocWillBeGenerated Path(s)ToScanForCode Project(s)Name SubDir(s)Tag

The arguments are:- -i is used here to disable the doxytag execution, a useless pass if you only want to test your documentation.

- PathWhereDocWillBeGenerated is the path where the documentation tree will be or TARGETD

- Path(s)ToScanForCode is the path where the sources are or INDEXD (default is the comma separated list /afs/rhic.bnl.gov/star/packages/dev/include,/afs/rhic.bnl.gov/star/packages/dev/StRoot)

- Project(s)Name is a project name (list) or PROJECT (default is the comma separated include,StRoot)

- SubDir(s)Tag an optional tag (list) for an extra tree level or SUBDIR. The default is the comma separated list include, . Note that the last element is null i.e. "". When encountered, the null portion of a SUBDIR list will tell doxycron.pl to generate an searchable index based all previous non-null SUBDIR in the list.

Note that if one uses lists instead of single values, then, ALL arguments MUST be a list and the first 3 are mandatory.

To pass an empty argument in a list, you must use quotations as in the following example

% doxycron.pl /star/u/jeromel/work/doxygen /star/u/jeromel/work/STAR/.$STAR_HOST_SYS/DEV/include,/star/u/jeromel/work/STAR/DEV/StRoot include,StRoot 'include, '

In order to make it clear what the conventions are, let's describe a step by step example as follow:

Examples 1 (simple / brief explaination):

% doxycron.pl `pwd` `pwd`/dev/StRoot StRoot

would create a directory dox/ in `pwd` containing the code documentation generated from the relative tree dev/StRoot for the project named StRoot. Likely, this (or similar) will generate the documentation you need.

Example 2 (fully explained):

% doxycron.pl /star/u/jeromel/work/doxygen /star/u/jeromel/work/STAR/DEV/StRoot Test

In this example, I scan any source code found in my local cvs checked-out area /star/u/jeromel/work/STAR/DEV starting from StRoot. The output tree structure (where the documentation will end) are requested to be in TARGETD=/star/u/jeromel/work/doxygen. In order to accomplish this, doxycron.pl will check and do the following:- Check that the doxygen program is installed

- Create (if it does not exists) $TARGETD/dox directory where everything will be stored and the tree will start

- Search for a $TARGETD/dox/$PROJECT.cfg file. If it does not exists, a default configuration file will be created. In our example, the name of the configuration file defaults to /star/u/jeromel/work/doxygen/dox/Test.cfg. You can play with several configuration file by changing the project name. However, changing the project name would not lead to placing the documents in a different directory tree. You have to play with the $SUBDIR value for that.

- The $SUBDIR variable is not used in our example. If I had chosen it to be, let's say, /bof, the documentation would have been created in $TARGETD/dox/bof instead but the template is still expected to be $TARGETD/dox/$PROJECT.cfg.

The configuration file should be considered as a template file, not a real configuration file. Any item appearing with a value like Auto-> or Fixed-> will be replaced on the fly by the appropriate value before doxygen is run. This ensures keeping the conventions tidy and clean. You actually, do not have to think about it neither, it works :) ... If it does not, please, let me know. Note that the temporary configuration file will be created in /tmp on the local machine and left there after running.

What else does one need to know : the way doxycron.pl works is the safest I could think off. Each new documentation set is re-generated from scratch, that is, using temporary directories, renaming old ones and deleting very old ones. After doxycron.pl has completed its tasks, you will end up with the directories $TARGETD/dox$SUBDIR/html and $TARGETD/dox$SUBDIR/latex. The result of the preceding execution of doxycron.pl will be in directories named html.old and latex.old.

One thing will not work for users though : the indexing. The installation of indexing mechanism in doxygen is currently not terribly flexible and fixed values were chosen so that clicking on the Search index link will go to the cgi searching the entire main documentation pages.As a last note, doxygen understands ABSOLUTE path names only and therefore, doxycron.pl will die out if you try to use relative paths as the arguments. Just as a reminder, /titi/toto is an absolute path while things like ./or ./tata are relative path.

HPSS tools & services

- How to retrieve files from HPSS. Please, use the Data Carousel and ONLY the DataCarousel.

Note: DO NOT use hsi to retrieve files from HPSS - this access mode locks tape drives for exclusive use (only you, not shared with any other user) and have dire impacts on STAR;s operations from production to data restores. If you are caught using it, you will be banned from accessing HPSS (your privilege to access HPSS resources will be revoked).

Again - Please, use the Data Carousel.- Data Carousel Quick Start/Tutorial

- Accounting interface (see the result of you requests as they are processed)

- DataCarousel Input file generator for raw files (valid only for what the FastOffline system knows about)

- Archiving into HPSS

Several utilities exists. You can find the reference on the RCF HPSS Service page. Those utilities will bring you directly in the Archive class of service. Note that the DataCarousel can retrieve files from ANY class of service. The prefered mode for archining is the use of htar.

NOTE: You should NOT abuse those services to retreive massive amount of files from HPSS (your operation will otherwise clash with other operations, including stall or slow down data production). Use the DataCarousel instead for massive file retreival. Abuse may lead to supression of access to archival service.

- For rftp, history is in an Hypernews post Using rftp . If you save individual files and have lots of files in a directory, please avoid causing a Meta_data lookup. Meta-data lookup happens when you 'ls -l'. As a reminder, please keep in mind that HPSS is NOT made for neither small files and large amount of files in directories but for massive large files storage (on 2007/10/10 for example, a user crashed HPSS with a single 'ls -l' lookup of a 3000 files directory). In that regard, rftp is most useful if you create first an archive of your files yourself (tar, zip,...) and push the archive into HPSS afterward. If this is not your mode of operation, the preferred method is the use of htar which provides a command-line direct HPSS archive creation interface.

- htar is the recommended mode for archining into HPSS. This utility provides a tar-like interface allowing for bundling together several files or an entire directory tree. Note the syntax of htar and especiallythe extract below from this thread:

If you want the file to be created in /home/<username>/<subdir1> and <subdir1> does not existed yet, use % htar -Pcf /home/<username>/<subdir1>/<filename> <source> If you want the file to be created into /home/<username>/<subdir2> and <subdir2> already exists, use % htar -cf /home/<username>/<subdir2>/<filename> <source>

Please consult the help on the web for more information about htar. - File size is limited to be <55GB, and if exceeded you will get Error -22. In this case consider using split-tar. A simple example/syntax on how to use split-tar is:

% split-tar -s 55G -c blabla.tar blabla/

This will at least create blabla-000.tar but also the next sequences (001, 002, ...) each of 55 GBytes until all files from directory blabla/ are packed. The magic 55 G suggested herein and in many posts works for any generation of drive for the past decade. But a limit of 100-150 GB should also work on most media at BNL as per 2016. See this post for a summary of past pointers. - You may make split-tar archive cross-compatible with htar by creating afterward the htar indexes. To do this, use a command such as

% htar -X -E -f blabla-000.tar

this will create blabla-000.tar.idx you will need to save in HPSS along the archive.

Batch system, resource management system

SUMS (aka, STAR Scheduler)

SUMS, the product of the STAR Scheduler project, stands for Star Unified Meta-Scheduler. This tool is currently documented on its own pages. SUMS provides a uniform user interface to submitting jobs on "a" farm that is, regardless of the batch system used, the language it provides (in XML) is identical. The scheduling is controlled by policies handling all the details on fitting your jobs in the proper queue, requesting proper resource allocation and so on. In other words, it isolates users from the infrastructure details.

You would benefit from starting with the following documents:

- Manual for users

- Description of existing policies and dispatchers

- The SUMS' FAQ

LSF

LSF was dropped from BNL facility support in July 2008 due to licensing cost. Please, refer to the historical revision for information about it. If a link brought you here, please update or send a note to the page owner. Information have been kept un-published You do not have access to view this node.

Condor

Quick start ...

Condor Pools at BNL

The condor pools are segmented into four pools extracted from this RACF page:

| production jobs | +Experiment = "star" | +Job_Type = "crs" | high priority CRS jobs, no time limit, may use all the slots on CRS nodes and up to 1/2 available job slots per system on CAS ; the CRS portion is not available to normal users and using this Job_Type for user will fail |

| users normal jobs | +Experiment = "star" | +Job_Type = "cas" | short jobs, 3 to 5 hour soft limit (when resources are requested by others), 40 hour hard limit - this has higher priority than the "long" Job_Type. |

| user long jobs | +Experiment = "star" | +Job_Type = "long" | long running jobs, 5 day soft limit (when resources are requested by others), 10 day hard limit, may use 1 job slot per system on a subset of machines |

| general queue | +Experiment = "general" +Experiment = "star" |

General queue shared by multiple experiments, 2 hours guaranteed time minimum (can be evicted afterward by any experiment's specific jobs claiming the slot) |

The Condor configurations do not have create a simple notion of queues but generates a notion of pools. Pools are group of resources spanning all STAR machines (RCAS and RCRS nodes) and even other experiment's nodes. The first column tend to suggest four of such pools although we will see below that life is more complicated than that.

First, it is important to understand that the +Experiment attribute is only used for accounting purposes and what makes the difference between a user job or a production job or a general job is really the other attributes.

Selection of how your jobs will run is the role of +Job_Type attribute. When it is unspecified, the general queue (spanning all RHIC machines at the facility) is assumed but your job may not have the same time limit. We will discuss the restriction later. The 4th column of the table above shows the CPU time limits and additional constraints such as the number of slots within a given category one may claim. Note that the +Job_type="crs" is reserved and its access will be enforced by Condor (only starreco may access this type).

In addition of using +Job_type which as we have seen controls what comes as close as possible to a queue in Condor, one may need to restrict its jobs to run on a subset of machines by using the CPU_Type attribute in the Requirements tag (if you are not completely lost by now, you are good ;-0 ). An example to illustrate this:

+Experiment = "star" +Job_type = "cas" Requirements = (CPU_type != "crs") && (CPU_Experiment == "star")

In this example, a cas job (interpret this as "a normal user analysis job") is being run on behalf of the experiment star. The CPU / nodes requested are the CPU belonging to the star experiment and the nodes are not RCRS nodes. By specifying those two requirements, the user is trying to make sure that jobs will be running on RCAS nodes only (or != "crs") AND, regardless of a possible switch to +Experiment="general", the jobs will still be running on the nodes belonging to STAR only.

In this second example

+Experiment = "star" +Job_type = "cas" Requirements = (CPU_Experiment == "star")

we have pretty much the same request as before but the jobs may also run on RCRS nodes. However, if data production runs (+Job_type="crs" only starreco may start), the user's job will likely be evicted (as production jobs will have higher priorities) and the user may not want to risk that hence specifying the first Requirements tag.

Pool rules

A few rules apply (or summarized) below:

- Production jobs cannot be evicted on their claimed slots ... since they have higher priority than user jobs even on CAS nodes, this means that as soon as production jobs starts, its pool of slots will slowly but surely be taken - user's jobs may use those slots at low-downs of utlization.

- Users jobs can be evicte. Eviction happens after 3 hours of runtime from the time they start but only if the slot they are running in is claimed by other jobs. For example, if a production job wants a node being used by a user job that has been running for two hours then that user job has one hour left before it gets kicked out ...

- This time limit comes into effect when a higher priority job wants the slot (i.e. production vs. user or production)

- general queue jobs are evicted after two hours of guaranteed time when the slot is wanted by ANY STAR job (production, user)

- general queue jobs will be evicted however if they consume more than 750 MB of memory

This provides the general structure of the Condor policy in place for STAR. The other policy options in place goes as follows:

- The following options apply to all machines: the 1 mn load has to be less than 1.4 on a two CPU node for a job to start

- General queue jobs will not start on any node unless 1 min < 1.4, swap > 200M, memory > 100M.

- User fairshare is in place.

In the land of confusion ...

Also, users are often confused of the meaning of their job priority. Condor will consider a user's job priority and submit jobs in priority order (where the larger the number, more likely the job willl start) but those priorities have NO meaning across two distinct users. In other words, it is not because user A sets job priorities larger by an order of magitude comparing to user B that his job will start first. Job priority only providesa mechanism for a user to specify which of their idle jobs in the queue are most important. Jobs with higher numerical priority should run before those with lower priority, although because jobs can be submitted from multiple machines, this is not always the case. Job prioritties are listed by the condor_q command in the PRIO column.

The effective user priority is dynamic, on the other hand, and changes as a user has been given access to resources over a period of time. A lower numerical effective user priority (EUP) indicates a higher priority. Condor's fairshare mechanism is implemented via EUP. The condor_userprio command hence provides an indication of your faireshareness.

You should be able to use condor_qedit to manually modify the "Priority" parameter, if desired. If a job does not run for weeks, there is likely a problem with its submitfile or one of its input, and in particular its Requirements line. You can use condor_q -analyze JOBID, or condor_q -better-analyze JOBID to determine why it cannot be scheduled.

What you need to know about Condor

First of all, we recommend you use SUMS to submit to Condor as we would take care of adding codes, tricks, tweaks to make sure your jobs run smoothly. But if you really don't want to, here are a few issues you may encounter:

- Unless you use the GetEnv=true datacard directive in your condor job description, Condor jobs will start with a blank set of environment variables unlike a shell startup. Especially, none of

SHELL, HOME, LOGNAME, PATH, TERM and MAIL

will be define. The absence of $HOME will have for side effect that, whenever a job starts, your .cshrc and .login will not be seen hence, your STAR environment will not be loaded. You must take this into account and execute the STAR login by hand (within your job file).

Note that using GetEnv=true has its own sde effects which includes a full copy of the environment variables as defined from the submitter node. This will NOT be suitable for distributed computing jobs. The use of getenv() C primitive in your code is especially questionable (it will unlikely return a valid value) and- STAR user may look at this post for more information on how to use ROOT function calls for defining some of the above.

- You may also use getent shell command (if exists) to get the value of your home directory

- A combinations of getpwuid(), getpwnam() would allow to define $USER and $HOME

- Condor follows a multi-submitter node model with no centralized repository for all jobs. As a consequence, whenever you use a command such as condor_rm, you would kill the jobs you have submitted from that node only. To kill jobs submitted from other submitter nodes (any interactive node at BNL is a potential submitter node), you need to loop over the possibilities and use the -name command line option.

- Condor will keep your jobs indefinitely in the Pool unless you either remove the jobs or specify a condition allowing for jobs to be automatically removed upon status and expiration time. A few examples below could be used for the PeriodicRemove Condor datacard

- To automatically remove jobs which have been in the queue for more than 2 days but marked as status 5 (held for a reason or another and not moving) use

(((CurrentTime - EnteredCurrentStatus) > (2*24*3600)) && JobStatus == 5)

- To automatically remove jobsruning the the queue for more than 2 days but using less than 10% of the CPU (probably looping or inefficient jobs blocking a job slot), use

(JobStatus == 2 && (CurrentTime - JobCurrentStartDate > (54000)) && ((RemoteUserCpu+RemoteSysCpu)/(CurrentTime-JobCurrentStartDate) < 0.10))

PeriodicRemove = (JobStatus == 2 && (CurrentTime - JobCurrentStartDate > (54000)) && ((RemoteUserCpu+RemoteSysCpu)/(CurrentTime-JobCurrentStartDate) < 0.10)) || (((CurrentTime - EnteredCurrentStatus) > (2*24*3600)) && JobStatus == 5) - To automatically remove jobs which have been in the queue for more than 2 days but marked as status 5 (held for a reason or another and not moving) use

Some condor commands

This is not meant to be an exhaustive set of commands nor a tutorial. You are invited to read to the manpages for condor_submit, condor_rm, condor_q, condor_status. Those will be most of what you will need to use on a daily basis. Help for version 6.9 is available online.

- Query and information

- condor_q -submitter $USER

List jobs of specific submitter $USER from all the queues in the pool - condor_q -submitter $USER -format "%s\n" ClusterID

Shows the JobID for all jobs of $USER. This command may succeed although an unconstrained condor_q may fell if we had a large amount of jobs - condor_q -analyze $JOBID

Perform an approximate analysis to determine how many resources are available to run the requested jobs. - condor_status -submitters

shows the numbers of running/idle/held jobs for each user on all machines - condor_status -claimed

Summarize jobs by servers as claimed - condor_status -avail

Summarize resources which are available

- condor_q -submitter $USER

- Removing jobs, controlling them

- condor_rm $USER

removes all of your jobs submitted from this machine - condor_rm -name $node $USER

removed all jobs for $USER submitted from machine $node

- condor_rm -forcex $JOBID

Forces the immediate local removal of jobs in undefined state (only affects jobs already being removed). This is needed if condor_q -submitter shows your job but condor_q -analyze $JOBID does not (indicating an out of sync information at Condor level).

- condor_release $USER

releases all of your held jobs back into the pending pool for $USER - condor_vacate -fast

may be used to remove all jobs from the submitter node job queue. This is a fast mode command (no checks) and applies to running jobs (not pending ones)

- condor_rm $USER

- More advanced

- condor_status -constraint 'RemoteUser == "$USER@bnl.gov"'

lists the machines on which your jobs are currently running

- condor_q -submitter username -format "%d" ClusterId -format " %d" JobStatus -format " %s\n" Cmd

shows the job id, status, and command for all of your jobs. 1==Idle, 2==Running for Status. I use something like this because the default output of condor_q truncates the command at 80 characters and prevents you from seeing the actually scheduler job ID associated with the Condor job. I'll work on improving this command, but this is what I've got for now. - To access the reason for job 26875.0 to be held from a submitter node advertized to be rcas6007, use the following command to have a human readable format

condor_q -pool condor02.rcf.bnl.gov:9664 -name rcas6007 -format "%s\n" HoldReason 26875.0

- condor_status -constraint 'RemoteUser == "$USER@bnl.gov"'

Computing Environment

The pages below will give you a rapid overview of the computing environment at BNL, including information for visitors and employees, accessible printers, best practices, recommended tools for managing Windows.

FAQs and Tips

Software Site Licenses

Do we have a site license for software package XYZ? The answer is (almost) always: No!

Neither STAR nor BNL have site licences for any Microsoft product, Hummingbird Exceed, WinZIP, ssh.com's software or much of anything intended to run on individual users' desktops. Furthermore, for most purposes BNL-owned computers do not qualify for academic software licenses, though exceptions do exist.

FAQ: PDF creation

How can I create a file in pdf format?Without Adobe Acrobat (an expensive bit of software), this can be a daunting question. I am researching answers, some of which are available in my Windows software tips. Here is the gist of it in a nutshell as I write this -- there are online conversion services and OpenOffice is capable of exporting PDF documents.

FAQ: X Servers

What X server software should I use in Windows?I recommend trying the X Server that is available freely with Cygwin, for which I have created some documentation here: Cygwin Tips. If you can't make that work for you, then I next recommend a commercial product called Xmanager, available from http://www.netsarang.com. Last time I checked, you could still download a fully functional version for a time-limited evaluation period.

TIP: Windows Hibernation trick

Hibernate or Standby -- There is a difference which you might find handy:- "Standby" puts the machine in a low power state from which it can be woken up nearly instantly with some stimulus, such as a keystroke or mouse movement (much like a screensaver) but the state requires a continuous power source. The power required is quite small compared to normal running, but it can eventually deplete the battery (or crash hard if the power is lost in the case of a desktop).

- "Hibernate" actually dumps everything in memory to disk and turns off the computer, then upon restarting it reloads the saved memory and basically is back to where it was. While hibernating, no power source is required. It can't wake up quickly (it takes about as long as a normal bootup), but when it does wake up, (almost) everything is just the way you left it. One caveat about networking is in order here: Stateful connections (eg. ssh logins) are not likely to survive a hibernation mode (though you may be able to enable such a feature if you control both the client and server configurations), but most web browsing activity and email clients, which don't maintain an active connection, can happily resume where they left off.

Imagine: the lightning is starting, and you've got 50 windows open on your desktop that would take an hour to restore from scratch. You want to hibernate now! Here's how to enable hibernating if it isn't showing up in the shutdown box:

Open the Control Panels and open "Power Options". Go to the "Hibernate" tab and make sure the the box to enable Hibernation is checked. When you hit "Turn Off Computer" in the Start menu, if you still only see a Standby button, then try holding down a Shift key -- the Standby button should change to a Hibernate button. Obvious, huh?

For the curious:

There are actually six (or seven depending on what you call "official") ACPI power states, but most motherboards/BIOSes only support a subset of these. To learn more, try Googling "acpi power state", or you can start here as long as this link works. (Note there is an error in the main post -- the S5 state is actually "Shutdown" in Microsoft's terminology).

From the command line, you can play around with these things with such straightforward commands as:

%windir%\System32\rundll32.exe powrprof.dll,SetSuspendState 1

Even more obvious, right? If you like that, then try this on for size.

TIP: My new computer is broken!:

It's almost certainly true - your new computer is faulty and the manufacturer knows it! Unfortunately, that's just a fact of life. Straight out of the box, or after acquiring a used PC, you might just want to have a peek at the vendor's website for various updates that have been released. BIOS updates for the motherboard are a good place to start, as they tend to fix all sorts of niggling problems. Firmware updates for other components are common as are driver updates and software patches for pre-installed software. I've solved a number of problems applying these types of updates, though it can take hours to go through them thoroughly and most of the updates have no noticeable effect. And it is dangerous at times. One anecdote to share here -- we had a common wireless PC Card adapter that was well supported in both Windows and Linux. The vendor provided an updated firmware for the card, installed under Windows. But it turned out that the Linux drivers wouldn't work with the updated firmware. So back we went to reinstall a less new firmware. You'll want to try to be intelligent and discerning in your choices. Dell for instance does a decent job with this (your Dell Service Tag is one very useful key here), but still requires a lot from the updater to help ensure things go smoothly. This of course is in addition to OS updates that are so vital to security and discussed elsewhere.Printers

STAR's publicly available printers are listed below.

| IP name Wireless (Corus) CUPS URL |

IP address | Model | Location | rcf2 Queue Name | Features |

| lj4700.star.bnl.gov http://cups.bnl.gov:631/printers/HP_Color_LaserJet_4700_2 |

130.199.16.220 | HP Color LaserJet 4700DN | 510, room M1-16 | lj4700-star | color, duplexing, driver download site (search for LaserJet 4700, recommend the PCL driver) |

| lj4700-2.star.bnl.gov http://cups.bnl.gov:631/printers/lj4700-2.star.bnl.gov |

130.199.16.221 | HP Color LaserJet 4700DN | 510, room M1-16 | lj4700-2-star | color, duplexing, driver download site (search for LaserJet 4700, recommend the PCL driver) |

| onlprinter1.star.bnl.gov http://cups.bnl.gov/printers/onlprinter1.star.bnl.gov |

130.199.162.165 | HP Color LaserJet 4700DN | 1006, Control Room | staronl1 | color, duplexing |

| chprinter.star.bnl.gov N/A |

130.199.162.178 | HP Color LaserJet 3800dtn | 1006C, mailroom | n/a | color, duplexing |

There are additional printing resources available at BNL, such as large format paper, plotters, lamination and such. Email us at starsupport 'at' bnl.gov and we might be able to help you locate such a resource if needed.

Printing from the wireless (Corus) network

The "standard" way of printing from the wireless network is to go through ITD's CUPS server on the wireless network. How to do this varies from OS to OS, but here is a Windows walkthrough. The key thing is getting the URI for the printer into the right place:

- Open the Printers Control Panel and click "Add a Printer".

- Select the option to add a network printer. (Ignore the list of printers that it generates automatically).

- Click on the button or option for "the printer that I want isn't listed".

- Select the option for a shared printer and enter the green URL from the list above for the printer you want.

eg. http://cups.bnl.gov:631/printers/HP_Color_LaserJet_4700_2 - On the next window, select the hardware manufacturer and model (if not listed, let Windows search for additional models).

- Print a test page and cross your fingers...

- If your test print does not come out, it doesn't necessarily mean your configuration is wrong - sometimes a problem occurs on the the CUPS server that prevents printing - it isn't always easy to tell where the fault lies.

Since printing through ITD's CUPS servers at BNL has not been very reliable, here are some less convenient alternatives to using the printers that you may find handy. (Note that with these, you can even print on our printers while you are offsite - probably not something to do often, but might come in handy sometimes.)

1. Use VPN. But if you are avoiding the internal network altogether for some reason, or can't use the VPN client, then keep reading...

2. Get your files to rcf2.rhic.bnl.gov and print from there. Most of printers listed above have rcf print queues (hence the column "rcf2 queue name"). But if you want to use a printer for which there is no queue on rcf2, or you have a format or file type that you can't figure out how to print from rcf2, then the next tip might be what you need.

3. SSH tunnels can provide a way to talk directly (sort-of) to almost any printer on the campus wired network. At least as far as your laptop's print subsystem is concerned, you will be talking directly to the printer. (This is especially nice if you want to make various configuration changes to the print job through a locally installed driver.) But if you don't understand SSH tunnels, this is gonna look like gibberish:

Here is the basic idea, using the printer in the Control Room. It assumes you have access to both the RSSH and STAR SSH gateways. The ITD SSH gateways might also work in place of rssh (I haven't tried them yet). If they can talk directly to our printers, then it would eliminate step C below. A. From your laptop: ssh -A -L 9100:127.0.0.1:9100 <username>@rssh.rhic.bnl.gov (Note 1: -A is only useful if you are running an ssh-agent with a loaded key, which I highly recommend) (Note 2: Unfortunately, the rssh gateways cannot talk directly to our printers, so we have to create another tunnel to a node that can... If the ITD SSH gateways can communicate directly with the printers, then the next hop would be unnecessary...) B. From the rssh session: ssh -L 9100:130.199.162.165:9100 <username>@stargw1.starp.bnl.gov (Note 1: 130.199.162.165 is the IP address of onlprinter1.star.bnl.gov - it could be replaced with any printer's IP address on the wired network.) (Note 2: port 9100 is the HP JetDirect default port - non-HP printers might not use this, and there are other ways of communicating with HP network printers, so ymmv - but the general idea will work with most TCP communications, if you know the port number in use. C. On your laptop, set up a local print queue as if you were going to print directly to the printer over the network (with no intermediate server), but instead of supplying the printer's IP address, use 127.0.0.1 instead. D. Start printing... If you close either of the ssh sessions above, you will have to re-establish them before you can print again. The two ssh commands can be combined into one and you can create an alias to save typing the whole thing each time. (Or use PuTTY or some other GUI SSH client wrapper to save these details for reuse.) You could set up multiple printers this way, but to use them simultaneously, you would need to use unique port numbers for each one (though the port number at the end of the printer IP would stay 9100).

Direct connection, internal network

You can use direct connections to access them over the network.

- Direct: These printers accept direct TCP/IP connections, without any intermediate server.

- JetDirect (AppSocket) and lpd usually work under Linux.

- For Windows NT/2K/XP, a Standard TCP/IP port is usually the way to go.

How to configure this varies with OS and your installed printing software.

Tips

What follows are miscellaneous tips and suggestions that will be irregularly maintained.

- The 2-sided printers are configured to print 2-sided by default, but the default for many printer drivers will override this and specify 1-sided. If you are printing from Windows, you can usually choose your preferences for this in the printer preferences or configuration GUI. You may need to look in the Advanced Settings and/or Printing Defaults to enable 2-sided printing in Windows.

- Depending on the print method and drivers used, from the Linux command line you may be able to specify various options for things like duplex printing. To see available options for a given print queue, try the "lpoptions" command. For instance, on rcf2 you could do "lpoptions -d xerox7300 -l". In the output, you will find a line like this: "Duplex/2-Sided Printing: DuplexNoTumble *DuplexTumble None" (DuplexNoTumble is the same as flip on long edge, while DuplexTumble is the same as flip on short edge, and the * indicates the default setting.) So to turn off duplex printing, you could do "lp -d xerox7300 -o Duplex=None <filename>". Keep in mind that not all options listed by lpoptions may actually be supported by the printer, and the defaults (especially in the rcf queues) may not be what you'd like. There are so many print systems, options and drivers in Linux/Unix that there's no way to quickly describe all the possible scenarios.

- There is a handy utility called a2ps that is available on most Linux distributions. It is an "Any to PostScript" filter that started as a Text to PostScript converter, with pretty printing features and all the expected features from this kind of program. But it is also able to deal with other file types (PostScript, Texinfo, compressed, whatever...) provided you have the necessary tools installed.

- psresize is another useful utility in Linux for dealing with undesired page sizes. If you are given a PostScript file that specifies A4 paper, but want to print it on US Letter-sized paper, then you can do:

psresize -PA4 -pletter in.ps out.ps

See the man page for more information.

- Some of the newer printers have installation wizards for Windows that can be accessed through their web interfaces. I've had mixed success with the HP IPP installation wizards. The Xerox wizard (linked above) has worked well, though it pops up some unnecessary windows and is a bit on the slow side.

- Windows 9x/Me users will likely have to install software on their machines in order to print directly to these printers. HP and Xerox have such software available for download from their respective support websites, but who uses these OSes anymore?

- For linux users setting up new machines, CUPS at least for recent distros is the default printing system (unless upgrading from an older distribution, in which case LPRng may still be in use). Given an appropriate PPD file, CUPS is capable of utilizing various print options, such as tray selection and duplexing, or at least you can create different queues with different options to a single printer.

- There are other potentially useful printers around that are not catalogued here. Some are STAR printers out of the mainstream (like in 1006D), and some belong to other groups in the physics department.

Quick (?) start guide for visitors with laptops

So you brought a laptop to BNL… and the first thing you want to do is get online, right?

Ok, here's a quick (?) guide to getting what you want without breaking too many rules.

Wired Options:

-

Visitors' network: Dark purple jacks (usually labeled VNxx) are on a visitors' network and are effectively outside of the BNL firewall. They support DHCP and do not require any sort of registration to use. Being outside the firewall can be advantageous, but will prevent you from